A toy model of video generative models -- bottleneck dimension controls "classical"/"quantum" strategies

Author: Ziming Liu (刘子鸣)

Motivation

I am interested in whether video generative models have truly learned “physics” (or at least intuitive physics) from videos. However, verifying this claim is difficult with natural videos, where the ground-truth “physics” is unknown. It is therefore more reasonable to start with synthetic datasets, where the underlying physics is fully controlled and known.

Problem setup

I deliberately keep the algorithmic choices as simple as possible.

Dataset

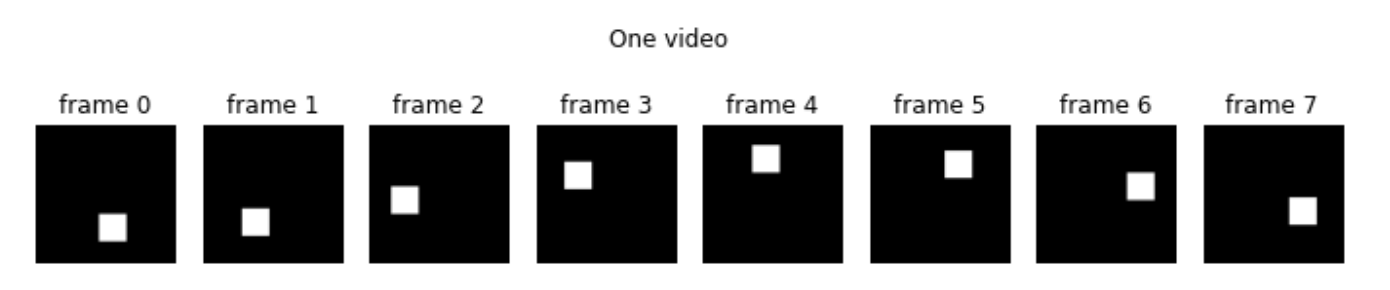

Each frame is a grayscale image containing a square. The center of the square follows a circular trajectory. The radius is randomly sampled, the starting angle is randomly sampled, and the rotation direction (clockwise or counter-clockwise) is also randomly sampled. The angular velocity is fixed at 45 degrees per frame, and each video consists of 8 frames.
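The description above can be turned into a small generator. This is a minimal sketch, not the notebook's actual code: the image size (32), the square's half side (0.15), the radius range (0.2 to 0.8), and all function names are assumptions made for illustration. Only the 8 frames, the 45 degree step, and the three random choices come from the text.

```python
import math
import random

def sample_video_centers(rng, n_frames=8, step_deg=45.0, r_min=0.2, r_max=0.8):
    """Return the (x, y) square centers of one video, relative to the image center."""
    radius = rng.uniform(r_min, r_max)        # random radius (range is an assumption)
    theta0 = rng.uniform(0.0, 2.0 * math.pi)  # random starting angle
    direction = rng.choice([-1, 1])           # -1 = clockwise, +1 = counter-clockwise
    step = math.radians(step_deg)             # fixed angular velocity per frame
    return [
        (radius * math.cos(theta0 + direction * k * step),
         radius * math.sin(theta0 + direction * k * step))
        for k in range(n_frames)
    ]

def render_frame(center, size=32, half_side=0.15):
    """Render one grayscale frame as a size x size list of rows (1.0 inside the square)."""
    cx, cy = center
    frame = []
    for i in range(size):
        y = 1.0 - 2.0 * (i + 0.5) / size      # top row has the largest y
        row = []
        for j in range(size):
            x = 2.0 * (j + 0.5) / size - 1.0  # pixel column -> x in (-1, 1)
            inside = abs(x - cx) <= half_side and abs(y - cy) <= half_side
            row.append(1.0 if inside else 0.0)
        frame.append(row)
    return frame

def sample_video(rng, **kwargs):
    """Sample one video as a list of rendered frames."""
    return [render_frame(c) for c in sample_video_centers(rng, **kwargs)]
```

Since 8 steps of 45 degrees cover a full turn, each video traces the circle exactly once, and frame 4 sits diametrically opposite frame 0.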

Model

We adopt the Stable Diffusion framework. An autoencoder compresses each image into a latent vector \(z\in\mathbb{R}^{d_l}\) (where \(d_l\) is referred to as the embedding dimension below). Both the encoder and the decoder are CNNs.

A video is then compressed into latents. We flatten the latent vectors of individual frames into a single video latent. I use flow matching to generate the video latent.
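To make the flattening and the flow matching step concrete, here is a minimal sketch in plain Python. It assumes the common straight-line path between Gaussian noise and data; the post does not say which path, network, or sampler the notebook uses, so `velocity_fn` is a hypothetical stand-in for the learned velocity network and all function names are illustrative.

```python
import random

def flatten_video(frame_latents):
    """Concatenate per-frame latents (each of length d_l) into one video latent."""
    return [v for z in frame_latents for v in z]

def interpolate(x0, x1, t):
    """Point on the straight path from noise x0 (t=0) to data x1 (t=1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]

def target_velocity(x0, x1):
    """Regression target for the velocity network along the straight path."""
    return [b - a for a, b in zip(x0, x1)]

def flow_matching_loss(velocity_fn, x1, rng):
    """Single-sample loss: mean squared error between v(x_t, t) and (x1 - x0)."""
    x0 = [rng.gauss(0.0, 1.0) for _ in x1]  # noise sample
    t = rng.random()                        # time in [0, 1)
    xt = interpolate(x0, x1, t)
    pred = velocity_fn(xt, t)
    target = target_velocity(x0, x1)
    return sum((p - u) ** 2 for p, u in zip(pred, target)) / len(x1)

def euler_sample(velocity_fn, x0, n_steps=50):
    """Integrate dx/dt = v(x, t) from t=0 to t=1 with fixed-step Euler."""
    x = list(x0)
    dt = 1.0 / n_steps
    for k in range(n_steps):
        v = velocity_fn(x, k * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x
```

With 8 frames and \(d_l = 2\), `flatten_video` yields a 16-dimensional video latent; with \(d_l = 100\) it is 800-dimensional. In this sketch, the intermediate sampling steps discussed later correspond to the intermediate values of `x` inside `euler_sample`.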

Even with this simple setup, there are still many hyperparameters that could be tuned. In this blog, I focus specifically on the role of the embedding dimension.

Small embed dim = “Classical”/“Physical” vs. large embed dim = “Quantum”/“Neural”

One might expect that an embedding dimension of 2 should suffice, since the true degrees of freedom of the square images are only two: the \(x\) and \(y\) coordinates. Indeed, we find that embed dim = 2 works, and embed dim = 100 also works (as expected). However, the two cases appear to use very different algorithms internally.

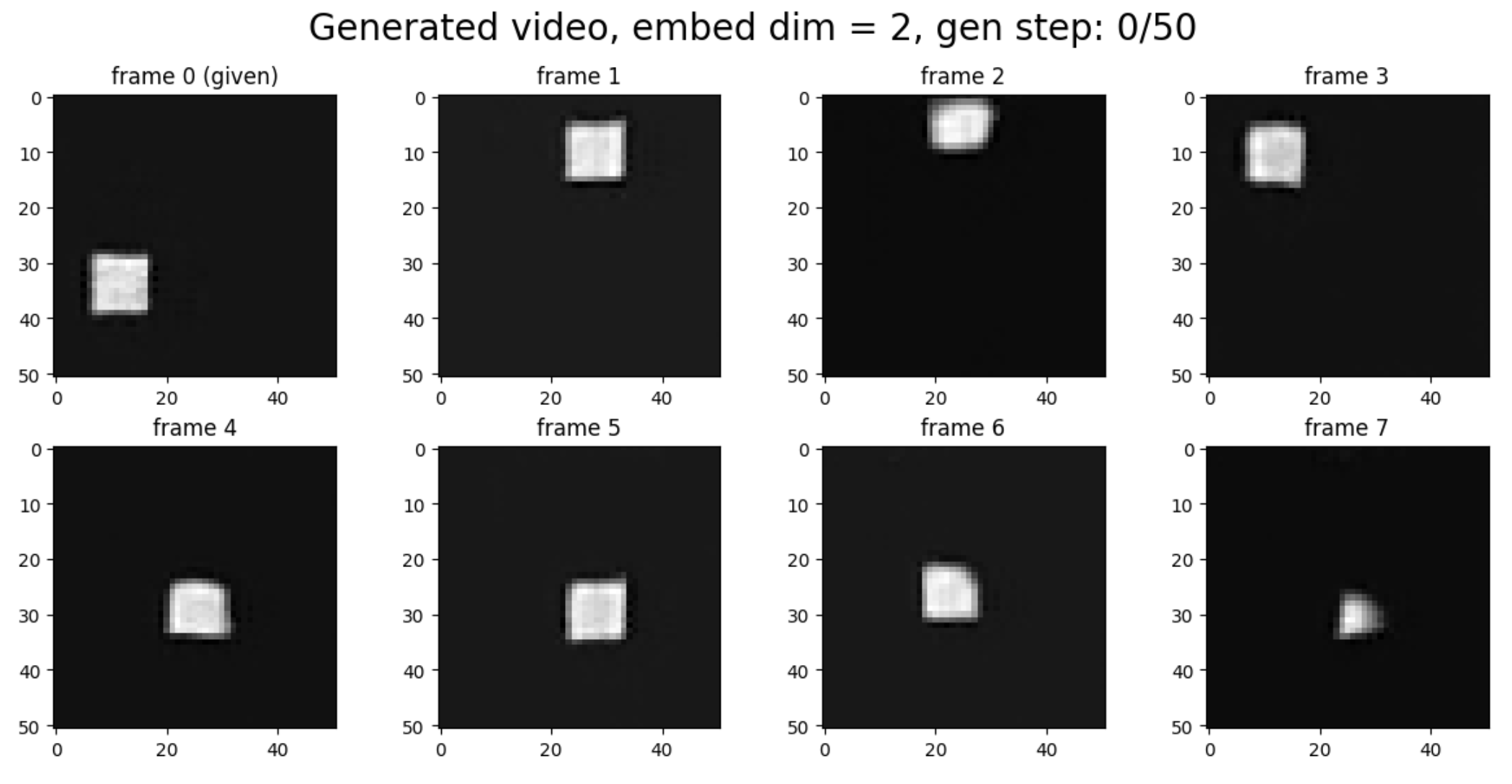

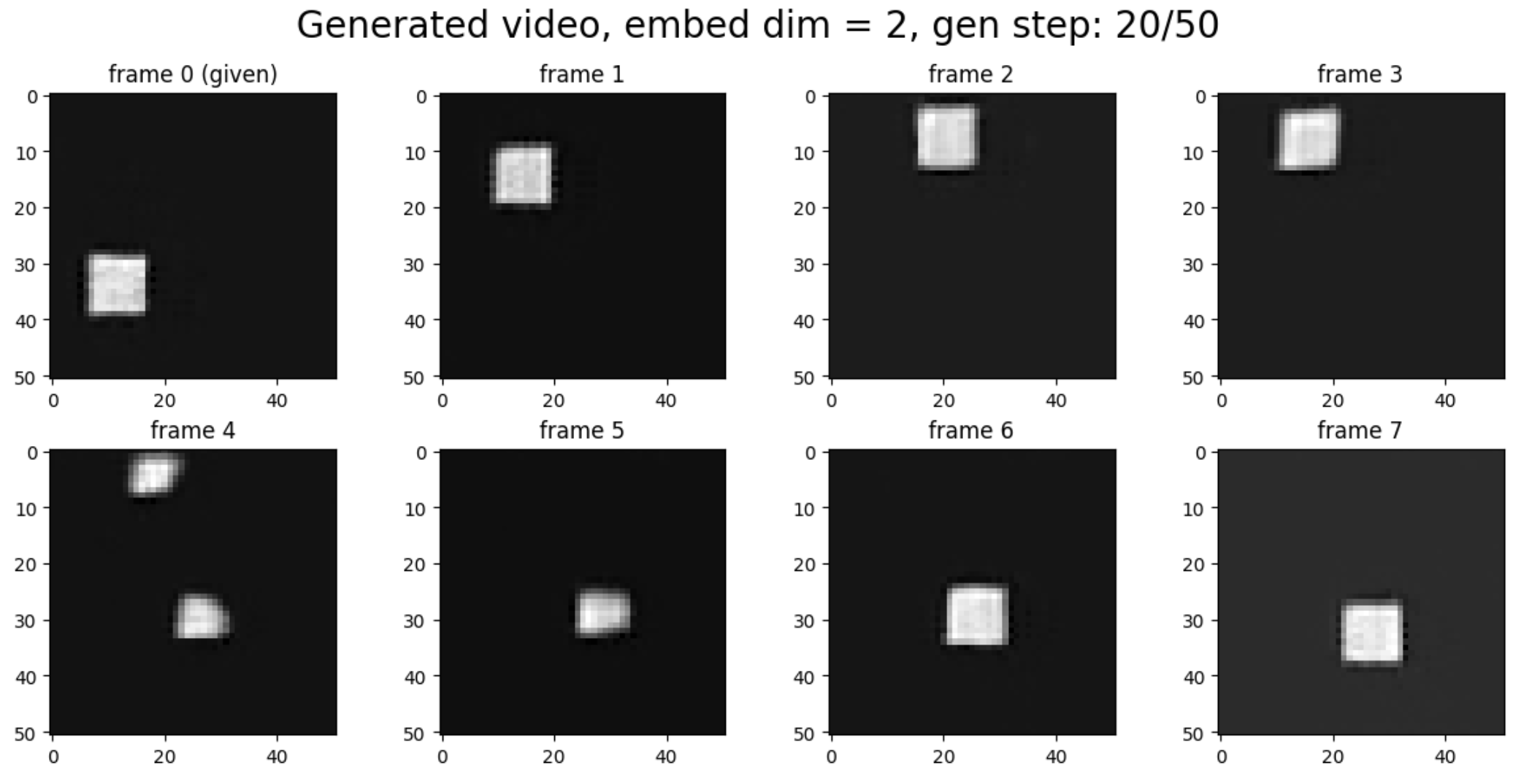

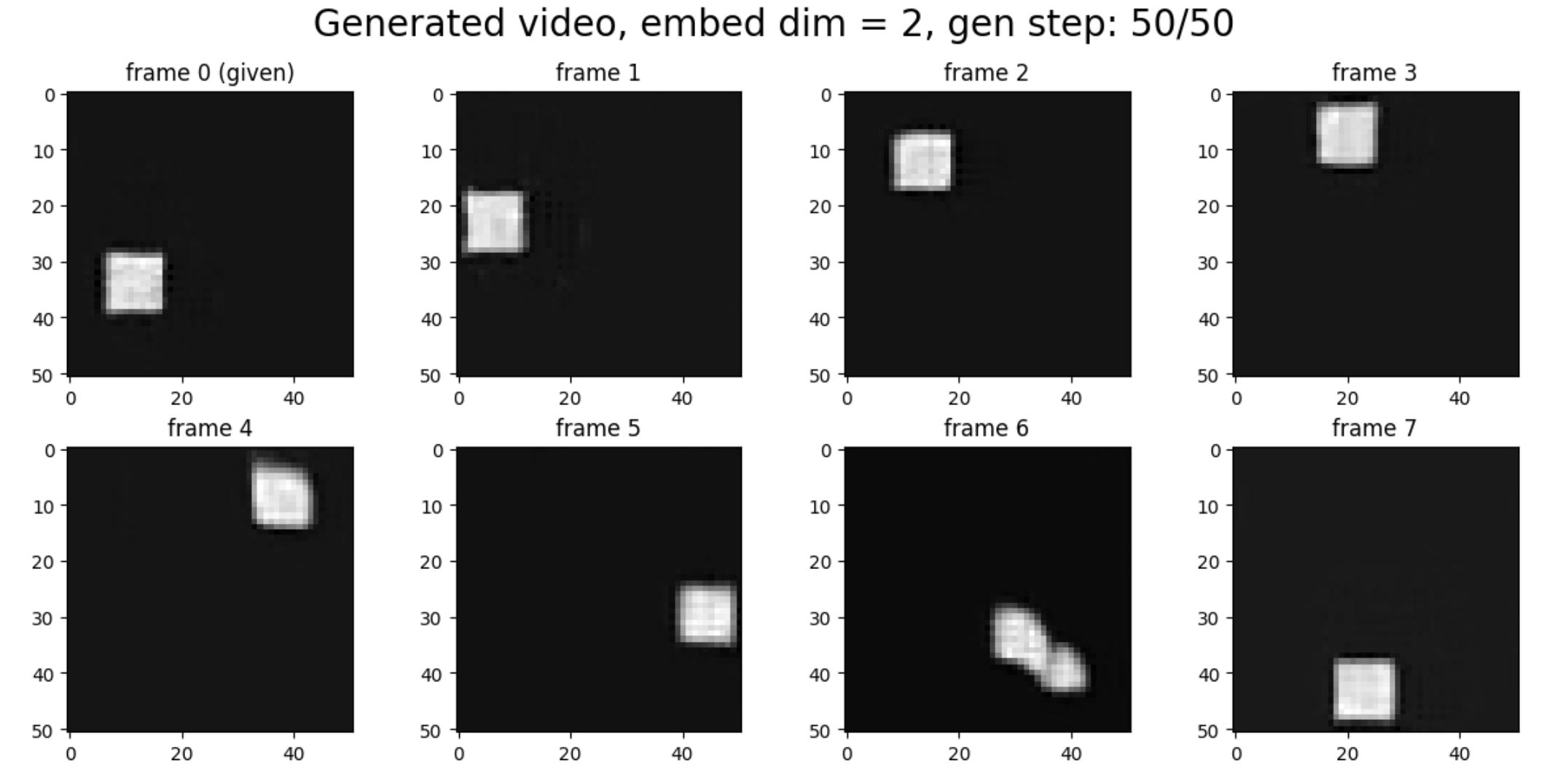

In the experiments below, we provide the first frame and generate the remaining frames.

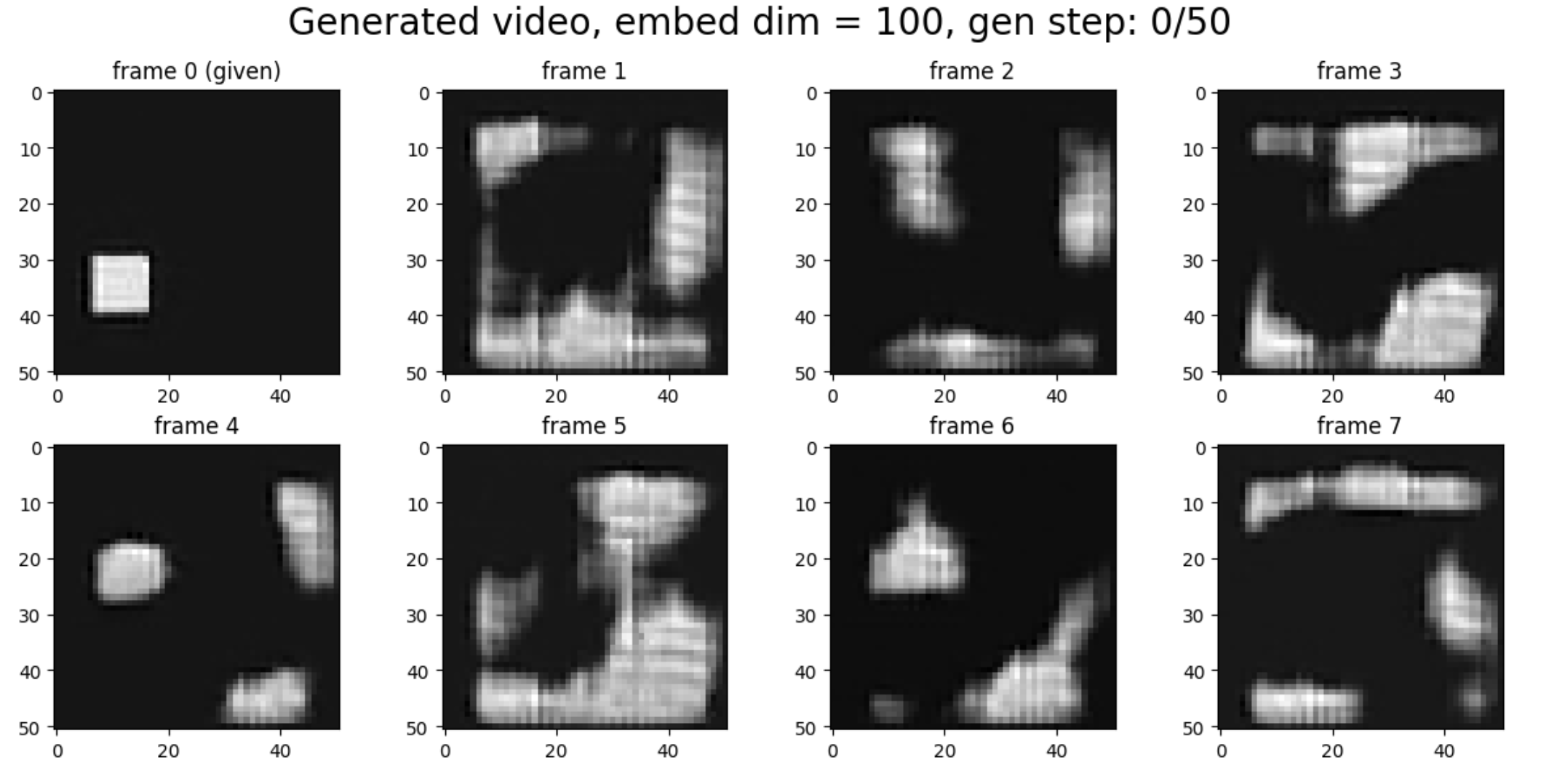

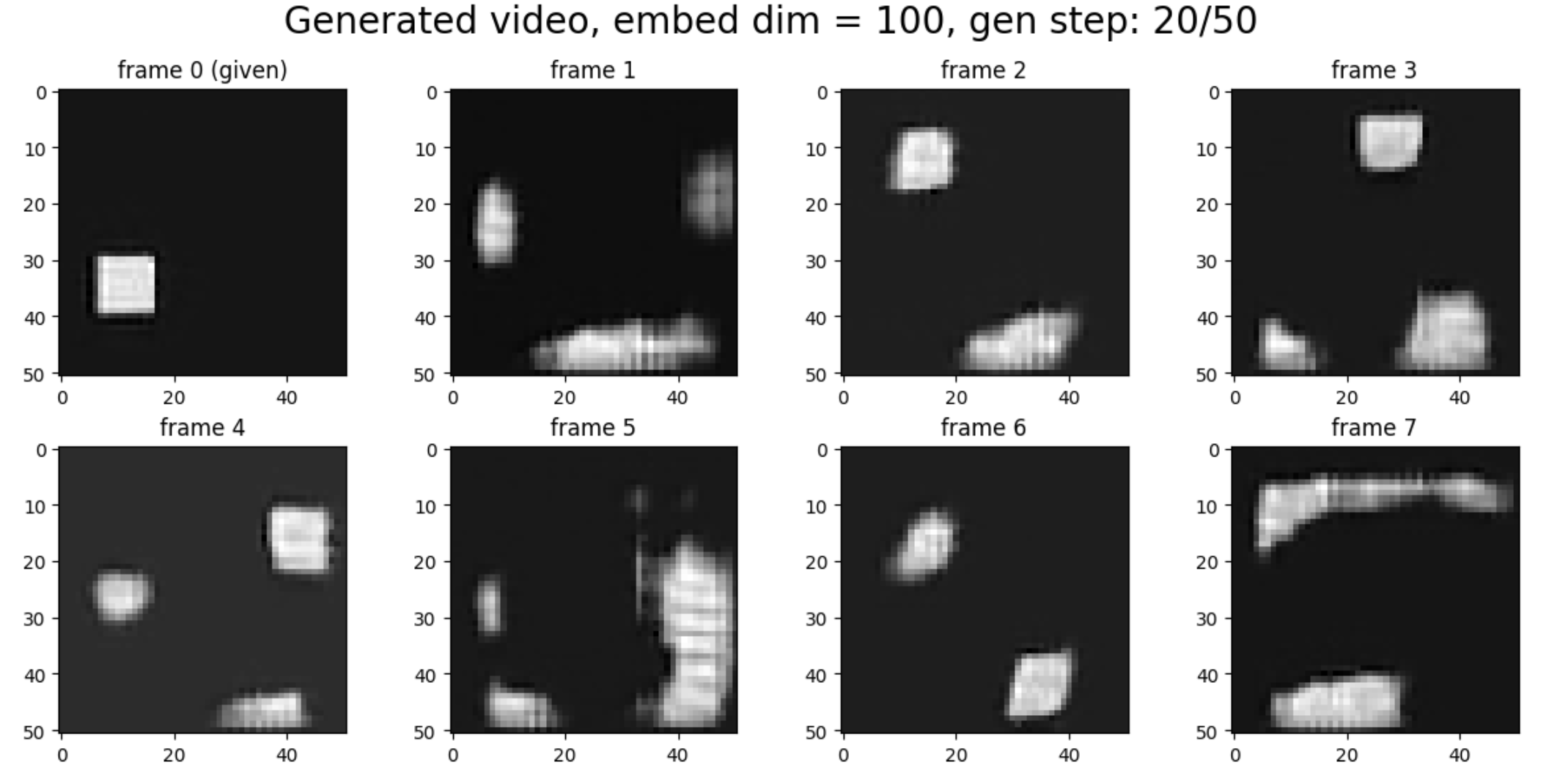

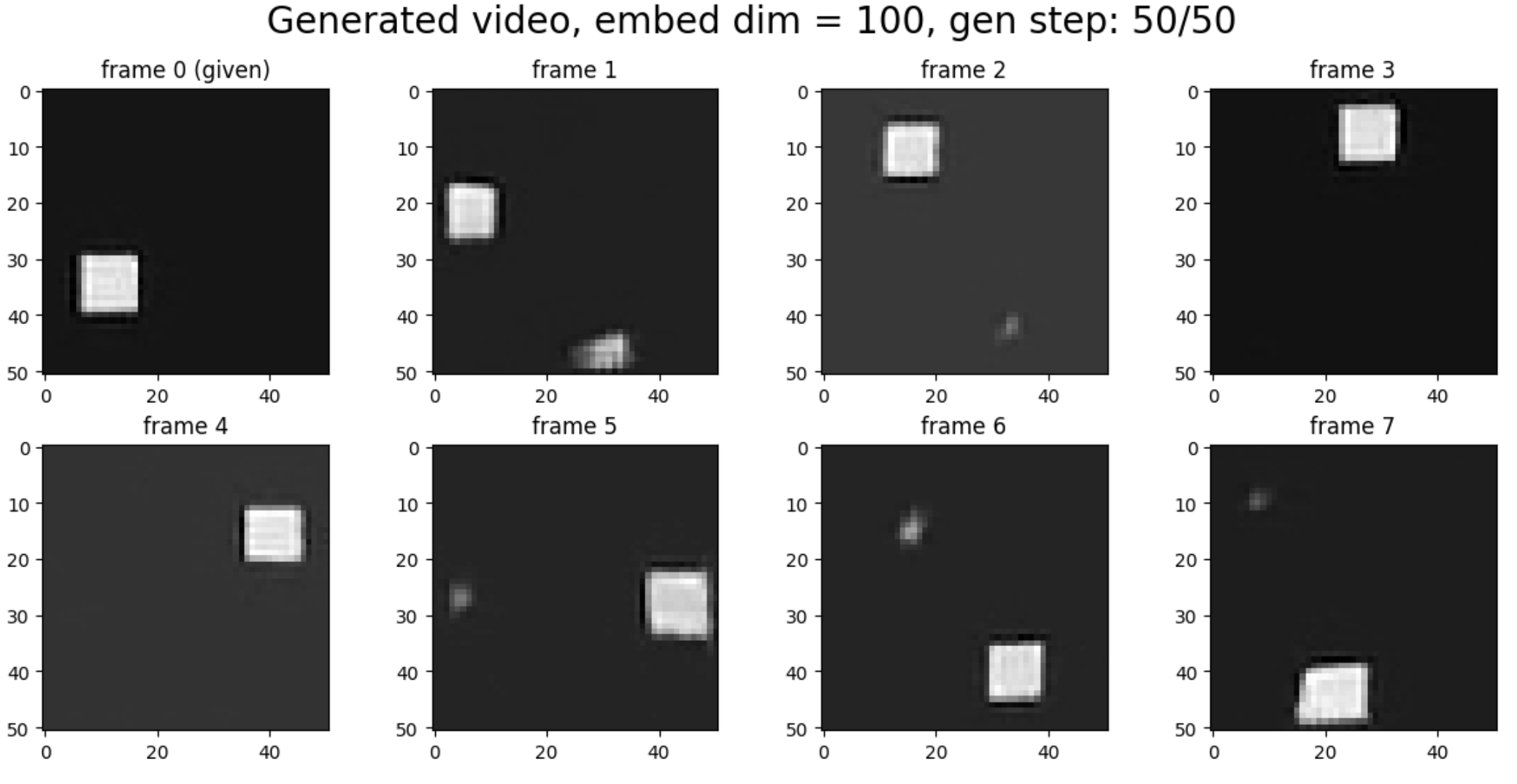

As we will show, embed dim = 2 behaves more like classical mechanics: there is always exactly one square (ignoring minor imperfections during learning), even at early inference steps. The motion happens directly in physical space.

In contrast, embed dim = 100 behaves more like quantum mechanics or place-cell representations in neuroscience. In physics, position is represented explicitly by 2D coordinates. In the brain, however, position may be represented by the firing rates of many place cells. In this representation, the “one-square constraint” can be temporarily broken. We hypothesize that the neural code leverages this flexibility to represent probability distributions during intermediate sampling steps.

In particular, when embed dim = 100 and sampling step = 20 (out of 50), frame 1/2 appears to represent both possibilities simultaneously (the square in frame 0 moving either clockwise or counter-clockwise).
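The following toy calculation illustrates why a distributed code can hold both possibilities at once while a 2D coordinate code cannot. It is only an illustration of the hypothesis, not an analysis of the trained model's latents: the von Mises-style tuning curves, the 100 cells, the width `kappa`, and the function names are all assumptions.

```python
import math

def place_code(theta, n_cells=100, kappa=20.0):
    """Firing rates of n_cells units with evenly spaced preferred angles."""
    return [
        math.exp(kappa * (math.cos(theta - 2.0 * math.pi * i / n_cells) - 1.0))
        for i in range(n_cells)
    ]

def count_bumps(code, threshold=0.25):
    """Count local maxima above threshold on the circular array of cells."""
    n = len(code)
    return sum(
        1 for i in range(n)
        if code[i] > threshold
        and code[i] > code[i - 1]          # code[-1] wraps around the circle
        and code[i] >= code[(i + 1) % n]
    )

theta0 = 0.3                   # angle of the square in frame 0 (arbitrary)
step = math.radians(45.0)      # angular velocity from the dataset
radius = 0.5                   # arbitrary radius
candidates = [theta0 + step, theta0 - step]  # counter-clockwise vs clockwise

# Coordinate code: averaging the two candidates gives ONE point, pulled inside the circle.
mean_xy = (
    sum(radius * math.cos(t) for t in candidates) / 2,
    sum(radius * math.sin(t) for t in candidates) / 2,
)
mean_radius = math.hypot(*mean_xy)  # equals radius * cos(45 degrees)

# Population code: averaging the two candidates keeps TWO separate bumps.
codes = [place_code(t) for t in candidates]
mean_code = [(a + b) / 2 for a, b in zip(*codes)]
n_bumps = count_bumps(mean_code)
```

In the coordinate code, the mixture of the two futures collapses to a single square at the wrong radius. In the population code, the same mixture remains a valid description of "either here or there", which is the flexibility the hypothesis relies on.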

Embed dim = 2

Embed dim = 100

Representation geometry

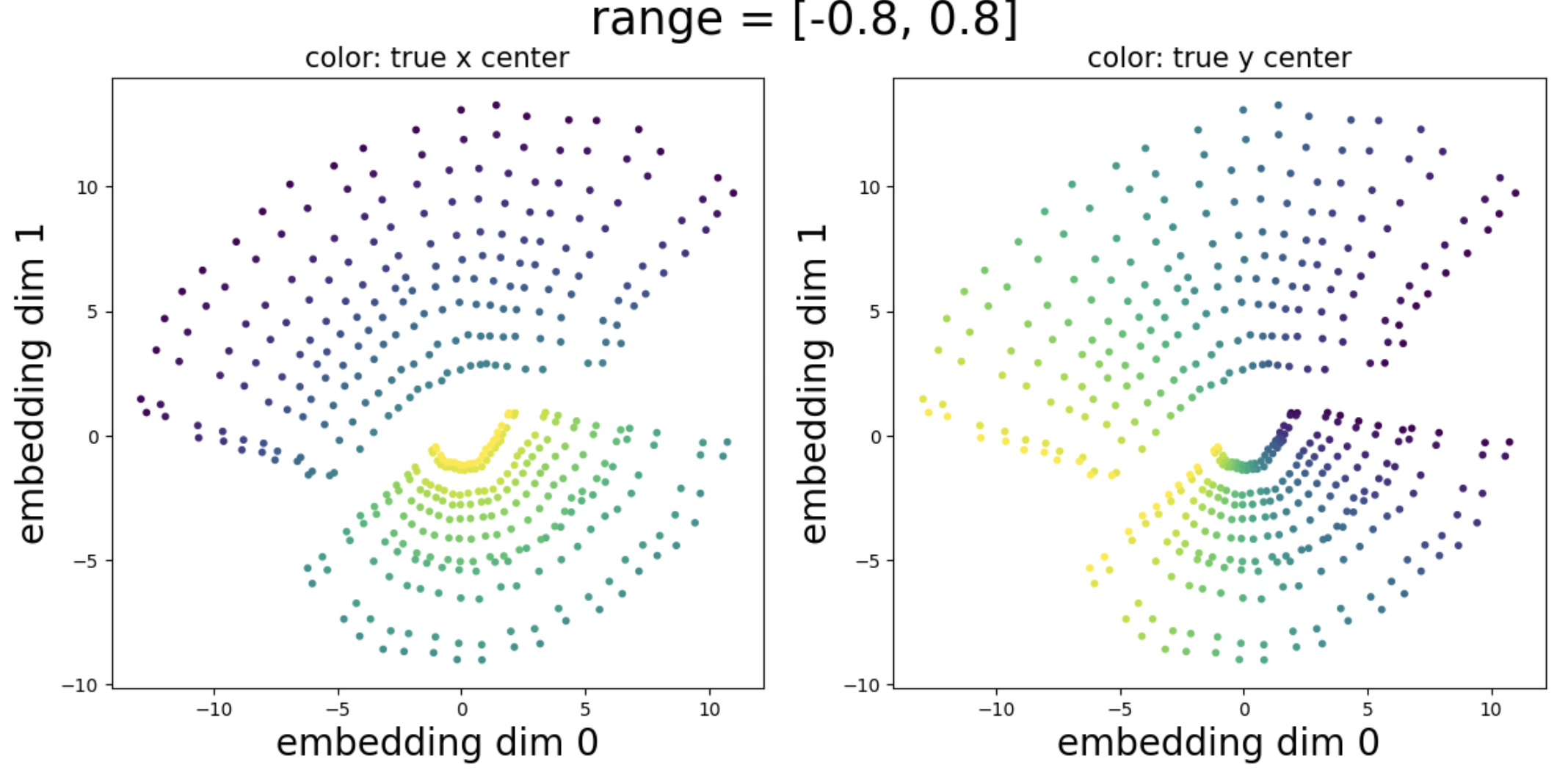

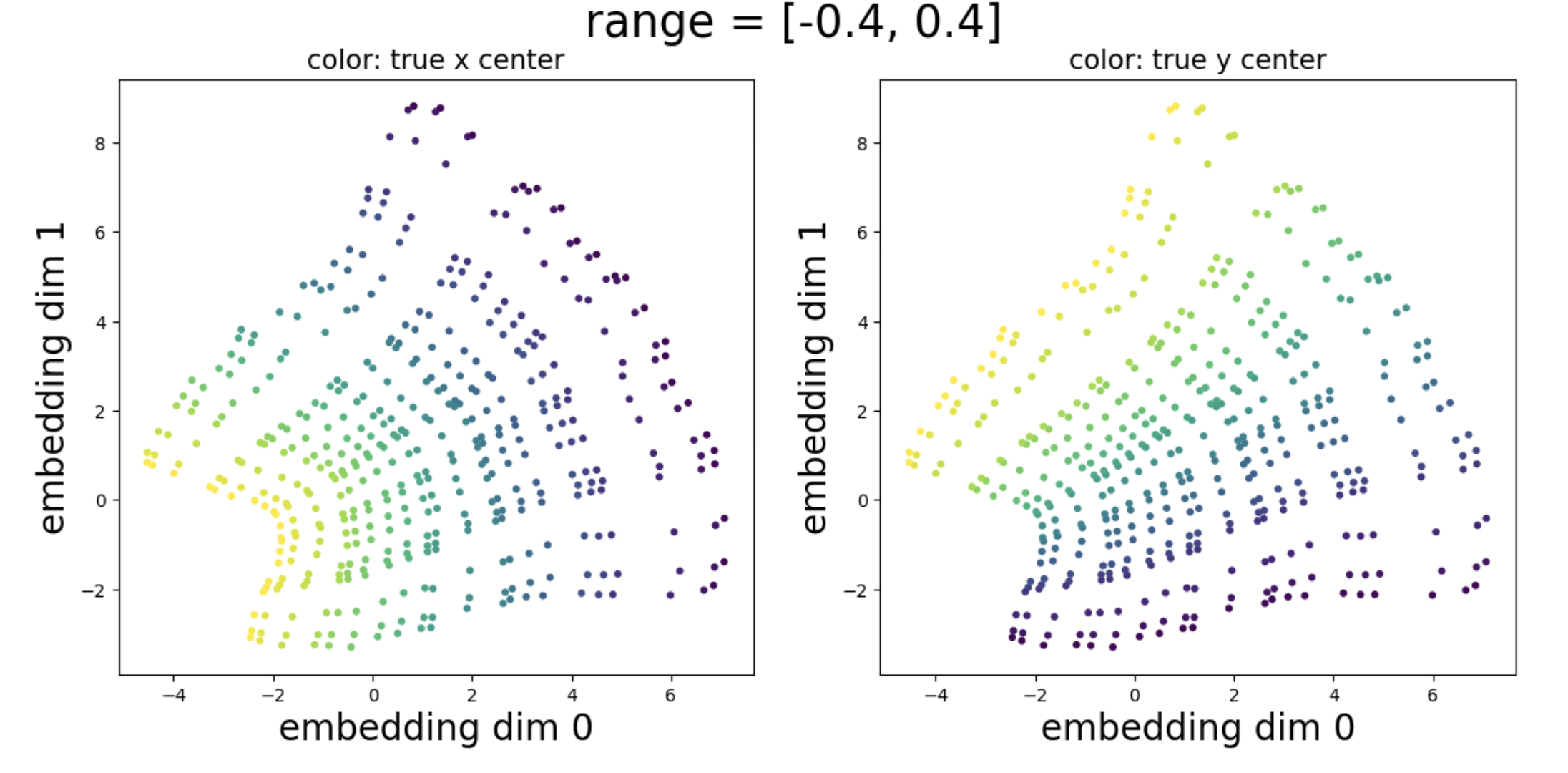

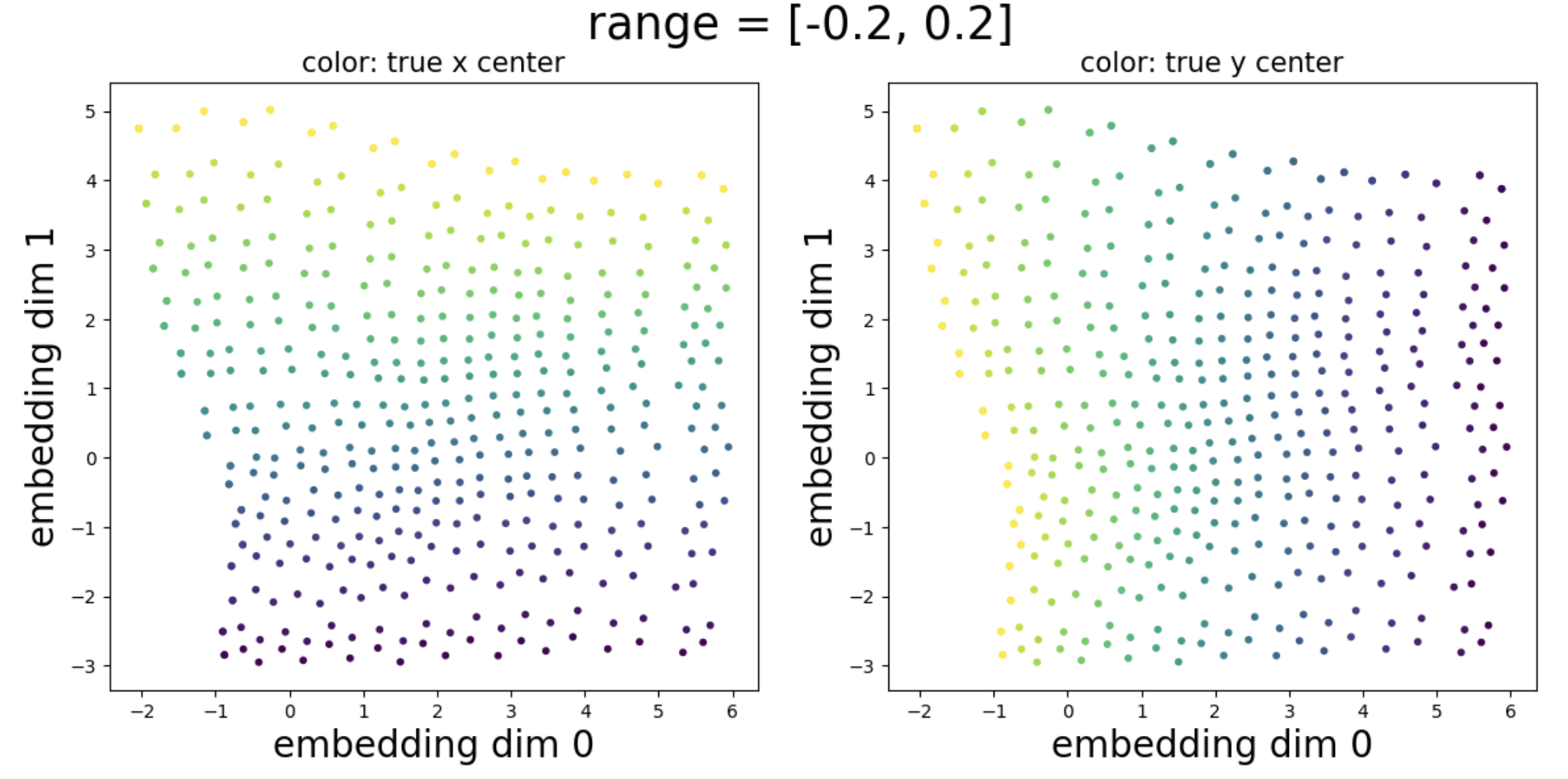

With the embedding dimension set to 2, one might expect that encoding a grid of images (each grid point corresponding to a square centered at that position) would produce a matching grid in latent space. However, this does not happen automatically.

More experiments are needed, but the latent geometry seems to depend on the overlap between nearby images. When I vary the range of square centers, different geometric structures emerge in the latent space: range = 0.2 gives a clean grid; range = 0.4 gives a warped grid; range = 0.8 gives two separate sections and appears somewhat singular.
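One way to see why overlap could matter is to compute the pixel-space distance between two square images as a function of their center offset. This is a sketch under stated assumptions: the side length (0.3), the 5 x 5 grid, and the reading of "range" as the half-width of the interval of centers are all guesses, and the helper names are hypothetical. It uses idealized continuous squares rather than rendered pixels.

```python
def square_overlap(c1, c2, side):
    """Overlap area of two axis-aligned squares with the given side length."""
    dx = abs(c1[0] - c2[0])
    dy = abs(c1[1] - c2[1])
    return max(0.0, side - dx) * max(0.0, side - dy)

def squared_pixel_distance(c1, c2, side):
    """Squared L2 distance between two binary square images (area of the symmetric difference)."""
    return 2.0 * (side * side - square_overlap(c1, c2, side))

def grid_centers(center_range, n=5):
    """n x n grid of centers spanning [-center_range, center_range] on each axis."""
    ticks = [-center_range + 2.0 * center_range * k / (n - 1) for k in range(n)]
    return [(x, y) for y in ticks for x in ticks]

def fraction_overlapping_pairs(centers, side):
    """Fraction of distinct image pairs whose squares share any area."""
    pairs = [(a, b) for i, a in enumerate(centers) for b in centers[i + 1:]]
    return sum(square_overlap(a, b, side) > 0 for a, b in pairs) / len(pairs)
```

Once two centers are farther apart than the side length, the pixel distance saturates at `2 * side**2` and no longer depends on how far apart they are. Under these assumptions, a larger range means fewer overlapping pairs, so a reconstruction loss carries less information about relative position. That is consistent with, though not proof of, the grid degrading as the range grows.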

Code

Google Colab notebook available here.

Citation

If you find this article useful, please cite it as:

BibTeX:

@article{liu2026toy-vgm,
  title={A toy model of video generative models -- bottleneck dimension controls "classical"/"quantum" strategies},
  author={Liu, Ziming},
  year={2026},
  month={March},
  url={https://KindXiaoming.github.io/blog/2026/toy-vgm/}
}
