When does RandOpt work?

Author: Ziming Liu (刘子鸣)

Motivation

A recent paper titled “Neural Thickets: Diverse Task Experts Are Dense Around Pretrained Weights” caught my attention. In this work, the authors propose a new optimization method called RandOpt, which simply perturbs pretrained models many times and ensembles the top-K solutions. Surprisingly, RandOpt can achieve better post-training performance than gradient-based methods.

The authors also provide a toy model to illustrate the idea, which I find quite elegant. However, I still want to build my own toy model for several reasons:

- “Learning by building”: constructing toy models is my way of internalizing knowledge

- I believe an even simpler setup exists, where I can control the system better and perform deeper mechanistic analysis

- In the spirit of the No-Free-Lunch theorem, I want to understand when RandOpt works (better than gradient-based methods such as Adam) and when it stops working

Problem setup

My intuition is that sequence length is an important factor. When the sequence is long, the loss landscape becomes quite rugged, which may render gradient-based methods ineffective. This intuition is similar to why RNNs are difficult to train with gradient-based optimization, especially over long sequences.

Dataset

Although the official toy model uses multiple mixed datasets (sine wave, square wave, sawtooth wave, etc.), I find this still too complicated for my purposes. Instead, I only use the sine wave dataset for pre-training and use a single trajectory as the post-training dataset.

The time interval between adjacent points is \(\Delta t = 0.05\), so a full sequence contains \(t_{\rm max}/\Delta t\) points. Pre-training sequences have \(t_{\rm max}=1\), while we fine-tune the model to perform well on test sequences with \(t_{\rm max}=1,2,5,10,20\), most of which are longer than anything seen during pre-training.
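As a concrete illustration of the sequence lengths involved, here is a minimal sketch of the sampling grid. The frequency and phase arguments (`freq`, `phase`) are hypothetical; the post does not specify how the sine waves are parameterized.

```python
import math

DT = 0.05  # time step between adjacent points, as stated above


def sine_trajectory(t_max, dt=DT, freq=1.0, phase=0.0):
    """Sample sin(2*pi*freq*t + phase) at t = 0, dt, 2*dt, ...

    Returns t_max/dt points. freq and phase are assumed parameters,
    not values taken from the original experiments.
    """
    n_points = round(t_max / dt)  # round() guards against float error in the division
    return [math.sin(2 * math.pi * freq * i * dt + phase) for i in range(n_points)]


for t_max in (1, 2, 5, 10, 20):
    print(t_max, len(sine_trajectory(t_max)))
```

With \(\Delta t = 0.05\), the longest post-training sequence (\(t_{\rm max}=20\)) is twenty times longer than a pre-training sequence.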

Model

Similar to the official toy model, I use an MLP to map the \(k\) most recent states to the next state. During “pre-training”, the loss is the one-step generation MSE. During “post-training”, the MSE loss is defined over the entire sequence generated autoregressively, except for the first \(k\) conditioning points.
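The difference between the two losses can be sketched as follows. This is not the notebook's code: the MLP is replaced by a hypothetical stand-in, a two-point linear recurrence (`make_step`) that happens to reproduce a sine exactly, and the frequency `OMEGA` is an assumption.

```python
import math


def rollout(step_fn, context, n_total):
    """Generate n_total points autoregressively from k = len(context) given points."""
    seq = list(context)
    k = len(context)
    while len(seq) < n_total:
        seq.append(step_fn(seq[-k:]))  # feed the model its own previous outputs
    return seq


def one_step_mse(step_fn, target, k):
    """Pre-training style loss: predict each point from the k TRUE points before it."""
    errs = [(step_fn(target[i - k:i]) - target[i]) ** 2 for i in range(k, len(target))]
    return sum(errs) / len(errs)


def rollout_mse(step_fn, target, k):
    """Post-training style loss: MSE over the whole rollout, skipping the k conditioning points."""
    pred = rollout(step_fn, target[:k], len(target))
    errs = [(p - y) ** 2 for p, y in zip(pred[k:], target[k:])]
    return sum(errs) / len(errs)


DT, OMEGA = 0.05, 2 * math.pi  # OMEGA is an assumed frequency


def make_step(coef):
    # Stand-in "model" with k = 2: x[n+1] = coef * x[n] - x[n-1]
    return lambda window: coef * window[-1] - window[-2]


exact_coef = 2 * math.cos(OMEGA * DT)  # this coefficient reproduces the sine exactly
target = [math.sin(OMEGA * i * DT) for i in range(100)]  # t_max = 5

for name, coef in [("exact", exact_coef), ("slightly off", exact_coef + 0.01)]:
    step = make_step(coef)
    print(name, one_step_mse(step, target, k=2), rollout_mse(step, target, k=2))
```

In this toy, a small error in the coefficient barely changes the one-step loss, but it compounds through the rollout into a much larger sequence-level loss, which is the regime the post-training objective probes.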

Optimization

We compare two optimization methods: Adam and RandOpt.
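For readers unfamiliar with RandOpt, the "perturb many times, keep the top-K, ensemble" recipe can be sketched roughly as below. This is a minimal illustration, not the paper's or the notebook's implementation: the Gaussian perturbation, the prediction-averaging rule, the default values of `n_samples`, `top_k`, and `sigma`, and the toy linear model are all assumptions.

```python
import random


def randopt(theta0, loss_fn, n_samples=256, top_k=8, sigma=0.05, seed=0):
    """Perturb theta0 n_samples times and return the top_k candidates, best first."""
    rng = random.Random(seed)
    scored = []
    for _ in range(n_samples):
        # Assumed perturbation: i.i.d. Gaussian noise with scale sigma on every weight
        theta = [w + sigma * rng.gauss(0.0, 1.0) for w in theta0]
        scored.append((loss_fn(theta), theta))
    scored.sort(key=lambda pair: pair[0])  # lowest loss first
    return [theta for _, theta in scored[:top_k]]


def ensemble_predict(thetas, predict_fn, x):
    """Average the predictions of the selected candidates (assumed ensembling rule)."""
    return sum(predict_fn(theta, x) for theta in thetas) / len(thetas)


# Toy usage: a linear model y = a*x + b whose "pretrained" weights are slightly off.
def predict(theta, x):
    return theta[0] * x + theta[1]


xs = [i / 10 for i in range(11)]
ys = [1.2 * x + 0.1 for x in xs]


def loss(theta):
    return sum((predict(theta, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


theta0 = [1.0, 0.0]  # hypothetical "pretrained" weights
top = randopt(theta0, loss)
print(loss(theta0), loss(top[0]), ensemble_predict(top, predict, 0.5))
```

No gradients are computed anywhere; the only signal is the loss of each perturbed candidate, which is why \(\sigma\) and the number of samples play the role that the learning rate and step count play for Adam.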

Results

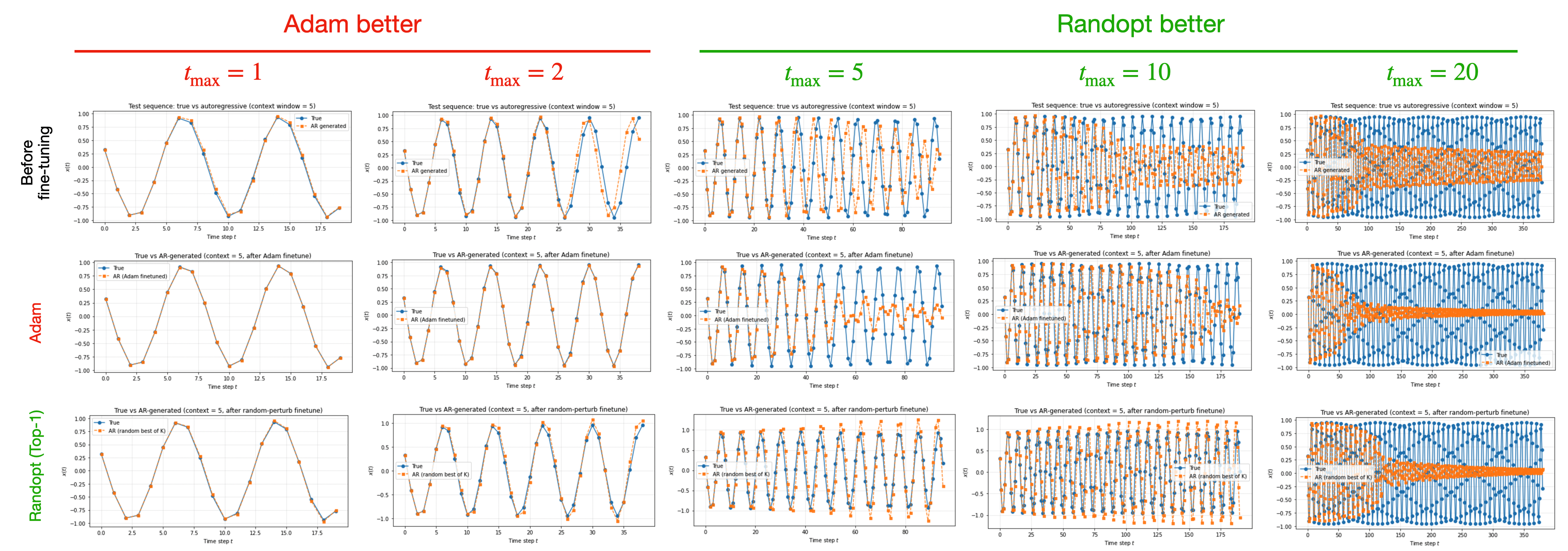

Longer sequences favor RandOpt

As we can see, Adam performs better when \(t_{\rm max}=1,2\), while RandOpt performs better when \(t_{\rm max}=5,10,20\). One possible explanation is:

- For shorter sequences, the post-training landscape is well behaved because the pretrained weights are already close to the global minimum. Near that minimum the loss may be well approximated by a quadratic function, for which gradients are very informative.

- For longer sequences, Adam appears to adopt a more conservative strategy. The generated output tends to stay close to zero because it is “safer” (producing a lower loss on average) than predicting a non-zero value. Regardless of whether this conservative strategy corresponds to a local minimum or a saddle point in the loss landscape, gradient-based methods begin to lose effectiveness.
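A back-of-the-envelope calculation makes the "safer" claim concrete. It rests on simplifying assumptions that are not part of the experiments above: the target is a unit-amplitude sine, the generated sequence is either identically zero or the same sine with a constant phase error \(\delta\), and the average \(\langle\cdot\rangle\) is taken over whole periods.

\[
\big\langle (\sin\theta - 0)^2 \big\rangle = \tfrac{1}{2},
\qquad
\big\langle \big(\sin\theta - \sin(\theta+\delta)\big)^2 \big\rangle
= \tfrac{1}{2} + \tfrac{1}{2} - 2\cdot\tfrac{1}{2}\cos\delta
= 1 - \cos\delta .
\]

Under these assumptions, outputting zero gives a lower MSE than a full-amplitude sine whenever \(\cos\delta < \tfrac{1}{2}\), that is, whenever the phase error exceeds \(\pi/3\). If phase errors accumulate along an autoregressive rollout, longer sequences would make this threshold easier to cross, which is consistent with (though it does not prove) the conservative behavior described above.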

First and last layers matter

To understand which layer’s perturbation matters the most, we perturb only one layer of the MLP at a time. Our MLP has three layers, so there are four modes: perturb all layers, only the first, only the second, or only the third. The results are shown in the table below.
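The four perturbation modes can be sketched as follows. The layer names (`layer1`, `layer2`, `layer3`), the flat-list weight layout, and the tiny weight values are hypothetical placeholders, and whether biases are perturbed together with weights is not specified in the post.

```python
import random


def perturb_layers(params, sigma, layers=None, seed=0):
    """Add Gaussian noise to the chosen layers only; layers=None perturbs every layer."""
    rng = random.Random(seed)
    chosen = set(params) if layers is None else set(layers)
    noisy = {}
    for name, weights in params.items():
        if name in chosen:
            noisy[name] = [w + sigma * rng.gauss(0.0, 1.0) for w in weights]
        else:
            noisy[name] = list(weights)  # untouched copy of the pretrained weights
    return noisy


# Hypothetical three-layer parameter set, flattened per layer
params = {"layer1": [0.1, -0.2], "layer2": [0.3, 0.0], "layer3": [0.5]}
modes = {"all": None, "first": ["layer1"], "second": ["layer2"], "third": ["layer3"]}

for mode, layers in modes.items():
    noisy = perturb_layers(params, sigma=0.05, layers=layers)
    changed = [name for name in params if noisy[name] != params[name]]
    print(mode, changed)
```

Each mode would then be plugged into the same sample-and-select loop, so that any difference in final loss can be attributed to which layer was allowed to move.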

| | $$t_{\rm max} = 1$$ | $$t_{\rm max} = 2$$ | $$t_{\rm max} = 5$$ | $$t_{\rm max} = 10$$ | $$t_{\rm max} = 20$$ |
|---|---|---|---|---|---|
| before fine-tune | $$1.6\times 10^{-3}$$ | $$0.051$$ | $$0.563$$ | $$0.550$$ | $$0.525$$ |
| Adam | $$1.1\times 10^{-5}$$ | $$2.5\times 10^{-4}$$ | $$0.234$$ | $$0.216$$ | $$0.395$$ |
| RandOpt (all) | $$3.6\times 10^{-4}$$ | $$8.3\times 10^{-3}$$ | $$0.081$$ | $$0.206$$ | $$0.364$$ |
| RandOpt (first) | $$8.9\times 10^{-4}$$ | $$5.3\times 10^{-3}$$ | $$0.178$$ | $$0.224$$ | $$0.391$$ |
| RandOpt (second) | $$9.5\times 10^{-4}$$ | $$6.4\times 10^{-3}$$ | $$0.463$$ | $$0.484$$ | $$0.484$$ |
| RandOpt (third) | $$8.8\times 10^{-4}$$ | $$4.6\times 10^{-3}$$ | $$0.124$$ | $$0.392$$ | $$0.429$$ |

It appears that the first layer and the third (last) layer have the largest effects. While more experiments are needed before drawing firm conclusions, here are some hypotheses:

- The first layer may be in the lazy learning regime, where feature learning via gradients is inefficient. RandOpt may enable more effective feature discovery through random perturbation.

- The third (last) layer may be pruned too aggressively during post-training by Adam (due to the conservative strategy), eliminating too many features. RandOpt may avoid this kind of feature collapse (this still needs to be verified).

Note that the goal of this blog post is to establish a starting point for systematic testing. Although I did not intentionally cherry-pick results, the outcomes can be quite sensitive to random seeds for both Adam and RandOpt (I realized this when I moved my code from my local machine to Google Colab and found that the results changed). In addition, the learning rate is crucial for Adam, while \(\sigma\) (the noise scale) is the key hyperparameter for RandOpt. I did not systematically tune either hyperparameter in this post.

Code

Google Colab notebook available here.

Citation

If you find this article useful, please cite it as:

BibTeX:

@article{liu2026RandOpt,
  title={When does RandOpt work?},
  author={Liu, Ziming},
  year={2026},
  month={March},
  url={https://KindXiaoming.github.io/blog/2026/randopt/}
}
