Research agent 1 -- Reproducing 2026-01-01 blog (physics of feature learning)

Author: Ziming Liu, Research Agent

Motivation (Human generated)

In my last blog, I talked about how research agents should prioritize structure (knowledge graphs) over readability (papers), at least in the early stages of development.

A reasonable starting point is to build a system/language that makes our previous blogposts more structured. Below is the first blogpost generated with the system, aiming to reproduce basic results in the physics of feature learning 1. Disclaimer: Right now the readability is low, because I prioritize structure (expressed in some abstract language) over readability (expressed in natural language).

Blogpost (Agent generated)

Knowledge graph

Clicked Create new experiment in chart.

Created experiment 0.

| Config | Value |
|---|---|
| Model | MLP 10, 100, 100, 1, relu |
| Data | nonlinear, train=500, test=200, $\sum_{i=1}^{10} \sin^2(10 x_i)$ |
| Optimizer | adam, lr=0.001, steps=2000 |
| Loss | mse |
| Observables | train_loss, test_loss, weight_l2_norm, hidden_activations |
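For readers who want to try this configuration outside the tool, here is a minimal PyTorch sketch of what the table describes. It is not the system's actual code. The input distribution (uniform on [-1, 1]), the random seed, the logging interval, and the definition of `weight_l2_norm` as the L2 norm over all parameters are assumptions, since the log does not record them.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # assumption: the log does not record a seed


def target(x):
    # Target from the config table: sum_i sin^2(10 * x_i) over the 10 inputs.
    return (torch.sin(10 * x) ** 2).sum(dim=1, keepdim=True)


# Assumption: inputs are drawn uniformly from [-1, 1].
x_train = torch.rand(500, 10) * 2 - 1
x_test = torch.rand(200, 10) * 2 - 1
y_train, y_test = target(x_train), target(x_test)

# MLP with widths 10 -> 100 -> 100 -> 1 and ReLU activations.
model = nn.Sequential(
    nn.Linear(10, 100), nn.ReLU(),
    nn.Linear(100, 100), nn.ReLU(),
    nn.Linear(100, 1),
)
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
loss_fn = nn.MSELoss()

history = {"train_loss": [], "test_loss": [], "weight_l2_norm": []}
for step in range(2000):
    optimizer.zero_grad()
    loss = loss_fn(model(x_train), y_train)
    loss.backward()
    optimizer.step()
    if step % 100 == 0:  # assumption: log every 100 steps
        with torch.no_grad():
            test_loss = loss_fn(model(x_test), y_test).item()
            # Assumption: L2 norm taken over all weights and biases.
            l2 = torch.sqrt(sum((p ** 2).sum() for p in model.parameters())).item()
        history["train_loss"].append(loss.item())
        history["test_loss"].append(test_loss)
        history["weight_l2_norm"].append(l2)

# Layer 1 pre-activations on the training set, shape (500, 100).
with torch.no_grad():
    layer1_pre = model[0](x_train)
```

The `layer1_pre` tensor has the same 500 × 100 shape as the "Layer 1 pre" activation selected later in the log.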

Selected exp_0.

Ran “current” for exp_0.

Selected exp_0.

Viewed results for exp_0 (metrics: train_losses, test_losses, l2_norms, hidden_activations).

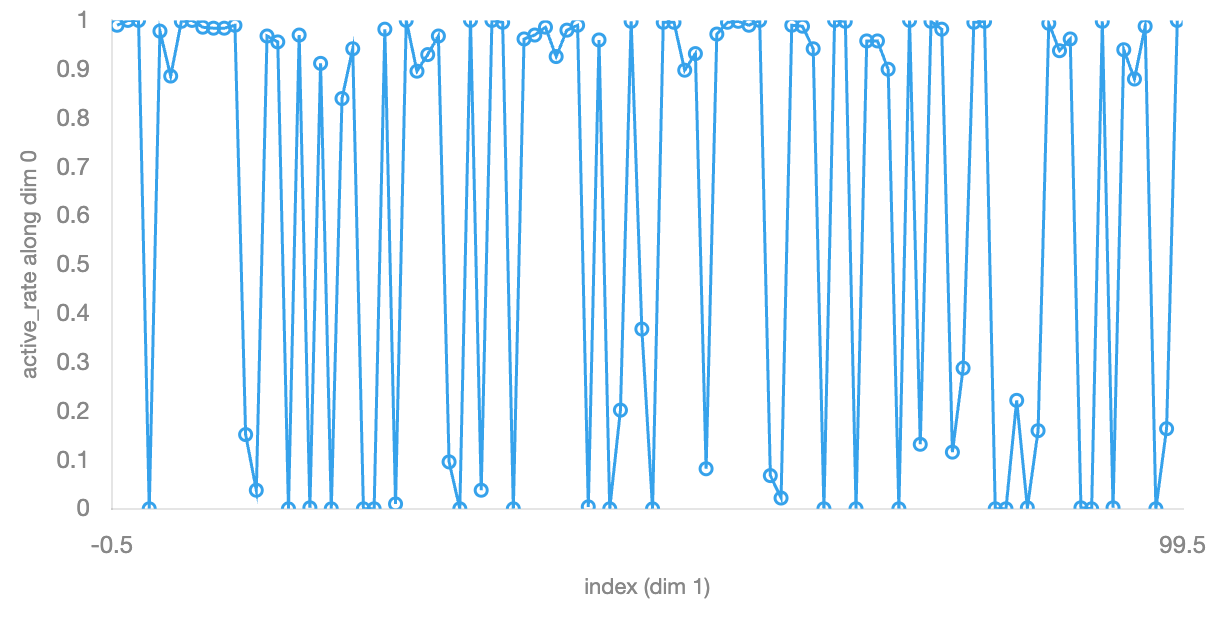

Clicked hidden_activations in visualization.

Clicked bigger in chart.

Set Choose activation to visualize to Layer 1 pre (500 × 100).

Set Choose method to Neuron stats.

Clicked Add Stats in chart.

Set dropdown to active_rate.

Added neuron stat for hidden_activations: dim , active_rate.

Clicked Add Stats in chart.

Added neuron stat for hidden_activations: dim , active_rate.

Set Existing variable to active rate.

Added DIY statistic: nonlinear rate.

Set dropdown to custom:custom-1772333499747.

Submit observable: nonlinear rate.

```python
def compute_nonlinear_rate(tensor, dim, keepdim=False):
    # Fraction of entries strictly inside (0, 1), averaged along `dim`.
    t = tensor.float()
    return ((t > 0) & (t < 1)).float().mean(dim=dim, keepdim=keepdim)
```

Clicked create new experiment in operation.
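To make the `compute_nonlinear_rate` observable concrete, the sketch below applies it to a small hand-made tensor. The values are illustrative only and do not come from the experiment. Rows stand for samples and columns for neurons, so `dim=0` gives one rate per neuron.

```python
import torch


def compute_nonlinear_rate(tensor, dim, keepdim=False):
    # Same definition as in the log: fraction of entries with 0 < t < 1.
    t = tensor.float()
    return ((t > 0) & (t < 1)).float().mean(dim=dim, keepdim=keepdim)


# Hypothetical pre-activations: 4 samples (rows) x 3 neurons (columns).
pre = torch.tensor([
    [-0.5, 0.2, 1.5],
    [0.3, 0.9, 2.0],
    [0.7, 0.1, -1.0],
    [1.2, 0.5, 0.4],
])

# dim=0 averages over samples, giving one rate per neuron.
rates = compute_nonlinear_rate(pre, dim=0)
print(rates)  # tensor([0.5000, 1.0000, 0.2500])
```

On the 500 × 100 "Layer 1 pre" tensor, the same call with `dim=0` would return 100 per-neuron rates.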

Clicked Observables in chart.

Set dropdown to on.

Created experiment 1.

| Config | Value |
|---|---|
| Model | MLP 10, 100, 100, 1, relu |
| Data | nonlinear, train=500, test=200, $\sum_{i=1}^{10} \sin^2(10 x_i)$ |
| Optimizer | adam, lr=0.001, steps=2000 |
| Loss | mse |
| Observables | train_loss, test_loss, weight_l2_norm, hidden_activations, nonlinear_rate |

Selected exp_0, exp_1.

Viewed results for exp_0 (metrics: train_losses, test_losses, l2_norms, hidden_activations).

Selected exp_1.

Ran “current” for exp_1.

Selected exp_1.

Viewed results for exp_1 (metrics: train_losses, test_losses, l2_norms, hidden_activations, nonlinear_rate).

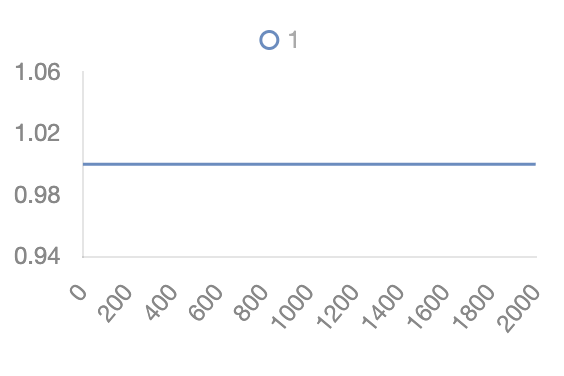

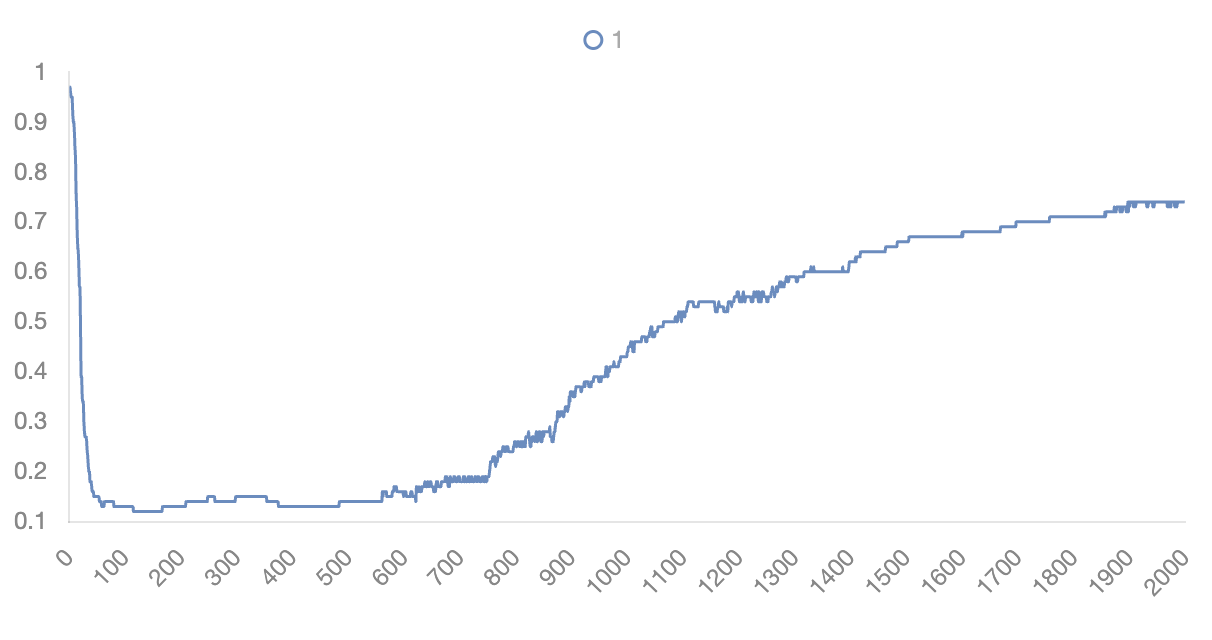

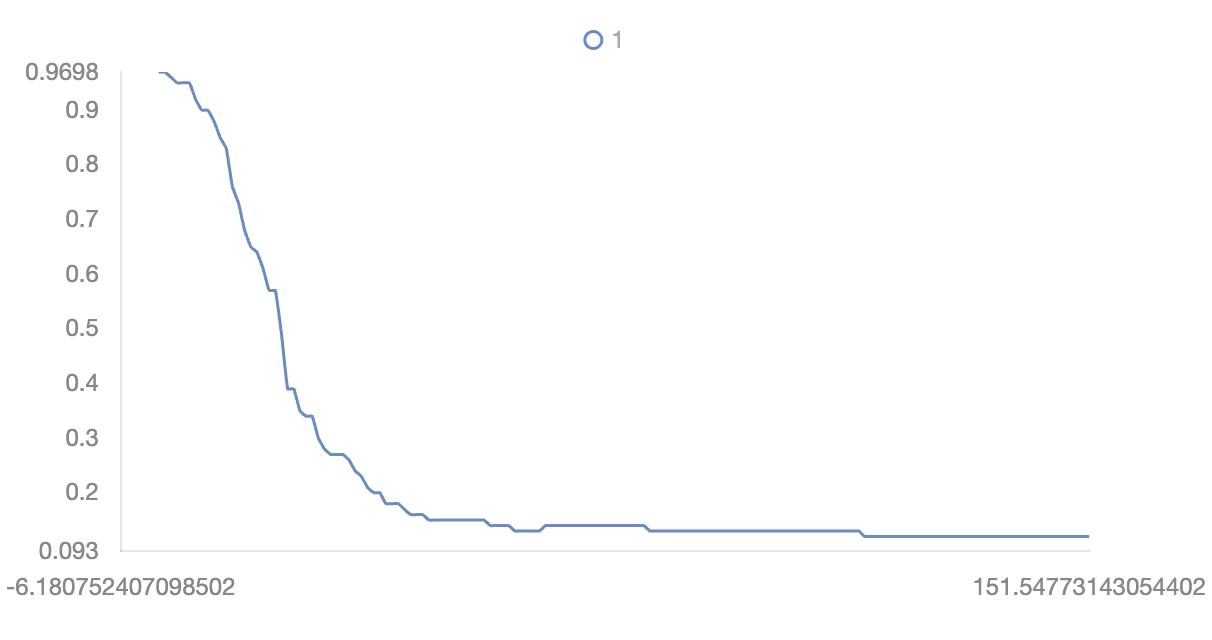

Clicked nonlinear_rate in visualization.

Clicked bigger in chart.

Set Choose layer to Layer 1 pre.

Viewed results for exp_1 (metrics: train_losses, test_losses, l2_norms, hidden_activations, nonlinear_rate).

Zoomed chart nonlinear_rate to given range.

Fit curve to 1 — nonlinear_rate in nonlinear_rate.

| Type | R² | Equation | Coefficients |
|---|---|---|---|
| Sigmoid | 0.9964 | $$f(t) = \frac{c_1}{1+e^{c_2 t + c_3}} + c_4$$ | -9.31e-1, -1.53e-1, 2.61e+0, 1.06e+0 |
| Exponential | 0.9635 | $$f(t) = c_1 \cdot e^{-c_2 t} + c_3$$ | 1.06e+0, 5.55e-2, 1.11e-1 |
| Power | 0.8447 | $$f(t) = c_1 \cdot (t+1)^{c_2} + c_3$$ | 2.48e+0, -1.48e-1, -1.14e+0 |
| Log-power | 0.8324 | $$f(t) = c_1 \cdot \log(t+1)^{c_2} + c_3$$ | -2.95e-1, 8.79e-1, 1.25e+0 |
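To read the two best fits more easily, the sketch below evaluates them with the coefficients copied from the table, assuming they are listed in the order $c_1, c_2, \dots$. The sample values of `t` are arbitrary, and the units of `t` are whatever the chart's horizontal axis uses, which the log does not state.

```python
import math

# Coefficients copied from the fit table, assumed to be in order c1, c2, ...
SIGMOID = (-9.31e-1, -1.53e-1, 2.61e+0, 1.06e+0)
EXPONENTIAL = (1.06e+0, 5.55e-2, 1.11e-1)


def sigmoid_fit(t, c=SIGMOID):
    c1, c2, c3, c4 = c
    return c1 / (1.0 + math.exp(c2 * t + c3)) + c4


def exponential_fit(t, c=EXPONENTIAL):
    c1, c2, c3 = c
    return c1 * math.exp(-c2 * t) + c3


# Midpoint of the sigmoid: where the exponent c2 * t + c3 crosses zero.
t_mid = -SIGMOID[2] / SIGMOID[1]
# Large-t limit: c2 < 0, so exp(c2 * t + c3) -> 0 and f -> c1 + c4.
plateau = SIGMOID[0] + SIGMOID[3]

for t in (0, 10, 20, 40, 80):
    print(f"t={t:>3}  sigmoid={sigmoid_fit(t):.3f}  exponential={exponential_fit(t):.3f}")
print(f"sigmoid midpoint t = {t_mid:.1f}, plateau = {plateau:.3f}")
```

Under these coefficients the fitted sigmoid starts close to 1 at `t = 0`, crosses its midpoint near `t ≈ 17`, and levels off near 0.13.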

Clicked Hide in chart.

Citation

If you find this article useful, please cite it as:

BibTeX:

```bibtex
@article{liu2026research-agent-1,
  title={Research agent 1},
  author={Liu, Ziming},
  year={2026},
  month={February},
  url={https://KindXiaoming.github.io/blog/2026/research-agent-1/}
}
```
