When does Muon work? Model depth is a key factor

Author: Ziming Liu (刘子鸣)

Motivation

The Muon optimizer has recently shown great promise in LLM training. However, the no-free-lunch theorem suggests that there must be conditions under which Muon works well and conditions under which it fails (relative to Adam). The goal of this blog post is to make a first attempt at understanding when Muon works.

Problem setup

Model

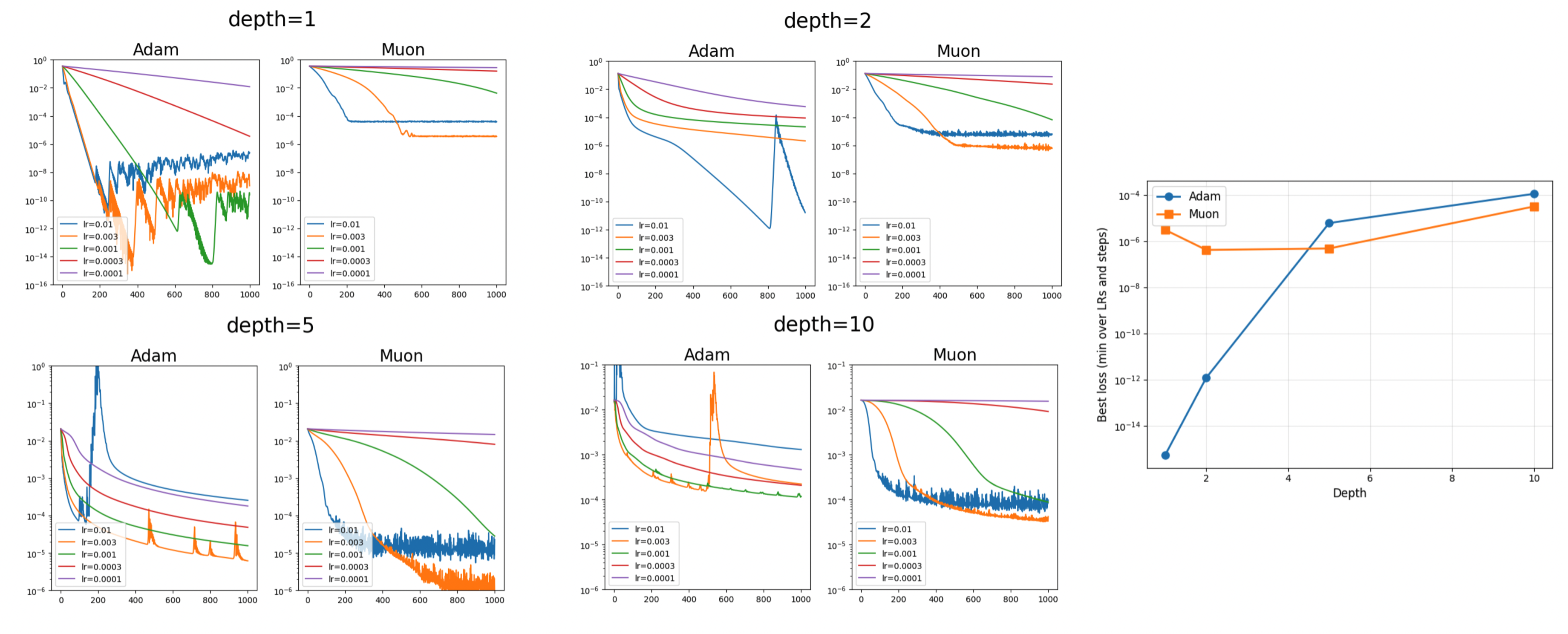

I use an MLP with either linear or SiLU activations, and sweep the network depth over 1, 2, 5, and 10.
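A minimal NumPy sketch of such a model is below. It is an illustration, not the notebook's code, and it rests on assumptions the post does not state: "depth" counts the number of 100×100 weight matrices, there are no biases, weights are initialized from N(0, 1/width), and the activation is applied between layers but not after the output. The names `init_mlp`, `mlp_forward`, and `silu` are hypothetical.

```python
import numpy as np


def silu(z):
    # SiLU(z) = z * sigmoid(z) = z / (1 + exp(-z))
    return z / (1.0 + np.exp(-z))


ACTIVATIONS = {"linear": lambda z: z, "silu": silu}


def init_mlp(depth, width=100, seed=0):
    # Hypothetical init: `depth` square weight matrices, no biases,
    # entries drawn from N(0, 1/width) so activations keep a stable scale.
    rng = np.random.default_rng(seed)
    return [rng.normal(0.0, 1.0 / np.sqrt(width), size=(width, width))
            for _ in range(depth)]


def mlp_forward(weights, x, activation="linear"):
    # x has shape (batch, width); each row is one sample.
    act = ACTIVATIONS[activation]
    h = x
    for i, w in enumerate(weights):
        h = h @ w.T
        if i < len(weights) - 1:  # no activation after the output layer
            h = act(h)
    return h


# The depth sweep used in the post: 1, 2, 5, and 10 weight matrices.
for depth in (1, 2, 5, 10):
    weights = init_mlp(depth)
    out = mlp_forward(weights, np.ones((4, 100)), activation="silu")
    print(depth, out.shape)
```

With linear activations the network computes a product of matrices \(W_L\cdots W_1 x\), so every depth can represent any 100×100 linear map; in that case depth changes the optimization problem rather than expressivity.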

Dataset

The dataset is a linear regression task. Given a randomly sampled input \(x\in\mathbb{R}^{100}\), its label \(y\in\mathbb{R}^{100}\) is generated by a ground-truth matrix \(W_{\rm gt}\in\mathbb{R}^{100\times 100}\) such that

\[
y = W_{\rm gt}\,x.
\]

The loss is the mean squared error (MSE) between the prediction and the label.
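The data pipeline and loss can be sketched as follows, assuming noiseless labels \(y = W_{\rm gt}x\). The sampling details are assumptions: the post does not say how \(x\) or \(W_{\rm gt}\) are drawn, so Gaussian choices are used here, and the MSE is averaged over both samples and output coordinates. The helper names are hypothetical.

```python
import numpy as np


def make_task(dim=100, seed=0):
    # Assumed: W_gt has i.i.d. N(0, 1/dim) entries (not specified in the post).
    rng = np.random.default_rng(seed)
    return rng.normal(0.0, 1.0 / np.sqrt(dim), size=(dim, dim))


def sample_batch(w_gt, batch_size, rng):
    # Assumed: inputs x ~ N(0, I); labels are the noiseless y = W_gt x.
    x = rng.normal(size=(batch_size, w_gt.shape[1]))
    y = x @ w_gt.T  # row i equals W_gt @ x[i]
    return x, y


def mse_loss(pred, target):
    # Mean squared error averaged over all samples and output coordinates.
    return float(np.mean((pred - target) ** 2))


rng = np.random.default_rng(1)
w_gt = make_task()
x, y = sample_batch(w_gt, batch_size=128, rng=rng)
print(mse_loss(np.zeros_like(y), y))  # loss of the all-zero predictor
```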

Optimizers

We test both Adam and Muon, sweeping the learning rate over 0.0001, 0.0003, 0.001, 0.003, and 0.01.
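For reference, the two update rules can be sketched in NumPy. Adam is the standard bias-corrected version. The Muon sketch follows the widely circulated reference design: Nesterov-style momentum, then a quintic Newton-Schulz iteration (coefficients 3.4445, -4.7750, 2.0315) that approximately replaces the singular values of the update with values near 1. Momentum 0.95, five iterations, and applying Muon to every weight matrix are assumed defaults, not settings reported in the post.

```python
import numpy as np

LEARNING_RATES = [1e-4, 3e-4, 1e-3, 3e-3, 1e-2]


def adam_step(w, g, state, lr, beta1=0.9, beta2=0.999, eps=1e-8):
    # Standard Adam with bias correction; `state` holds m, v and step count t.
    state["t"] = state.get("t", 0) + 1
    state["m"] = beta1 * state.get("m", np.zeros_like(w)) + (1 - beta1) * g
    state["v"] = beta2 * state.get("v", np.zeros_like(w)) + (1 - beta2) * g**2
    m_hat = state["m"] / (1 - beta1 ** state["t"])
    v_hat = state["v"] / (1 - beta2 ** state["t"])
    return w - lr * m_hat / (np.sqrt(v_hat) + eps)


def newton_schulz(g, steps=5, eps=1e-7):
    # Quintic Newton-Schulz iteration: maps G = U S V^T to roughly U V^T,
    # with singular values pushed into about [0.7, 1.2] rather than exactly 1.
    a, b, c = 3.4445, -4.7750, 2.0315
    x = g / (np.linalg.norm(g) + eps)  # Frobenius norm => spectral norm <= 1
    transposed = x.shape[0] > x.shape[1]
    if transposed:  # iterate on the wide orientation so X X^T is smaller
        x = x.T
    for _ in range(steps):
        A = x @ x.T
        B = b * A + c * (A @ A)
        x = a * x + B @ x
    return x.T if transposed else x


def muon_step(w, g, state, lr, momentum=0.95):
    # Muon for a 2D weight: Nesterov-style momentum, then orthogonalize.
    buf = momentum * state.get("buf", np.zeros_like(w)) + g
    state["buf"] = buf
    update = g + momentum * buf
    return w - lr * newton_schulz(update)
```

The key contrast: Adam rescales each entry of the gradient independently, while Muon rescales the update as a whole matrix, equalizing its singular values.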

Results

I started with depth = 1. Despite extensive attempts (including tuning \(W_{\rm gt}\)), I could not find a setting where Muon outperforms Adam. This led me to hypothesize that Muon may work better in harder optimization settings, for example when the network is deeper. Interestingly, the experiments indeed support this hypothesis.
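One way to make "Muon outperforms Adam" precise is to compare each optimizer at its own best learning rate from the sweep. The sketch below assumes exactly that criterion (the post does not spell it out) and takes a hypothetical `results` dictionary mapping `(optimizer, depth, lr)` to a final training loss, which would be filled by one training run per configuration.

```python
DEPTHS = [1, 2, 5, 10]
LEARNING_RATES = [1e-4, 3e-4, 1e-3, 3e-3, 1e-2]


def best_over_lr(results, optimizer, depth):
    # results maps (optimizer, depth, lr) -> final loss; pick the best lr.
    losses = {lr: results[(optimizer, depth, lr)] for lr in LEARNING_RATES}
    best_lr = min(losses, key=losses.get)
    return best_lr, losses[best_lr]


def compare(results):
    # For each depth, report which optimizer reaches the lower tuned loss.
    summary = {}
    for depth in DEPTHS:
        _, adam_loss = best_over_lr(results, "adam", depth)
        _, muon_loss = best_over_lr(results, "muon", depth)
        summary[depth] = "muon" if muon_loss < adam_loss else "adam"
    return summary
```

Tuning each optimizer separately avoids penalizing one of them for a learning-rate grid that happens to suit the other.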

Linear activation function

SiLU activation function

Why does depth matter?

One possible hypothesis is that deeper networks tend to produce loss landscapes that resemble river valleys, while Muon may be particularly effective at navigating such river-valley-like landscapes.

Code

A Google Colab notebook is available here.

Citation

If you find this article useful, please cite it as:

BibTeX:

@article{liu2026optimization-5,
  title={When does Muon work? Model depth is a key factor},
  author={Liu, Ziming},
  year={2026},
  month={March},
  url={https://KindXiaoming.github.io/blog/2026/optimization-5/}
}
