MOE 1 -- Expressive power of MOEs through the lens of spectral bias and memorization capacity

Author: Ziming Liu (刘子鸣)

Motivation

Nowadays, MoE (Mixture-of-Experts) architectures are widely adopted in large-scale models. Although, in my understanding, the main motivation for using MoEs is computational efficiency, I am curious whether they also provide advantages in expressive power.

The goal of this blog is to demonstrate potential expressivity advantages using toy synthetic datasets.

Problem setup

Model

Suppose there are \(K\) experts, and each expert is a 2-layer MLP with \(N\) hidden neurons. The output of the MoE is \(\sum_i g_i {\rm MLP}_i(x)\), where the gating weights are given by \(g = {\rm softmax}(W_g x)\). I do not use top-k selection, since the goal of this blog is to understand expressivity rather than efficiency. For a fair comparison, I keep \(N\cdot K\) fixed while varying \(K\).
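A minimal NumPy sketch of the forward pass described above. The tanh activation, the bias terms, the scalar output, and the initialization scales are assumptions made for illustration; the text only specifies 2-layer MLP experts and a softmax gate without top-k.

```python
import numpy as np

def softmax(z, axis=-1):
    # Subtract the max for numerical stability; the result is unchanged.
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def init_moe(K, N, d_in=2, seed=0):
    # K experts, each a 2-layer MLP with N hidden neurons and a scalar output.
    # Biases and init scales are assumptions, not taken from the post.
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.normal(size=(K, d_in, N)) / np.sqrt(d_in),
        "b1": np.zeros((K, N)),
        "W2": rng.normal(size=(K, N)) / np.sqrt(N),
        "b2": np.zeros(K),
        "Wg": rng.normal(size=(K, d_in)),  # gate: g = softmax(W_g x)
    }

def moe_forward(params, x):
    # x has shape (batch, d_in).
    h = np.tanh(np.einsum("bd,kdn->bkn", x, params["W1"]) + params["b1"])
    expert_out = np.einsum("bkn,kn->bk", h, params["W2"]) + params["b2"]
    g = softmax(x @ params["Wg"].T)  # (batch, K), dense gating, no top-k
    # Output is the gate-weighted sum of expert outputs.
    return (g * expert_out).sum(axis=1), g
```

With `K=1` the gate is identically 1, so the model reduces to a single MLP with all `N` hidden neurons, which gives a natural baseline for the comparison.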

Dataset 1: Regression

We regress a 2D oscillatory function: \(f(x,y) = {\rm sin}(\omega x) + {\rm sin}(\omega y)\), with frequency \(\omega=7\) and inputs \(x, y \sim U[-1,1]\).
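The regression data can be generated in a few lines. This is a sketch: the sample count and the seed are placeholders, since the text does not state them.

```python
import numpy as np

def make_regression_data(n_samples=1000, freq=7.0, seed=0):
    # Inputs are drawn uniformly from the square [-1, 1]^2.
    rng = np.random.default_rng(seed)
    xy = rng.uniform(-1.0, 1.0, size=(n_samples, 2))
    # Target: sum of two sines with the same frequency along each axis.
    target = np.sin(freq * xy[:, 0]) + np.sin(freq * xy[:, 1])
    return xy, target
```

With a frequency of 7 on \([-1,1]\), each sine covers \(14/(2\pi)\approx 2.2\) periods per axis, so the target oscillates a few times across the domain.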

Dataset 2: Memorization

The memorization dataset is the same as in the previous blog memory-2.

Results 1: Regression

For each row in the figure, we plot three quantities:

- Training loss curves

- First-layer weights (normalized to lie on a circle). Each point corresponds to the two weights connected to a hidden neuron.

- Voronoi diagram: within each region, one expert is dominant. On the decision boundaries, two (or more) experts have equal gating weights.
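The dominant-expert regions can be computed directly from the gate. Since softmax is monotone, the expert with the largest gating weight is the one with the largest logit. The sketch below assumes the gate has no bias term, as the formula \(g = {\rm softmax}(W_g x)\) suggests; the grid size and limits are illustrative choices.

```python
import numpy as np

def dominant_expert_map(Wg, grid_size=200, lim=1.0):
    # Wg has shape (K, 2): one gating row per expert (no bias assumed).
    xs = np.linspace(-lim, lim, grid_size)
    X, Y = np.meshgrid(xs, xs)
    pts = np.stack([X.ravel(), Y.ravel()], axis=1)  # (grid_size**2, 2)
    logits = pts @ Wg.T                             # (grid_size**2, K)
    # argmax of the logits equals argmax of the softmax gating weights.
    return logits.argmax(axis=1).reshape(grid_size, grid_size)
```

Under the no-bias assumption, the boundary between experts \(i\) and \(j\) satisfies \((w_i - w_j)\cdot x = 0\), a line through the origin, so every region is a wedge with its apex at the origin. If the actual gate includes a bias, the boundaries shift away from the origin.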

I set \(N\cdot K\) to be 60 and find that \(K=10\) achieves the best performance.
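To make the fixed budget concrete, the sketch below enumerates every \((K, N)\) pair with \(N\cdot K = 60\) and counts parameters. The particular values of \(K\) swept in the experiments are not stated in the text, and the count assumes biases in both expert layers, a scalar output, and a bias-free gate.

```python
def moe_param_count(K, N, d_in=2, d_out=1):
    # Per expert: first-layer weights and biases, second-layer weights and bias.
    expert = d_in * N + N + N * d_out + d_out
    # Gate: W_g with K rows and d_in columns (no bias assumed).
    gate = K * d_in
    return K * expert + gate

budget = 60  # N * K, as in the text
configs = [(K, budget // K) for K in range(1, budget + 1) if budget % K == 0]
for K, N in configs:
    print(f"K={K:2d}  N={N:2d}  params={moe_param_count(K, N)}")
```

Under these assumptions the total is \(240 + 3K\): 243 parameters for \(K=1\) and 270 for \(K=10\). Fixing \(N\cdot K\) therefore fixes the number of hidden neurons, while the parameter count grows mildly with \(K\).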

The weight visualization is less informative than I initially expected, but the case \(K=2\) appears to exhibit a condensation phenomenon.
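One way to quantify this kind of picture is to normalize each neuron's two input weights onto the unit circle and count how many distinct directions appear. The helper names and the angular tolerance below are hypothetical, and "condensation" is taken here to mean that many neurons share nearly the same input-weight direction.

```python
import numpy as np

def weight_angles(W1):
    # W1 has shape (n_neurons, 2): the two input weights of each hidden neuron.
    norms = np.linalg.norm(W1, axis=1, keepdims=True)
    unit = W1 / np.maximum(norms, 1e-12)  # project onto the unit circle
    return unit, np.arctan2(unit[:, 1], unit[:, 0])

def count_direction_clusters(angles, tol=0.1):
    # Sort angles on the circle and count gaps larger than tol (in radians),
    # including the wrap-around gap; each large gap separates two clusters.
    a = np.sort(np.mod(angles, 2 * np.pi))
    gaps = np.diff(np.concatenate([a, [a[0] + 2 * np.pi]]))
    return max(1, int((gaps > tol).sum()))
```

A small cluster count relative to the number of neurons would be consistent with condensation; a count close to the number of neurons would suggest the directions are spread out.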

Results 2: Memorization

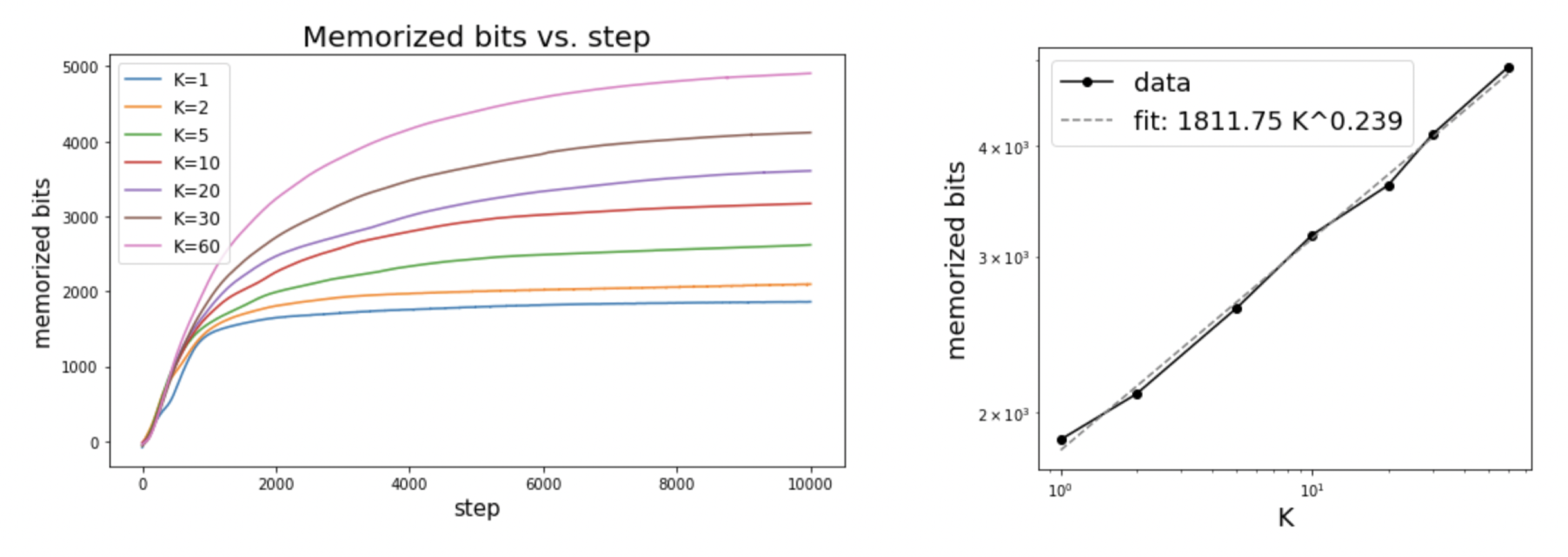

We fix \(N\cdot K = 60\).

For the memorization task, we plot the number of memorized bits as a function of training steps (left plot) for each value of \(K\). Larger \(K\) appears to memorize more bits consistently. Moreover, the results seem to follow a scaling law (right plot): \(C \sim K^{0.24}\), where \(C\) presumably denotes the number of memorized bits (the capacity).
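An exponent of this kind is typically estimated with a linear fit in log-log space. The sketch below shows the procedure on synthetic data generated from a known power law; the values are not the measurements from the figure, and the prefactor of 100 is arbitrary.

```python
import numpy as np

def fit_power_law(K_values, C_values):
    # C = a * K**alpha  <=>  log C = alpha * log K + log a
    slope, intercept = np.polyfit(np.log(K_values), np.log(C_values), deg=1)
    return slope, np.exp(intercept)

# Synthetic sanity check only: data generated from an exact power law.
K_values = np.array([1, 2, 3, 4, 5, 6, 10, 12, 15, 20, 30, 60], dtype=float)
C_synthetic = 100.0 * K_values ** 0.24
alpha, prefactor = fit_power_law(K_values, C_synthetic)
```

On real measurements the fitted exponent depends on which values of \(K\) are included, so it is worth checking how much it changes when the smallest or largest \(K\) is dropped.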

In a sense, the memorization task amounts to learning an arbitrarily high-frequency function, which might be why larger \(K\) is consistently better here. When the target function has a bounded frequency (as in the regression case), the best \(K\) lies at an intermediate value.

Code

Google Colab notebook available here.

Citation

If you find this article useful, please cite it as:

BibTeX:

@article{liu2026moe-1,
  title={MOE 1 -- Expressive power of MOEs through the lens of spectral bias and memorization capacity},
  author={Liu, Ziming},
  year={2026},
  month={March},
  url={https://KindXiaoming.github.io/blog/2026/moe-1/}
}
