Memory 2 -- How many bits does each parameter store? An analysis of MLP

Author: Ziming Liu (刘子鸣)

Motivation

Various works have shown that memorization capacity (measured in bits) scales proportionally with the number of parameters when evaluated on randomly generated datasets. For example, Jack Morris et al. report 3.6 bits per parameter for transformers. Since previous studies show that MLPs are most responsible for memorization, we are motivated to study the memorization capacity of MLPs themselves.

Problem setup

The setup is the same as in my previous blog post, memory-1, except that we now replace the linear layer with an MLP.

- Dataset: the input is randomly generated from a Gaussian distribution (input dimension \(D\)), and the output is a random label drawn from a vocabulary of size \(V\). There are \(N\) data points in total.

- Model: to imitate language models, we use a two-layer MLP with shape \((D, W, D)\) (\(D\) input dimension, \(W\) hidden neurons, \(D\) output neurons), followed by an lm head which is a linear layer of shape \(D\times V\). All biases are disabled (I do not expect this to matter much). We also experiment with 3-layer MLPs with shape \((D, W, W, D)\), also followed by an lm head.
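A minimal sketch of the setup described above, using only the Python standard library. The function names, the unit-variance Gaussian, the uniform label distribution, and the choice to include the lm head in the parameter count are assumptions made for illustration; the actual notebook may differ.

```python
import random

def make_random_dataset(N, D, V, seed=0):
    """Return N Gaussian inputs of dimension D and N random labels in [0, V)."""
    rng = random.Random(seed)
    # Assumption: standard normal inputs and uniformly drawn labels.
    inputs = [[rng.gauss(0.0, 1.0) for _ in range(D)] for _ in range(N)]
    labels = [rng.randrange(V) for _ in range(N)]
    return inputs, labels

def count_params(D, W, V, n_hidden=1):
    """Bias-free parameter count of an MLP with n_hidden hidden layers of width W,
    followed by a D x V lm head (counting the head is an assumption)."""
    mlp = D * W + (n_hidden - 1) * W * W + W * D
    lm_head = D * V
    return mlp + lm_head

inputs, labels = make_random_dataset(N=1000, D=10, V=10)
print(len(inputs), len(inputs[0]))                   # 1000 10
print(count_params(D=10, W=100, V=10))               # 2100  (shape (D, W, D) + lm head)
print(count_params(D=10, W=100, V=10, n_hidden=2))   # 12100 (shape (D, W, W, D) + lm head)
```

Here `n_hidden=1` corresponds to the 2-layer MLP (two weight matrices) and `n_hidden=2` to the 3-layer MLP.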

We train the models using cross-entropy loss with the Adam optimizer.
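To make the loss concrete, here is a small standard-library sketch of the sample-averaged cross-entropy (in nats). It is not the training code; `mean_cross_entropy` is a hypothetical helper. It illustrates that a model with uniform predictions over \(V\) labels has loss \(\log V\), which is the natural baseline for a model that has memorized nothing.

```python
import math

def mean_cross_entropy(logits, labels):
    """Average of -log softmax(logits)[label] over samples, in nats."""
    total = 0.0
    for row, y in zip(logits, labels):
        m = max(row)  # subtract the max for numerical stability
        log_z = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_z - row[y]
    return total / len(labels)

V = 10
uniform_logits = [[0.0] * V for _ in range(5)]   # every label equally likely
loss = mean_cross_entropy(uniform_logits, [0, 1, 2, 3, 4])
print(abs(loss - math.log(V)) < 1e-12)  # True
```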

We compute the memorized bits as

\[C=(\mathrm{log}(V)-\ell_{\rm final})\frac{N}{\mathrm{log}(2)}\]

where \(\ell_{\rm final}\) is the final loss (averaged over data samples, which is why there is an additional multiplicative factor \(N\)). The logarithms are natural, so dividing by \(\mathrm{log}(2)\) converts nats to bits.
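The formula translates directly into code. This is a sketch with a hypothetical function name, assuming the loss is a natural-log cross-entropy averaged over the \(N\) samples.

```python
import math

def memorized_bits(final_loss, N, V):
    """C = (log V - final_loss) * N / log 2, with final_loss in nats per sample."""
    return (math.log(V) - final_loss) * N / math.log(2)

# A model stuck at the uniform baseline has memorized nothing.
print(memorized_bits(final_loss=math.log(10), N=1000, V=10))    # 0.0
# A model with zero loss has memorized log2(V) bits per sample.
print(round(memorized_bits(final_loss=0.0, N=1000, V=10), 1))   # 3321.9
```

Dividing the result by the parameter count gives the bits-per-parameter figure quoted in the results.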

Results 1: for 2L MLP, 2.4 bits/parameter

We fix \(D=10\) and \(V=10\), and vary \(W\) (width) and \(N\) (the number of data points).

The curves do not simply rise and plateau, due to the jamming phenomenon found in memory-1. Reducing learning rates would make a better “rise and plateau” plot, but I’m a bit lazy here.

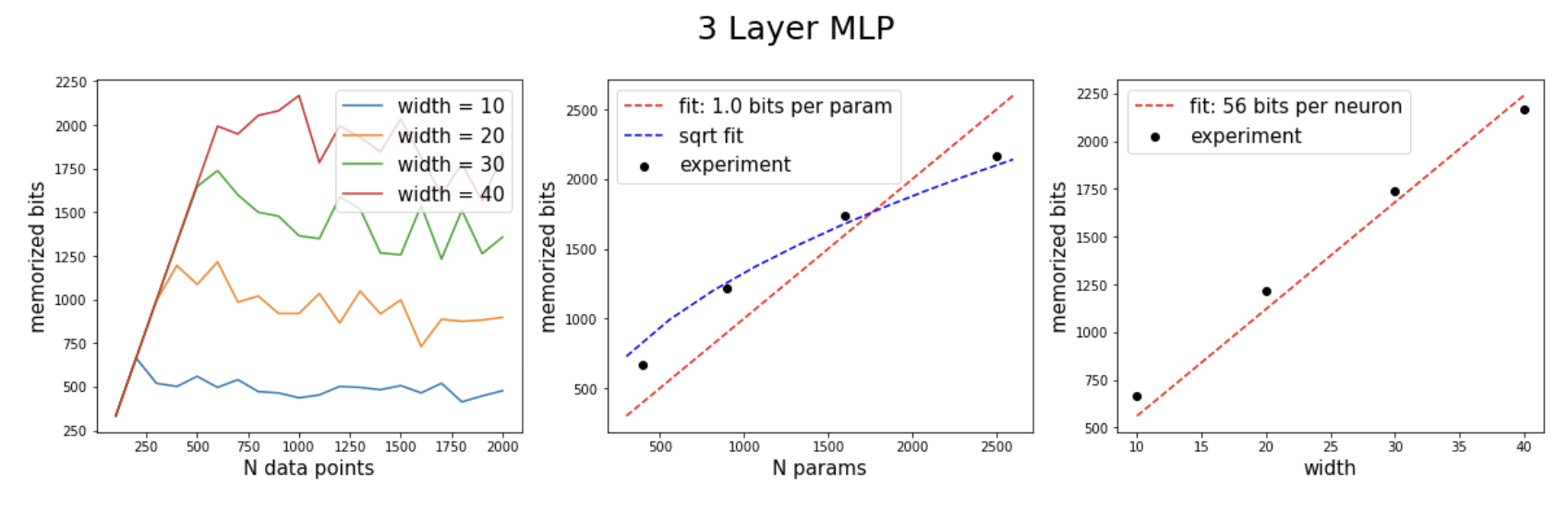

Results 2: for 3L MLP, memorized bits scale linearly with width, but not with \(N_{\rm params}\) (when only hidden dimension is scaled)

For the data, we fix \(V=10\) and vary \(N\).

For the model, we keep the input dimension \(D=10\) fixed and only vary the width \(W\).

I speculate that if we scale both width and input dimension together, we might recover the relation \(C\sim N_{\rm params}\).

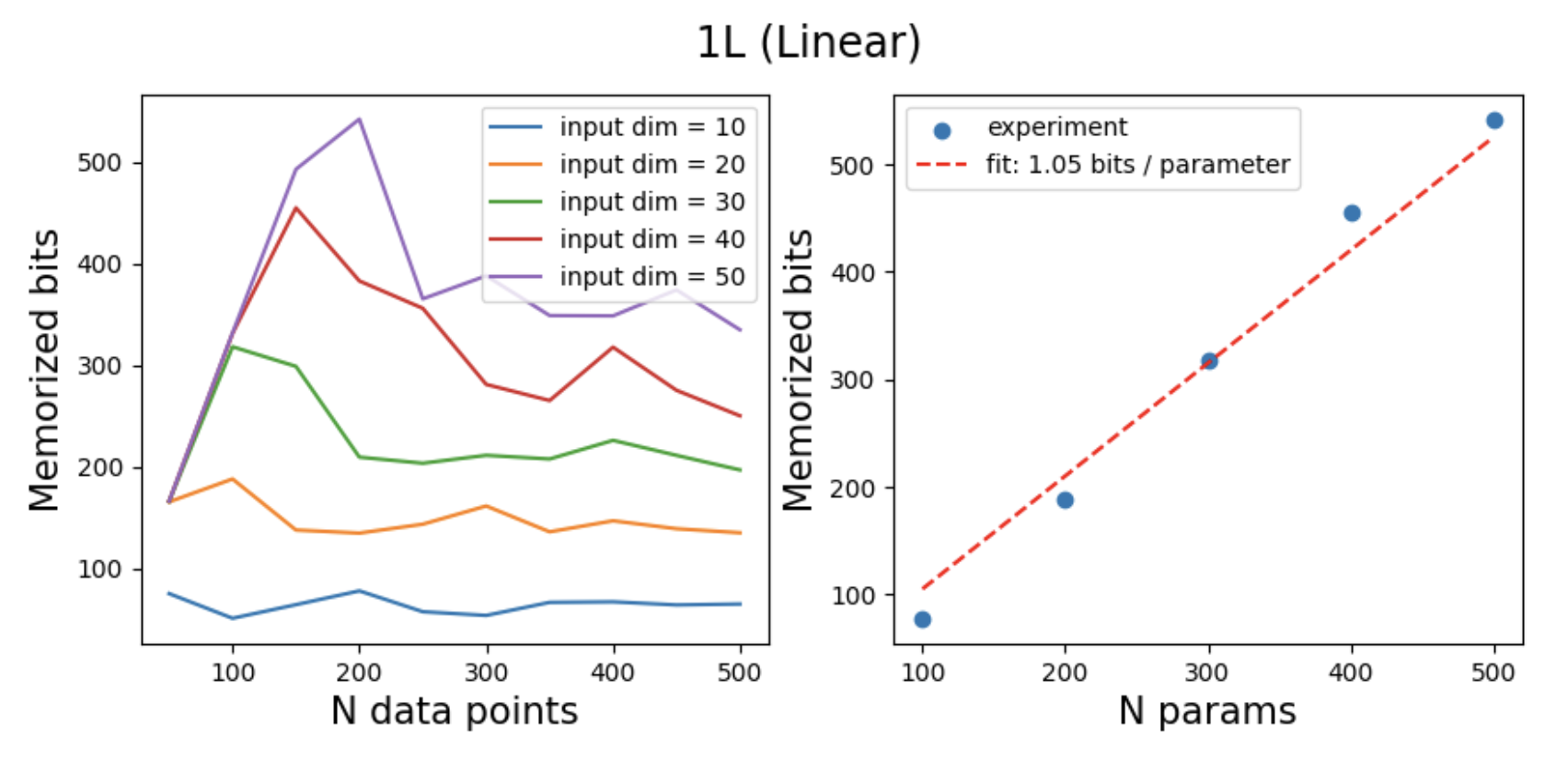

Results 3: for 1L linear model, 1 bit/parameter

The capacity of 1 bit per parameter is smaller than 2.4 bits per parameter. This suggests that if we want to understand how empirical measurements (2, 2.4, or 3.6 bits) arise, a linear model is not a good toy model. A 2-layer MLP appears to be the minimal model we need to study.

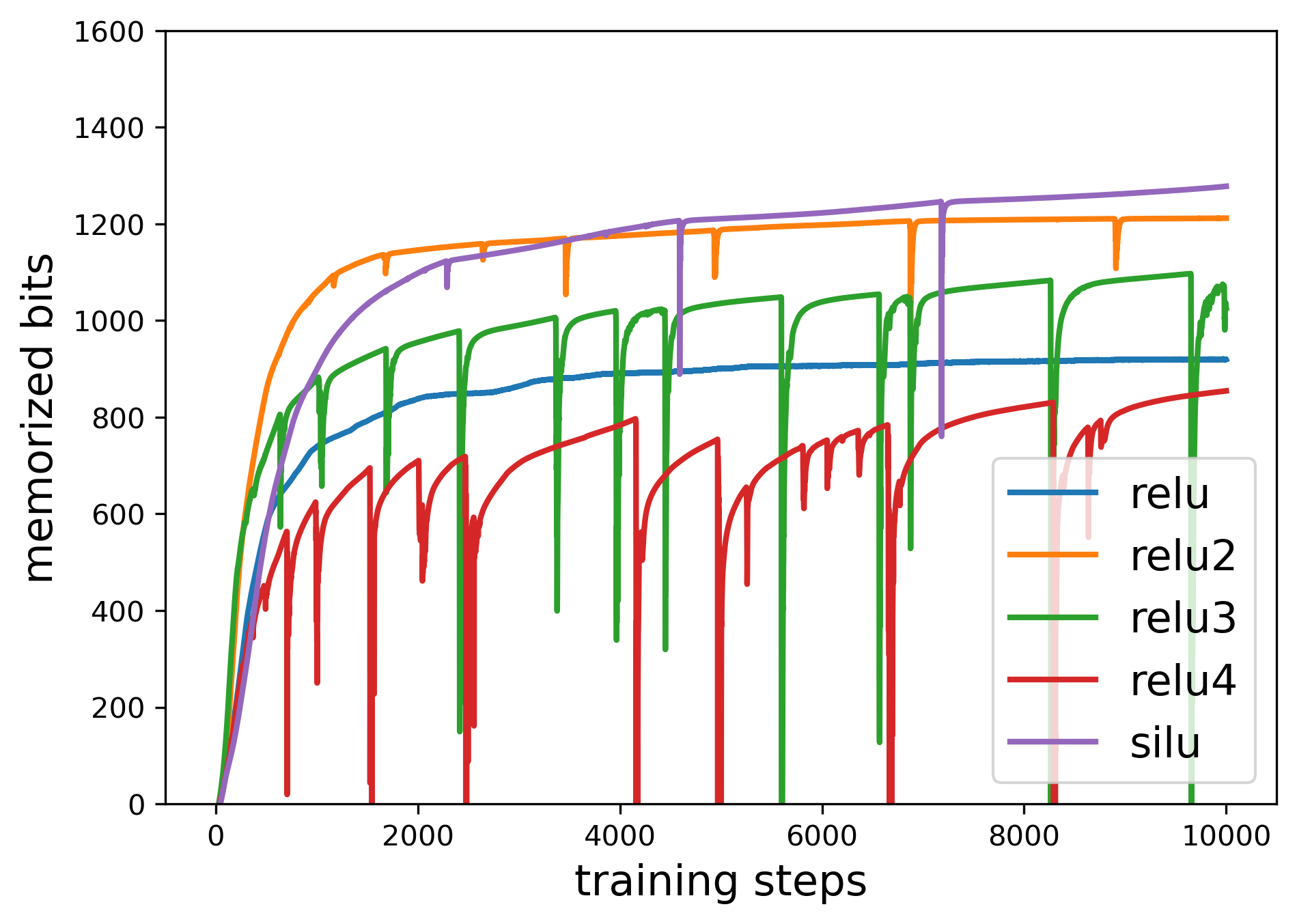

Results 4: activation functions can change memory capacity

Modern Hopfield network theory highlights that the functional form of interactions plays a key role, and this is closely related to activation functions. It is therefore interesting to examine how activation functions affect memorization capacity.

We find that common choices such as \({\rm ReLU}^2\) and SiLU outperform ReLU, in the sense that they achieve higher memorization capacity. Although \({\rm ReLU}^3\) and \({\rm ReLU}^4\) should in theory outperform \({\rm ReLU}^2\) (because they are higher-order polynomials), they suffer from training instability.
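For reference, the activations compared here can be written as follows, reading ReLU2, ReLU3, and ReLU4 as ReLU raised to the power 2, 3, and 4. The function names are illustrative, and this scalar version is only a sketch, not the notebook's implementation.

```python
import math

def relu_power(x, k=1):
    """ReLU(x)**k; k=1 is plain ReLU, k=2 is squared ReLU, and so on."""
    return max(x, 0.0) ** k

def silu(x):
    """SiLU: x * sigmoid(x). Note math.exp overflows for very negative x."""
    return x / (1.0 + math.exp(-x))

for k in (1, 2, 3, 4):
    print(k, relu_power(3.0, k))   # outputs grow quickly with k for large inputs
print(silu(1.0))
```

The rapid growth of higher powers at large pre-activations is one plausible contributor to the instability mentioned above, though the post does not establish the cause.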

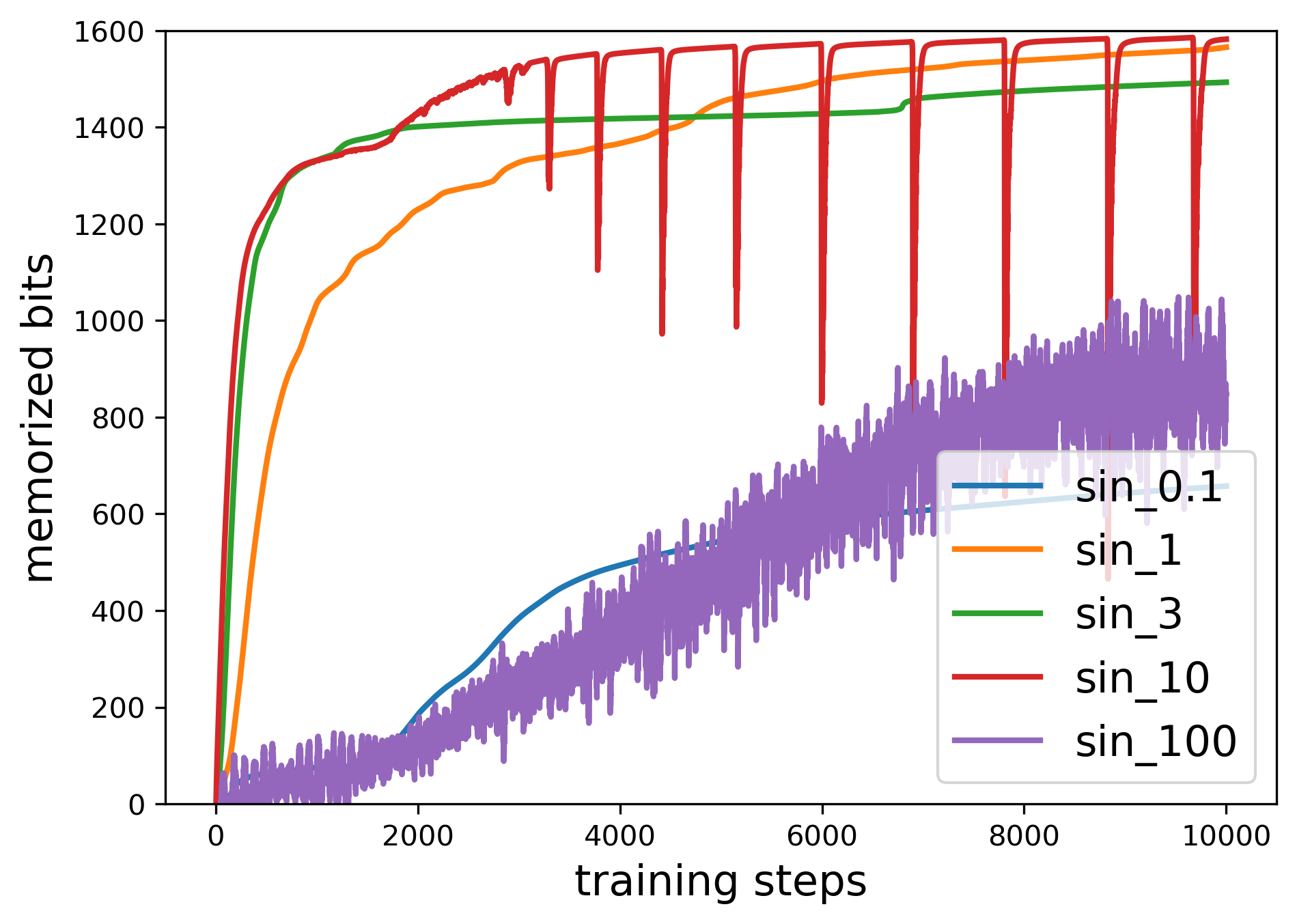

We also experiment with periodic activation functions \({\rm sin}(fx)\). When \(f\) is small, the function behaves approximately linearly, corresponding to low capacity. When \(f\) is large (similar to random Fourier features), the function becomes highly expressive, corresponding to high capacity.

However, \(f\) cannot be too large, otherwise training becomes unstable. This reveals a memorization–stability tradeoff: when a network becomes more expressive (and thus better at memorization), training tends to become less stable.
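The small-\(f\) and large-\(f\) regimes can be checked numerically. The sketch below (hypothetical helper name, standard library only) compares \({\rm sin}(fx)\) with its linearization \(fx\) at a fixed input: the two agree closely when \(f\) is small and diverge once \(fx\) is of order one or larger.

```python
import math

def sin_activation(x, f):
    """Periodic activation sin(f * x) with frequency f."""
    return math.sin(f * x)

x = 1.0
for f in (0.01, 0.1, 1.0, 10.0):
    exact = sin_activation(x, f)
    linear = f * x                      # first-order Taylor approximation
    print(f, exact, linear, abs(exact - linear))
```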

By the way, the high-frequency problem might be the reason why KAN-based transformers haven’t achieved SOTA yet: KANs’ expressive power could hinder training stability (and representation learning). Could there be a free lunch, with stability and capacity at the same time?

If memorization is indeed an important aspect of LLMs, then one possible strategy for designing new activation functions is to test them on toy models, measure their memorization capacity, and prioritize those with the highest capacity as promising candidates.

Comment: How does this connect to LLMs?

Although I have not yet conducted experiments on full LLMs, I would like to propose a few hypotheses.

I speculate that:

- The linear relation with \(N_{\rm params}\) arises from the use of 2-layer MLPs.

- One might ask: transformers contain many blocks along depth (many more than two layers), so why would a 2-layer picture apply? My explanation is that

(1) residual connections make these blocks effectively parallel (at least for memorization; see the next point), so increasing depth effectively increases width.

(2) A 2-layer MLP naturally represents key–value pairs, meaning a shallow structure is inherently suitable for memorization.

If we were to use 3-layer MLPs inside transformers:

- If we only scale the width \(W\) but keep the embedding dimension \(D\) fixed (which corresponds to the input dimension in our toy model), we would obtain \(C\sim W\) while \(N_{\rm params}\sim W^2\), because the middle weight matrix dominates the parameter count. Thus \(C\sim \sqrt{N_{\rm params}}\).

- If we scale both the width \(W\) and the embedding dimension \(D\) together, then the input contributes a factor of \(W\) (as suggested by the 1D linear results), and the width contributes another factor of \(W\). This leads to \(C\sim W^2\) and \(N_{\rm params}\sim W^2\). Therefore \(C\sim N_{\rm params}\).
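As a hedged check of the two scaling cases above, one can count the parameters of a bias-free 3-layer MLP of shape \((D, W, W, D)\) explicitly. Including the \(D\times V\) lm head in the count is an assumption; dropping it does not change the leading order. The relations \(C\propto W\) and \(C\propto W^2\) are taken from the argument above, not derived here.

\[N_{\rm params}=\underbrace{DW}_{\rm input}+\underbrace{W^2}_{\rm middle}+\underbrace{WD}_{\rm output}+\underbrace{DV}_{\rm lm\ head}=W^2+2DW+DV\]

With \(D\) and \(V\) fixed and \(W\gg D\), the middle matrix dominates:

\[N_{\rm params}\approx W^2\ \Rightarrow\ W\approx\sqrt{N_{\rm params}}\ \Rightarrow\ C\propto W\propto\sqrt{N_{\rm params}}\]

If instead \(D=\alpha W\) for some constant \(\alpha\) (a hypothetical proportionality introduced only for this illustration), then

\[N_{\rm params}=(1+2\alpha)W^2+\alpha VW\approx(1+2\alpha)W^2\ \Rightarrow\ C\propto W^2\propto N_{\rm params}\]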

Some questions remain unexplained:

- Why 2.4 bits? Now that we have a simple toy model (2-layer MLP), the next step may involve mechanistic interpretability or statistical analysis.

- How should we choose activation functions? One possible approach is to search for activation functions that yield high memorization capacity on toy models, and then test them in real LLMs.

Code

Google Colab notebook available here.

Citation

If you find this article useful, please cite it as:

BibTeX:

@article{liu2026memory-2,

title={Memory 2 -- How many bits does each parameter store? An analysis of MLP},

author={Liu, Ziming},

year={2026},

month={March},

url={https://KindXiaoming.github.io/blog/2026/memory-2/}

}
