A Toy Model of Masked-Autoencoder (MAE)

Author: Ziming Liu (刘子鸣)

Motivation

This blog post proposes a toy model of the masked autoencoder (MAE). We use a synthetic ShapeWorld dataset in which each image contains a single object (a circle or a square) at a random position.

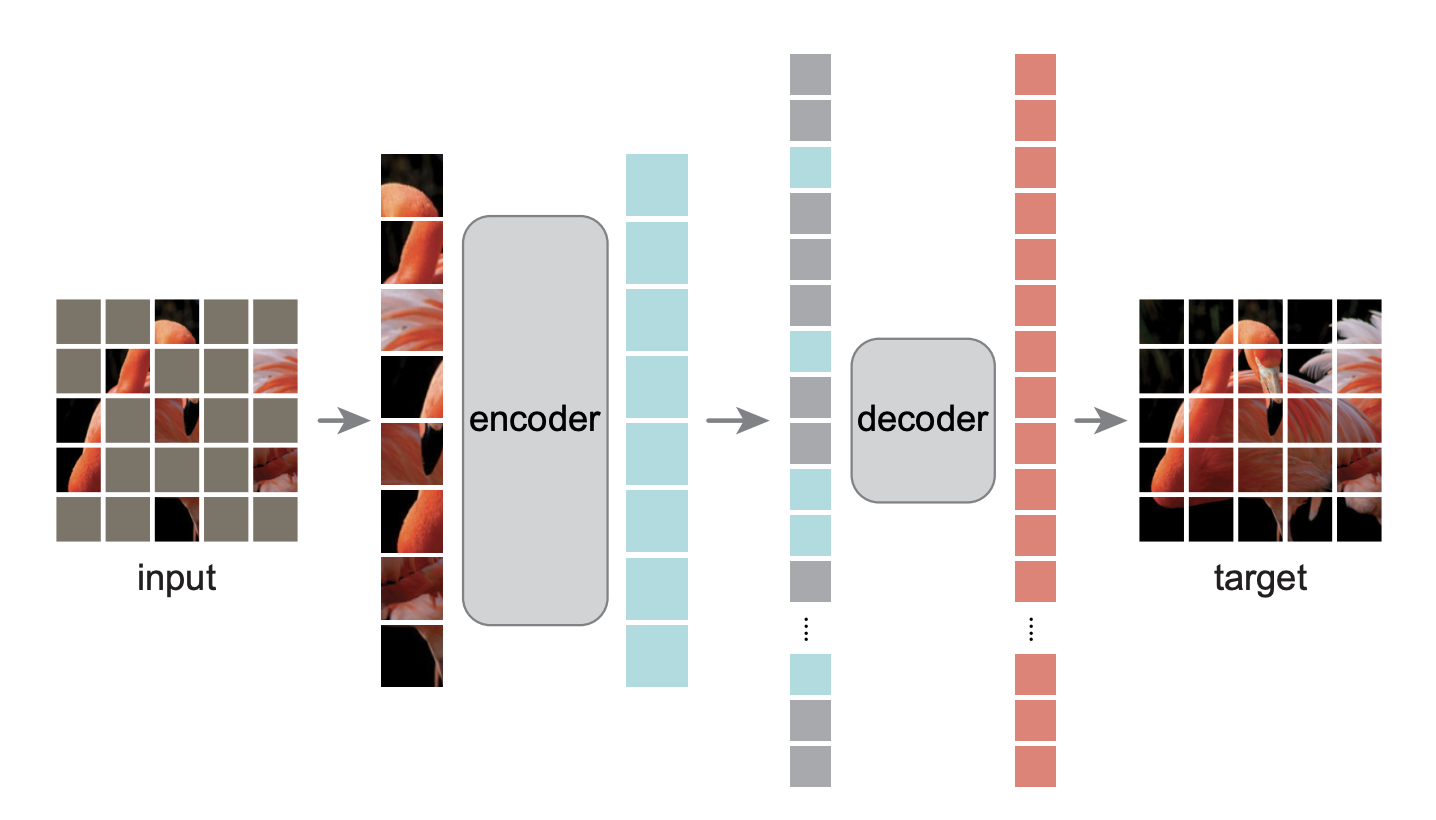
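As a rough illustration of what such a dataset could look like, the sketch below draws one filled circle or square per image at a random position. The image size (32), the object radius (5), the binary pixel values, and the names `make_shape_image` and `make_dataset` are all assumptions for illustration; the notebook may generate its data differently.

```python
import numpy as np

def make_shape_image(rng, size=32, radius=5):
    """Return (image, label); label 0 = circle, 1 = square (assumed encoding)."""
    label = int(rng.integers(0, 2))
    # Sample the center so that the whole object stays inside the image.
    cy, cx = rng.integers(radius, size - radius, size=2)
    yy, xx = np.mgrid[0:size, 0:size]
    if label == 0:
        # Filled disk of the given radius.
        mask = (yy - cy) ** 2 + (xx - cx) ** 2 <= radius ** 2
    else:
        # Filled axis-aligned square with half-width equal to the radius.
        mask = (np.abs(yy - cy) <= radius) & (np.abs(xx - cx) <= radius)
    return mask.astype(np.float32), label

def make_dataset(n, size=32, radius=5, seed=0):
    """Stack n random images into (n, size, size) plus an (n,) label array."""
    rng = np.random.default_rng(seed)
    pairs = [make_shape_image(rng, size, radius) for _ in range(n)]
    images = np.stack([img for img, _ in pairs])
    labels = np.array([lab for _, lab in pairs])
    return images, labels
```

Calling `make_dataset(1000)` would then give a small labeled set on which both reconstruction and shape classification can be studied.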

The goal of MAE is to reconstruct the full image from masked or corrupted inputs. The MAE architecture is shown below (Figure 1 of the MAE paper).

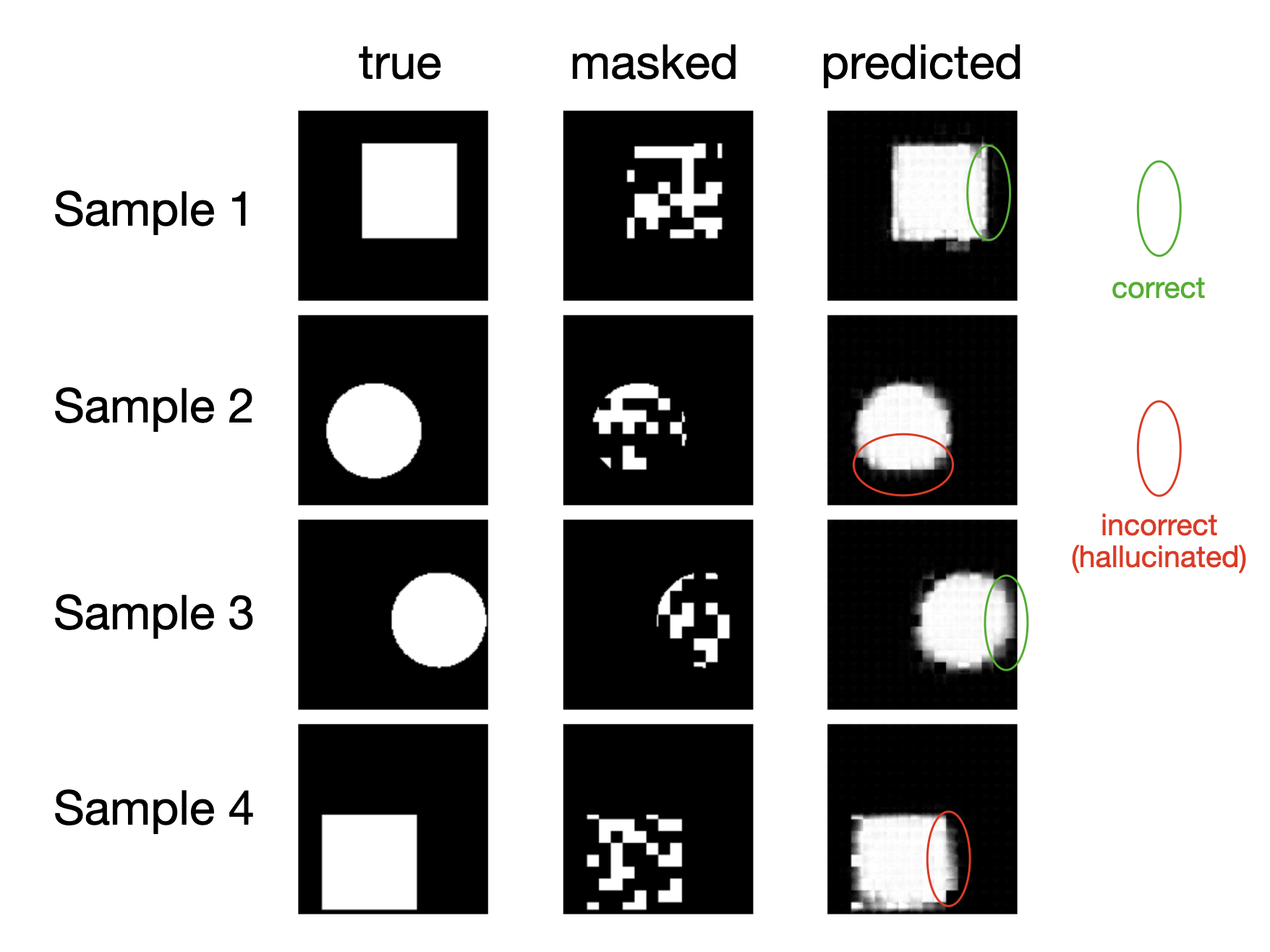
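The masking step can be sketched as follows: the image is cut into non-overlapping patches, a random subset is kept visible for the encoder, and the rest are hidden and must be reconstructed. The patch size (4), the single-channel input, and the helper names `patchify` and `random_masking` are assumptions made for this sketch, not details confirmed by the post.

```python
import numpy as np

def patchify(image, patch=4):
    """Split an (H, W) image into (num_patches, patch * patch) rows."""
    h, w = image.shape
    assert h % patch == 0 and w % patch == 0, "image must tile evenly"
    grid = image.reshape(h // patch, patch, w // patch, patch)
    # Reorder to (patch_row, patch_col, y_in_patch, x_in_patch), then flatten.
    return grid.transpose(0, 2, 1, 3).reshape(-1, patch * patch)

def random_masking(patches, mask_ratio, rng):
    """Keep a random subset of patches visible.

    Returns the visible patches, their indices, and a boolean mask
    over all patches where True means "masked".
    """
    n = patches.shape[0]
    n_keep = int(n * (1 - mask_ratio))
    keep = np.sort(rng.permutation(n)[:n_keep])
    mask = np.ones(n, dtype=bool)
    mask[keep] = False
    return patches[keep], keep, mask
```

Only the visible patches (with their positions) would be passed to the encoder; the decoder then predicts the pixels of the patches where `mask` is `True`.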

Observation 1: Hallucination

By inspecting examples, we find that MAE can suffer from local hallucinations. (One may argue this is also a source of generalization or creativity, in the sense of Kamb and Ganguli.) For instance, a square may be locally hallucinated as a circle, or vice versa, even though a human can easily infer the correct shape. Note that both the encoder and decoder are vision transformers, so in principle they should be capable of modeling global structure effectively.

Observation 2: Representation Learning

A key argument behind MAE is that masked reconstruction is a challenging task, which incentivizes the encoder to learn stronger representations. The decoder is intentionally designed to be weaker than the encoder, so that the encoder’s output (the representation) must be informative enough for even a weak decoder to reconstruct pixel-level details.

To evaluate representation quality, we train a linear classifier to predict the shape (circle or square) from the learned representation. Classification accuracy is used as the metric.
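A linear probe of this kind can be sketched as logistic regression on frozen features. The choice of logistic regression with full-batch gradient descent, the feature standardization, the hyperparameters, and the names `fit_linear_probe` and `probe_accuracy` are assumptions for illustration; the post only states that a linear classifier is trained on the representation.

```python
import numpy as np

def fit_linear_probe(feats, labels, lr=0.1, steps=500):
    """Fit logistic regression on frozen (n, d) features with labels in {0, 1}."""
    # Standardize features so one learning rate works across dimensions.
    mean, std = feats.mean(axis=0), feats.std(axis=0) + 1e-8
    x = (feats - mean) / std
    w = np.zeros(x.shape[1])
    b = 0.0
    for _ in range(steps):
        logits = np.clip(x @ w + b, -30.0, 30.0)  # clip to avoid overflow in exp
        p = 1.0 / (1.0 + np.exp(-logits))
        grad = p - labels  # gradient of the mean cross-entropy w.r.t. logits
        w -= lr * (x.T @ grad) / len(x)
        b -= lr * grad.mean()
    return {"w": w, "b": b, "mean": mean, "std": std}

def probe_accuracy(probe, feats, labels):
    """Fraction of samples whose predicted class matches the label."""
    x = (feats - probe["mean"]) / probe["std"]
    pred = (x @ probe["w"] + probe["b"] > 0).astype(int)
    return float((pred == labels).mean())
```

Here `feats` would hold the encoder outputs (for example, pooled over tokens) with the encoder weights held fixed, so the accuracy reflects how linearly accessible the shape information is.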

Masked Ratio

Consistent with the original MAE paper, the masked ratio exhibits a sweet spot at intermediate values: accuracy peaks at a masked ratio of 0.6 and drops sharply beyond it.

| masked ratio | 0.1 | 0.5 | 0.6 | 0.7 | 0.8 | 0.9 |
|---|---|---|---|---|---|---|
| classification acc | 0.978 | 0.980 | 0.986 | 0.924 | 0.851 | 0.767 |

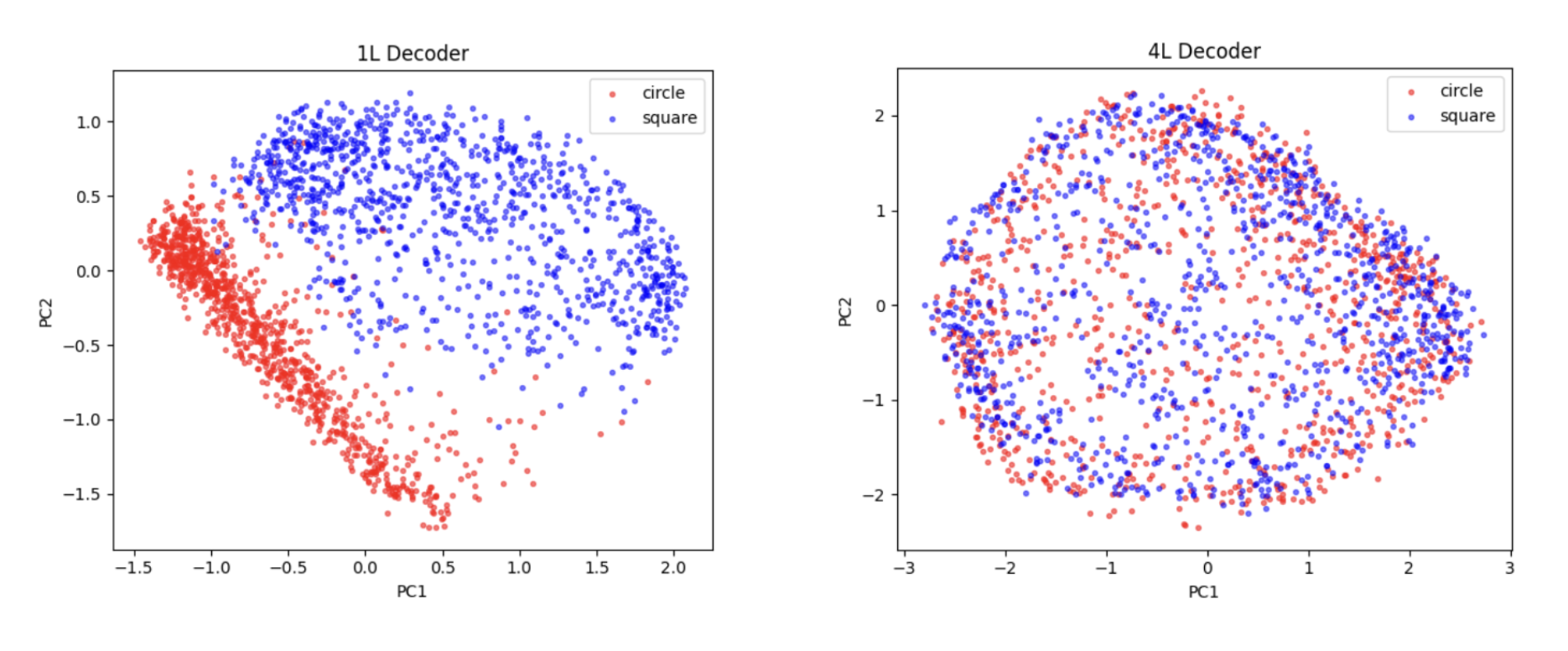

Decoder Depth

We fix the encoder depth at 4 and the masked ratio at 0.6, and vary the decoder depth. As expected, stronger (deeper) decoders lead to weaker representations: probe accuracy falls from 0.991 with a 1-layer decoder to 0.829 with a 4-layer decoder.

| decoder depth | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| classification acc | 0.991 | 0.986 | 0.898 | 0.829 |

If we project the representations onto the plane spanned by the first two principal components (PCs), we clearly observe linear separability with a 1-layer decoder, but not with a 4-layer decoder.
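The projection onto the first two PCs can be sketched with a plain SVD of the centered feature matrix. The function name `project_to_top2_pcs` and the assumption that representations are stored as an `(n, d)` array are illustrative choices, not taken from the notebook.

```python
import numpy as np

def project_to_top2_pcs(feats):
    """Project (n, d) features onto their first two principal components.

    Returns the (n, 2) coordinates and the fraction of variance
    explained by each of the two components.
    """
    centered = feats - feats.mean(axis=0, keepdims=True)
    # Rows of vt are principal directions, ordered by decreasing singular value.
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = s[:2] ** 2 / np.sum(s ** 2)
    return centered @ vt[:2].T, explained
```

Scatter-plotting the returned coordinates colored by shape label is one way to check visually whether the two classes are linearly separable in this plane.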

Code

Google Colab notebook available here.

Citation

If you find this article useful, please cite it as:

BibTeX:

@article{liu2026mae,
  title={A Toy Model of Masked-Autoencoder (MAE)},
  author={Liu, Ziming},
  year={2026},
  month={April},
  url={https://KindXiaoming.github.io/blog/2026/mae/}
}
