Attention Residual 2

Author: Ziming Liu (刘子鸣)

Motivation

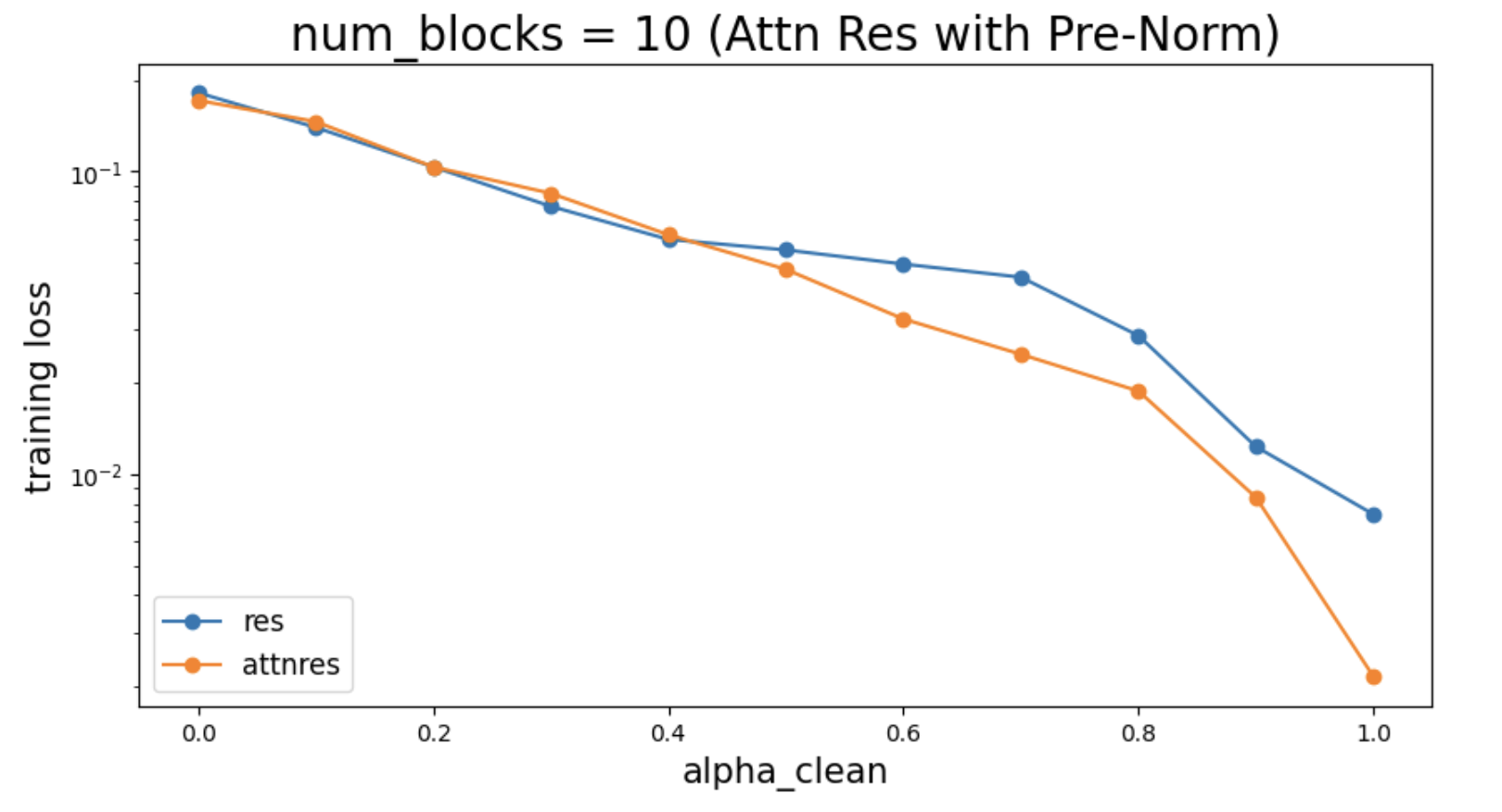

In a previous blog post, I studied when Attention Residual works. Thanks to discussions with Jiachen You and Jianlin Su, I realized that I had not included Pre-Norm in the attention residual formulation. After fixing this, the phase transition reported there disappears, and Attention Residual appears to consistently outperform standard residual across the spectrum of toy datasets I considered.

However, I still strongly believe in the No-Free-Lunch principle: there must be some trade-off. This prompted some deeper thinking.

Problem setup

Teacher-student setup

Now that both standard residual and attention residual use Pre-Norm, and the uniform bias at initialization is essentially the same for both, the key argument for attention residual is that it may learn to break this uniform bias during training.

To test whether attention residual indeed benefits from breaking this uniform bias, I construct a teacher–student setup. The teacher network is a randomly initialized Attention Residual network, but the attention matrices are treated as fixed (randomly generated). A temperature parameter controls how uniform the “true” attention is: when the temperature is high, the attention is uniform; when the temperature is low, the attention is peaked (close to one-hot). A student network is then trained to fit the dataset generated by the teacher.
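As a rough illustration of how a temperature can control the uniformity of fixed attention, here is a minimal sketch. It assumes the teacher's attention logits are i.i.d. standard normal and that layer `l` attends over the `l + 1` earlier outputs; the post does not specify either detail, and the function names are hypothetical.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Return softmax(logits / temperature), computed in a numerically stable way."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def make_teacher_attention(num_layers, temperature, seed=0):
    """Fixed random attention: row l holds weights over l + 1 earlier outputs.

    Assumption: logits are i.i.d. standard normal (not stated in the post).
    """
    rng = random.Random(seed)
    rows = []
    for l in range(num_layers):
        logits = [rng.gauss(0.0, 1.0) for _ in range(l + 1)]
        rows.append(softmax_with_temperature(logits, temperature))
    return rows

if __name__ == "__main__":
    # Low temperature should give peaked rows, high temperature near-uniform rows.
    for T in (0.01, 1.0, 100.0):
        last_row = make_teacher_attention(num_layers=6, temperature=T)[-1]
        print(T, [round(w, 3) for w in last_row])
```

Dividing the logits by a small temperature magnifies their differences, so the softmax concentrates on the largest logit; a large temperature shrinks the differences and pushes every row toward uniform weights.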

Ablation study

There are two main differences between standard residual and Attention Residual:

- Difference 1: the scale (norm) of hidden states accumulates in standard residual networks, whereas the scale remains relatively stable in attention residual due to normalization of attention weights. This subtle difference also means that using the same learning rate is not an apples-to-apples comparison (so we must sweep learning rates).

- Difference 2: the skip connections in attention residual are learnable.

In the spirit of isolating variables, I introduce a third model: “rescaled residual”, where hidden states are rescaled to keep their norms roughly constant:

\[h_{l+1}=\frac{l}{l+1}h_l + \frac{1}{l+1}f_l(x_l)\]

This largely eliminates Difference 1, leaving Difference 2 (learnability) as the primary distinction. Comparing “rescaled residual” to “attention residual” is therefore cleaner, since they have similar hidden-state scales and differ mainly in whether the attention matrix is learnable.
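To make the effect of the rescaled update concrete, the sketch below applies the standard and rescaled rules to the same sequence of block outputs. It rests on two assumptions that are not in the post: layers are indexed from `l = 0` with `h_0 = 0`, and each block output is replaced by a random unit vector rather than a function of the hidden state. Under that reading, the rescaled stream is just the running mean of the block outputs, which resembles an attention residual with weights frozen at uniform.

```python
import math
import random

def random_unit_vector(dim, rng):
    """Stand-in for a block output f_l(x_l): a random direction with norm 1."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def norm(v):
    """Euclidean norm of a vector given as a list of floats."""
    return math.sqrt(sum(x * x for x in v))

def run_streams(block_outputs):
    """Apply both update rules to the same block outputs.

    Assumption: h_0 = 0 and l starts at 0, so the first rescaled state is f_0.
    """
    dim = len(block_outputs[0])
    h_std = [0.0] * dim  # standard residual: h <- h + f
    h_res = [0.0] * dim  # rescaled residual: h <- l/(l+1) * h + 1/(l+1) * f
    for l, f in enumerate(block_outputs):
        h_std = [h + x for h, x in zip(h_std, f)]
        h_res = [(l * h + x) / (l + 1) for h, x in zip(h_res, f)]
    return h_std, h_res

if __name__ == "__main__":
    rng = random.Random(0)
    outputs = [random_unit_vector(16, rng) for _ in range(64)]
    for depth in (1, 4, 16, 64):
        h_std, h_res = run_streams(outputs[:depth])
        print(depth, round(norm(h_std), 3), round(norm(h_res), 3))
```

Because the rescaled state is a convex combination of the block outputs, its norm can never exceed the largest block-output norm, whereas the plain sum is free to grow with depth. How the norms behave in a trained network depends on how correlated the block outputs are, which this toy stand-in does not capture.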

Results

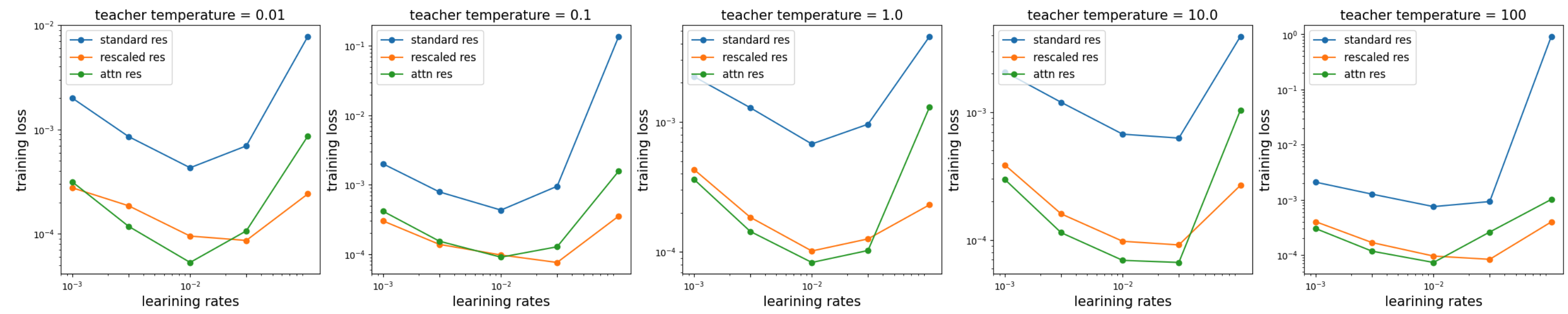

We sweep temperatures \(T\in\{0.01, 0.1, 1.0, 10.0, 100.0\}\) and learning rates \(\eta\in\{0.001, 0.003, 0.01, 0.03, 0.1\}\), and compare three models: standard residual, rescaled residual, and attention residual.
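The sweep can be enumerated as a simple grid. The sketch below uses the temperature and learning-rate values from the text; the model labels, the function names, and the idea of summarizing each (model, temperature) cell by its best learning rate are assumptions for illustration, not necessarily how the figure was produced.

```python
from itertools import product

TEMPERATURES = [0.01, 0.1, 1.0, 10.0, 100.0]
LEARNING_RATES = [0.001, 0.003, 0.01, 0.03, 0.1]
# Hypothetical labels for the three models compared in the post.
MODELS = ["standard_residual", "rescaled_residual", "attention_residual"]

def build_sweep():
    """Enumerate every (model, temperature, learning rate) configuration."""
    return [
        {"model": m, "temperature": T, "lr": lr}
        for m, T, lr in product(MODELS, TEMPERATURES, LEARNING_RATES)
    ]

def best_lr_per_cell(losses):
    """Pick the lowest-loss learning rate for each (model, temperature).

    `losses` maps (model, temperature, lr) -> final loss.
    Returns a dict mapping (model, temperature) -> (best_lr, best_loss).
    """
    best = {}
    for (model, T, lr), loss in losses.items():
        key = (model, T)
        if key not in best or loss < best[key][1]:
            best[key] = (lr, loss)
    return best

if __name__ == "__main__":
    configs = build_sweep()
    print(len(configs), "runs; first:", configs[0])
```

Taking the best learning rate per cell is one way to avoid penalizing a model for a poorly matched step size, which matters here because the models have different hidden-state scales.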

A few observations:

- Standard residual performs poorly, while rescaled residual is competitive with attention residual. Kimi’s results would be more convincing if they also evaluated a rescaled residual baseline (I suspect similar ideas have been proposed before). Of course, I am not claiming that these toy experiments fully capture large-scale settings, but this does raise the question of whether the observed gains truly come from learnable attention or simply from rescaling effects. One could even imagine treating the attention matrix as a fixed leaf tensor, i.e., constant with respect to hidden states; there are many ways to simplify their setup.

- I expected attention residual to show clear advantages when the temperature is low (i.e., when teacher attention is non-uniform). However, the results are not strong enough to clearly support or refute this hypothesis.

- Rescaled residual appears to be more stable than attention residual at high learning rates. This is expected: attention residual can be viewed as a form of gating or control, and such mechanisms can facilitate learning in other components only if the gating itself is well learned. This requires sufficient stability and/or feature learning, which is non-trivial.

Code

Google Colab notebook available here.

Citation

If you find this article useful, please cite it as:

BibTeX:

@article{liu2026AttnRes2,
  title={Attention Residual 2},
  author={Liu, Ziming},
  year={2026},
  month={March},
  url={https://KindXiaoming.github.io/blog/2026/attention-residual-2/}
}
