Machine Learning in High Energy Physics

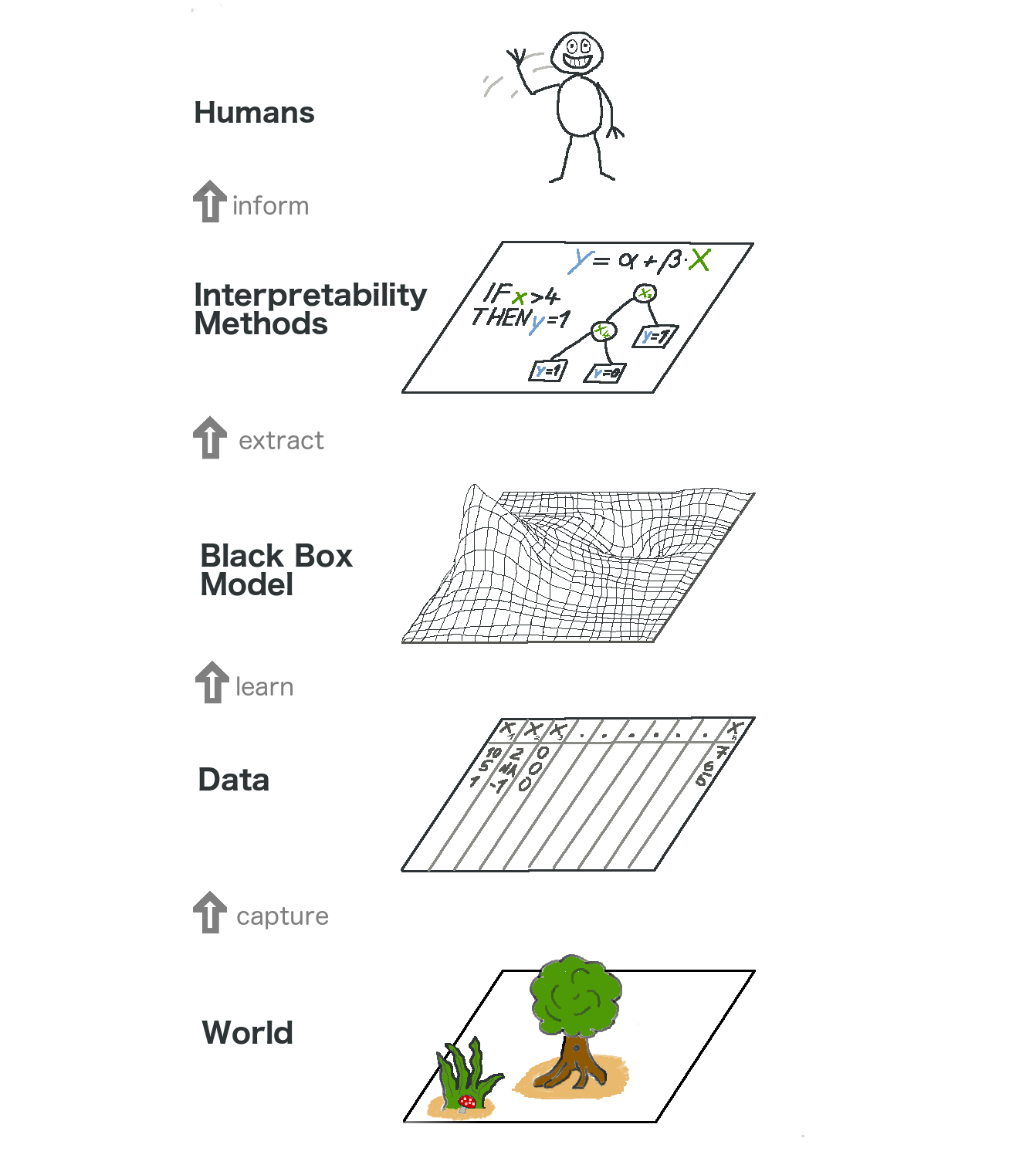

For a review of machine learning in high energy physics, you can refer to my article on ZhiHu. In my opinion, machine learning (ML) is an expert in phenomenology: we feed data to the algorithm, and it extracts features from the data. A supervised model also uses those features to make predictions. Why is combining ML with high energy physics (HEP) a good idea? People in the HEP community typically focus on one of three areas:

- Theory (e.g., string theory)

- Phenomenology (e.g., jets/QGP)

- Calculations (e.g., lattice QCD)

ML matters differently for each of these groups:

- First, we have to admit that ML is of little use to theorists who focus on the most formal aspects of HEP. The (numerical) parameters of a trained model cannot be translated into a clear (analytical) picture unless we already know that picture in advance.

- Second, for physicists working on phenomenology in HEP, ML is a good tool. Because ML is itself a form of phenomenology, it can help design observables.

- Third, for lattice QCD physicists, ML can help speed up calculations, because it allows the updates to be implemented more efficiently. Reinforcement learning is especially good at automatically searching for parameters that outperform handcrafted ones.

Our work (Highlight: Innovative work!)

My research experience falls into the second category, focusing on the phenomenology of the quark-gluon plasma (QGP). We propose a data-driven machine learning method based on principal component analysis (PCA) to discover new flow observables, which are better indicators of initial-state fluctuations than traditional flow harmonics.
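To give a feeling for what PCA does with flow-like data, here is a minimal toy sketch. It is not the analysis from our paper: the events are synthetic, and the function names (`toy_events`, `pca`, `dominant_harmonic`) and all parameter values are assumptions made up for illustration. Each toy event is an azimuthal distribution with a second and a third harmonic whose magnitudes and event-plane angles fluctuate from event to event, and PCA is done with a plain SVD of the centered event matrix.

```python
import numpy as np


def toy_events(n_events=2000, n_bins=48, seed=0):
    """Synthetic dN/dphi per event (hypothetical toy data, not real collisions)."""
    rng = np.random.default_rng(seed)
    phi = np.linspace(0.0, 2.0 * np.pi, n_bins, endpoint=False)
    # Fluctuating flow magnitudes and event-plane angles, one set per event.
    v2 = rng.rayleigh(0.06, size=(n_events, 1))
    v3 = rng.rayleigh(0.03, size=(n_events, 1))
    psi2 = rng.uniform(0.0, 2.0 * np.pi, size=(n_events, 1))
    psi3 = rng.uniform(0.0, 2.0 * np.pi, size=(n_events, 1))
    dist = (1.0
            + 2.0 * v2 * np.cos(2.0 * (phi - psi2))
            + 2.0 * v3 * np.cos(3.0 * (phi - psi3)))
    return phi, dist


def pca(data, n_components):
    """PCA via SVD: rows are events, columns are azimuthal bins."""
    centered = data - data.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = s**2 / np.sum(s**2)      # explained-variance ratio
    components = vt[:n_components]       # orthonormal basis shapes in phi
    scores = centered @ components.T     # per-event coefficients
    return components, explained[:n_components], scores


def dominant_harmonic(component):
    """Fourier harmonic n that carries most of a component's power."""
    spectrum = np.abs(np.fft.rfft(component))
    return int(np.argmax(spectrum[1:]) + 1)


phi, events = toy_events()
components, explained, scores = pca(events, n_components=4)

for k, comp in enumerate(components):
    print(f"PC{k + 1}: harmonic n = {dominant_harmonic(comp)}, "
          f"explained variance ratio = {explained[k]:.3f}")
```

In this toy setup the leading components come out as Fourier-like shapes in the azimuthal angle, ordered by how much event-by-event variance they carry, and the per-event `scores` play the role of flow-like coefficients. Real data would of course add finite multiplicity, detector effects and transverse-momentum dependence, none of which is modeled here.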

I presented this work in an oral talk at the 4th China LHC Physics Conference. If you are interested, click me to get the slides.

The paper is in preparation and will be submitted soon.

Title: Principal Component Analysis of Collective Flow in Relativistic Heavy-Ion Collisions

Collaborators: HuiChao Song, Wenbin Zhao