About Me

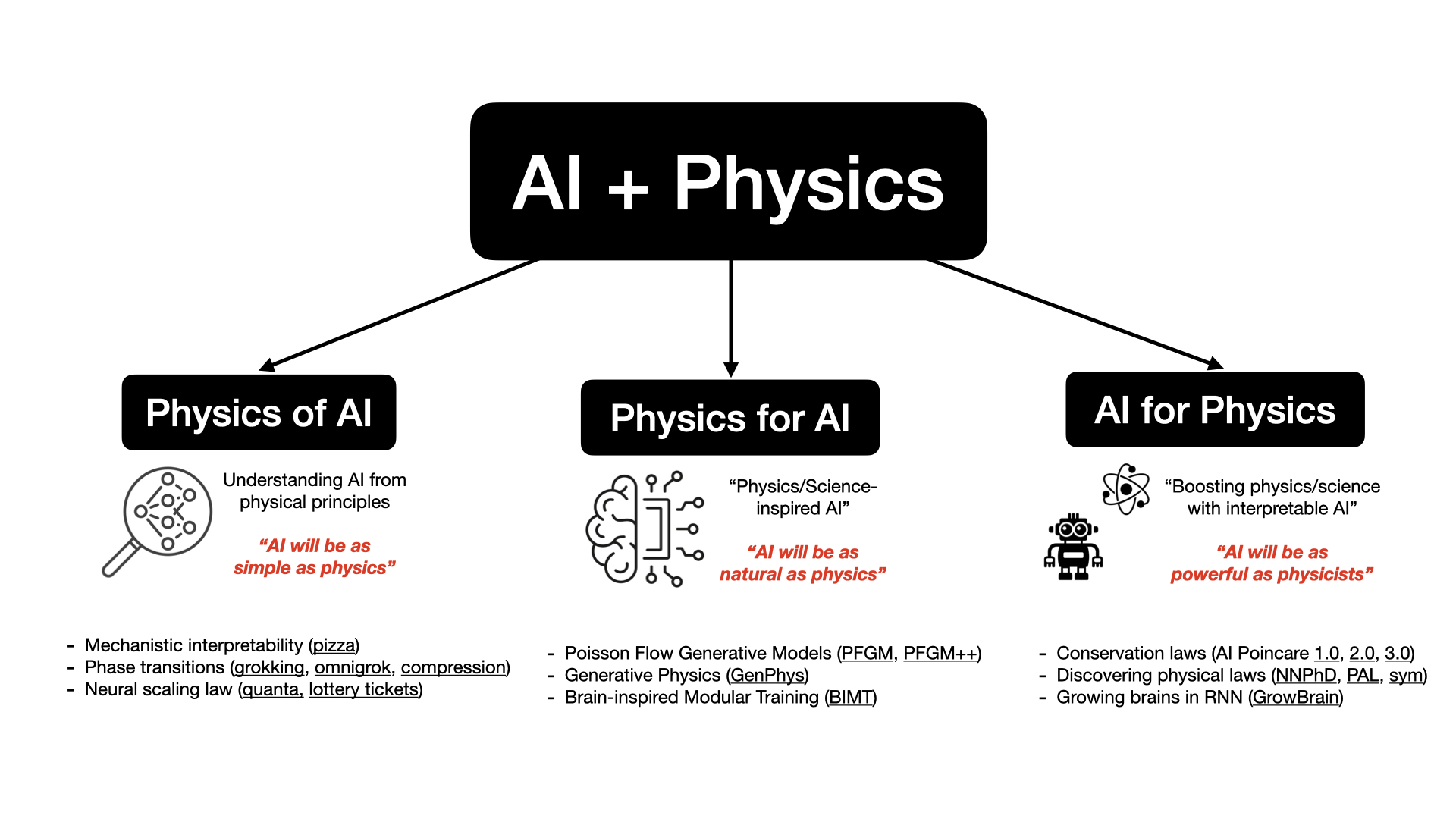

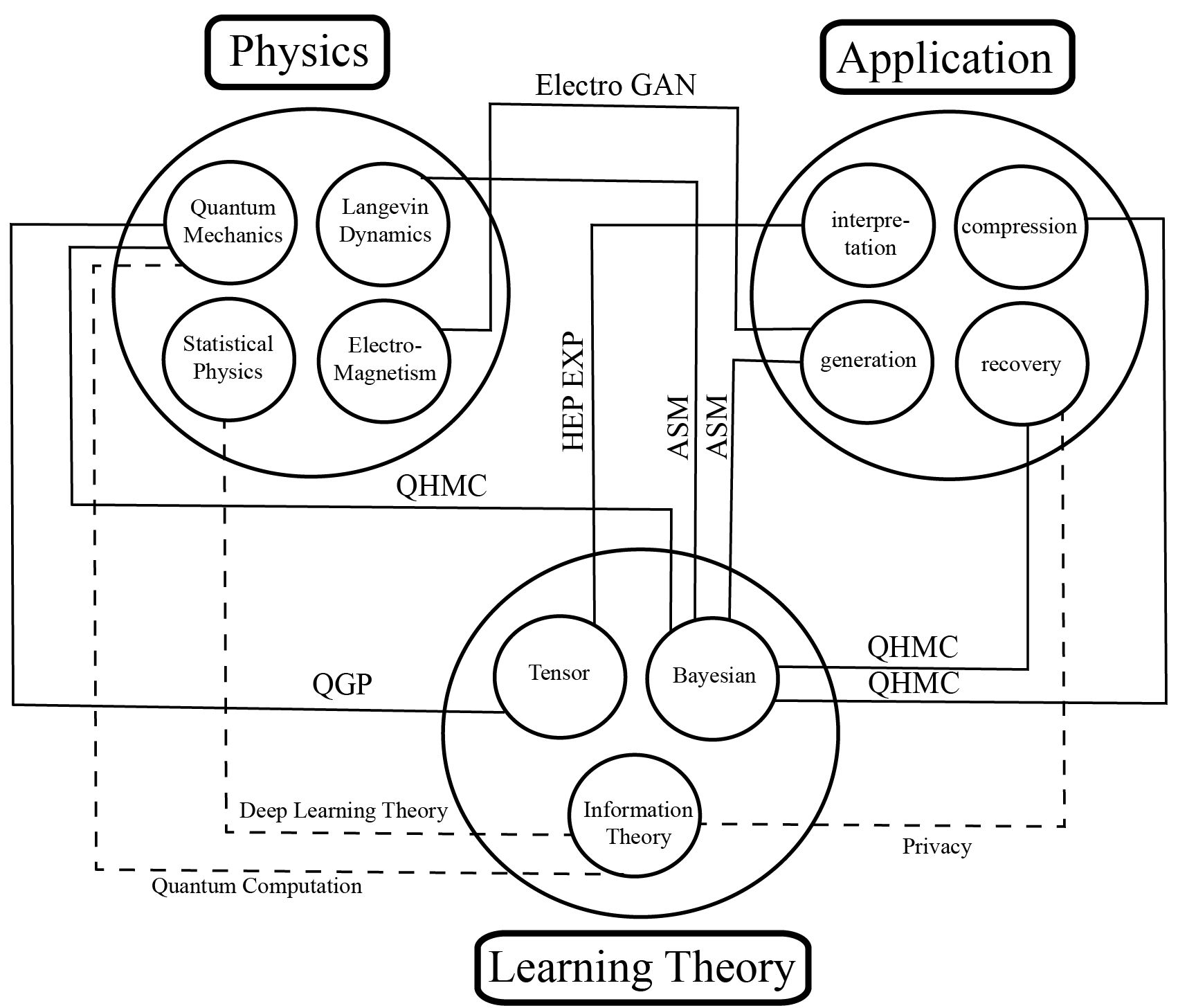

I am a physicist and a machine learning researcher. I am currently a third-year PhD student at MIT and IAIFI, advised by Max Tegmark. My research interests lie broadly at the intersection of artificial intelligence (AI) and physics (and science in general):

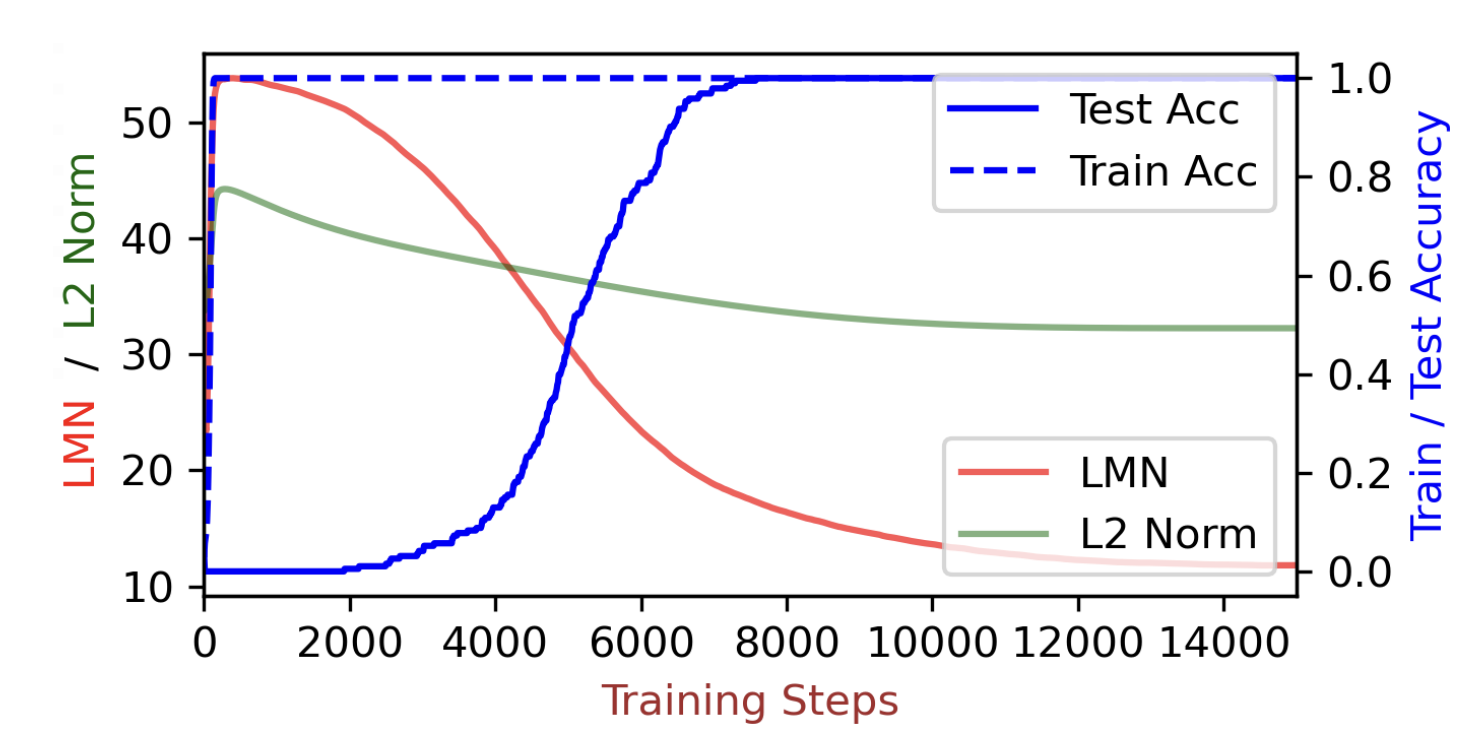

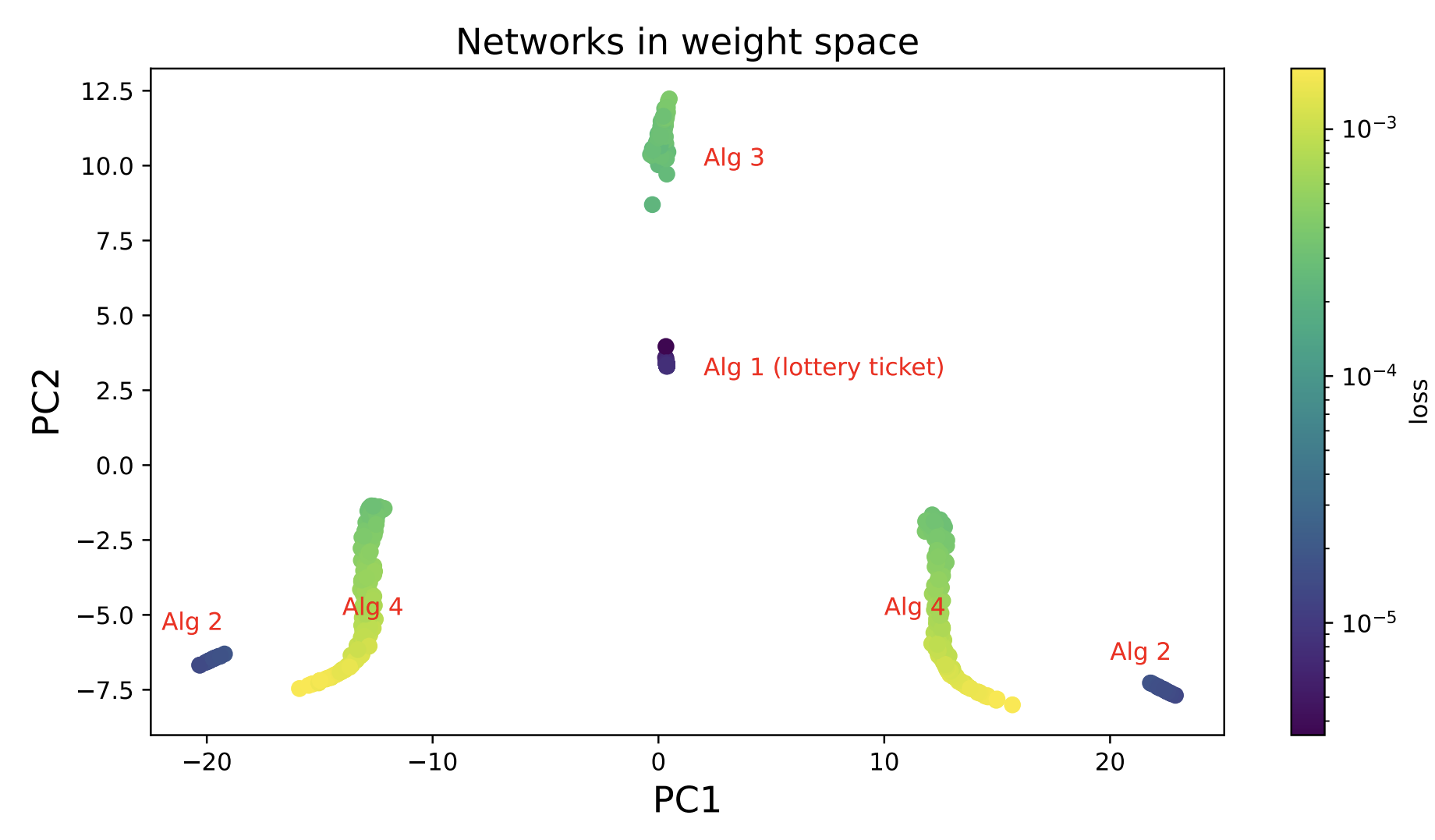

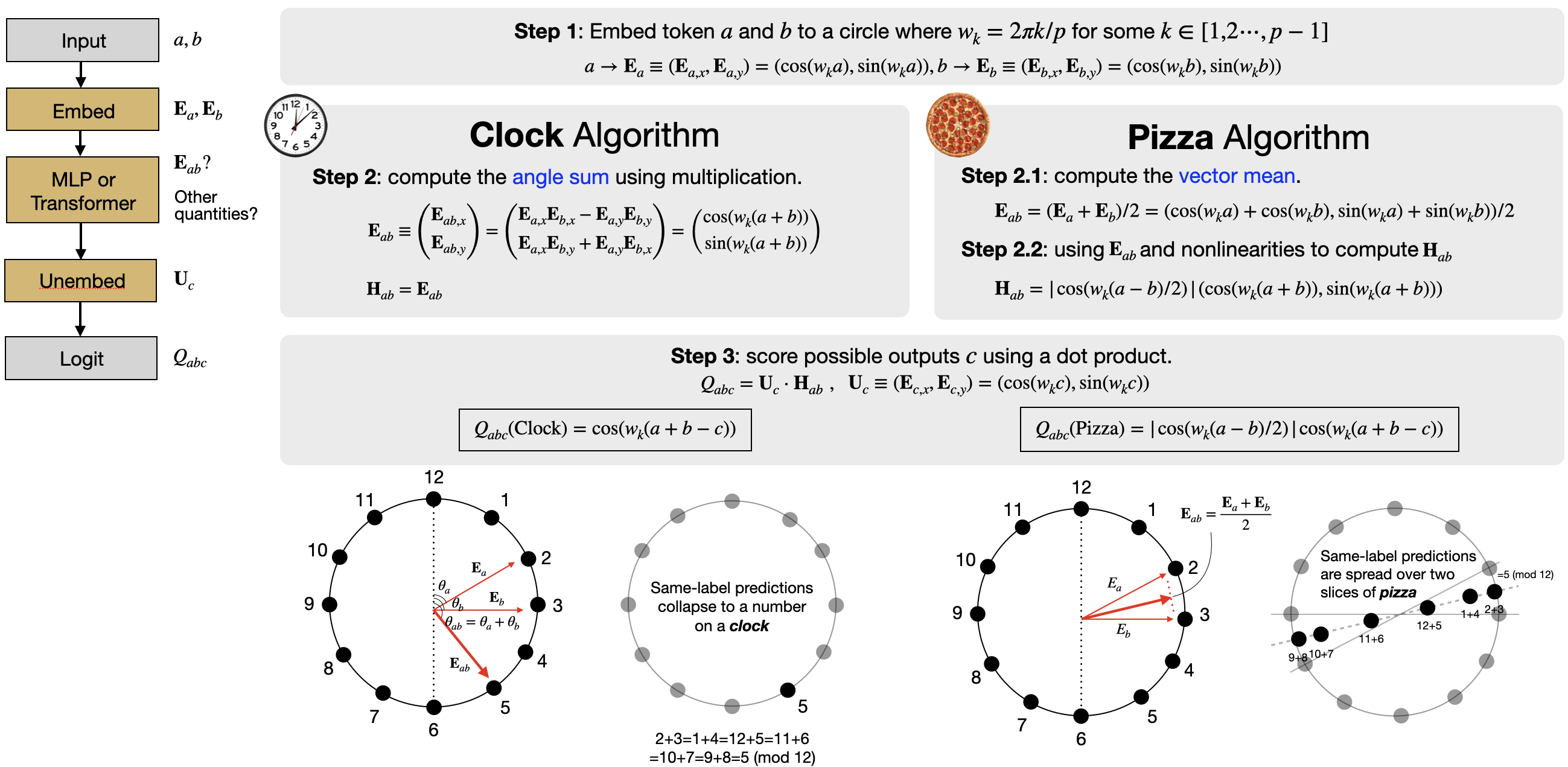

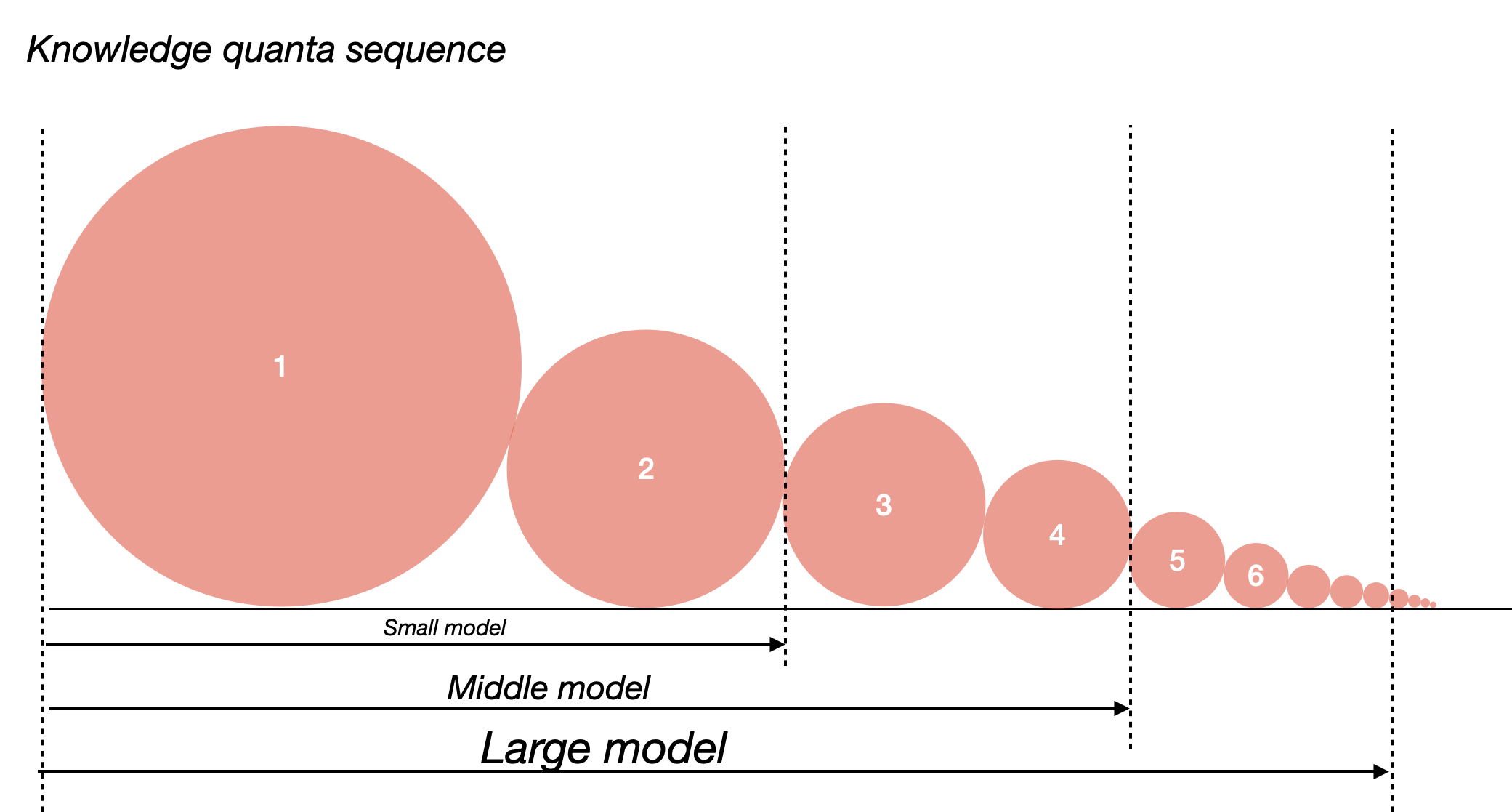

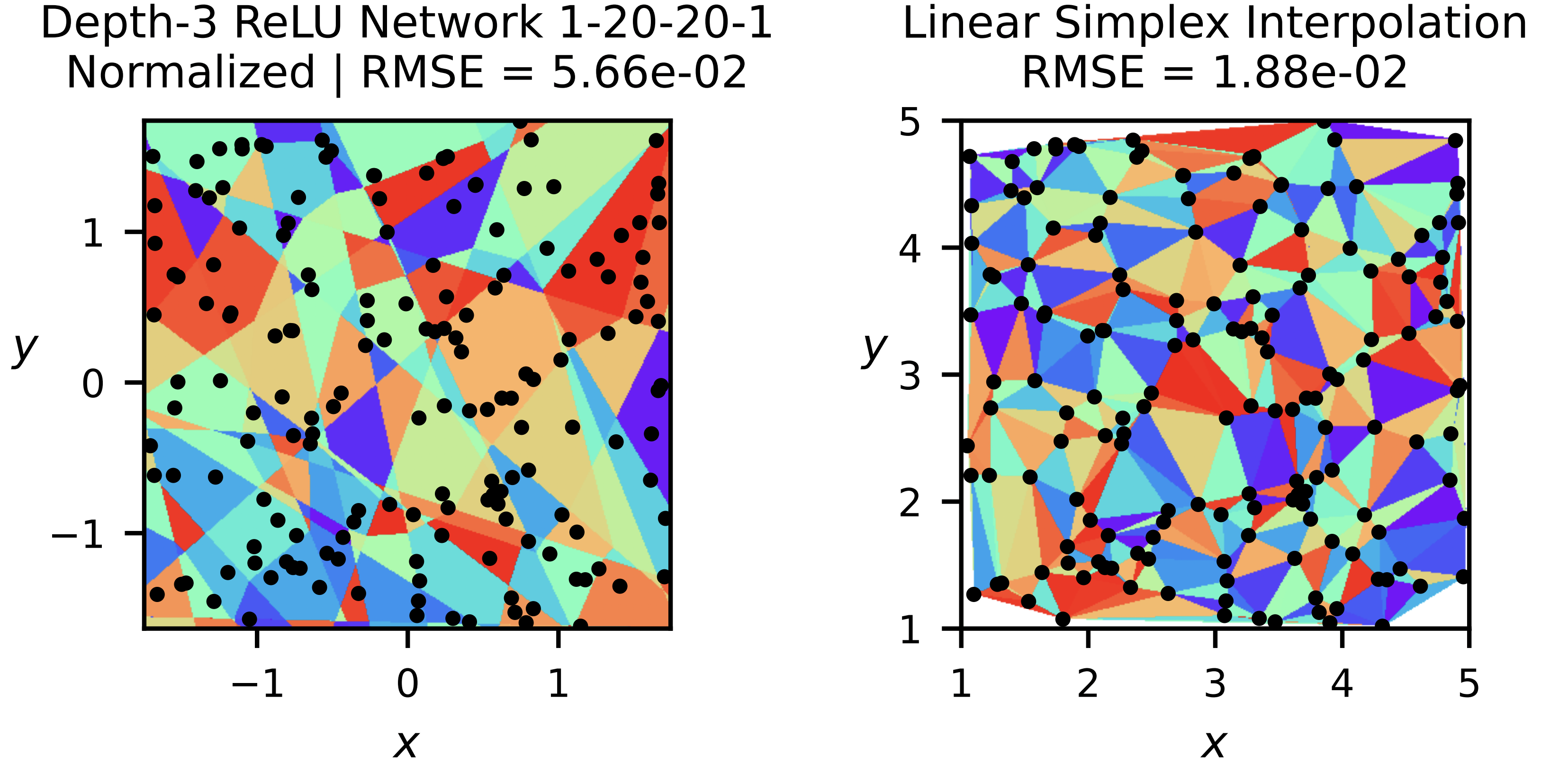

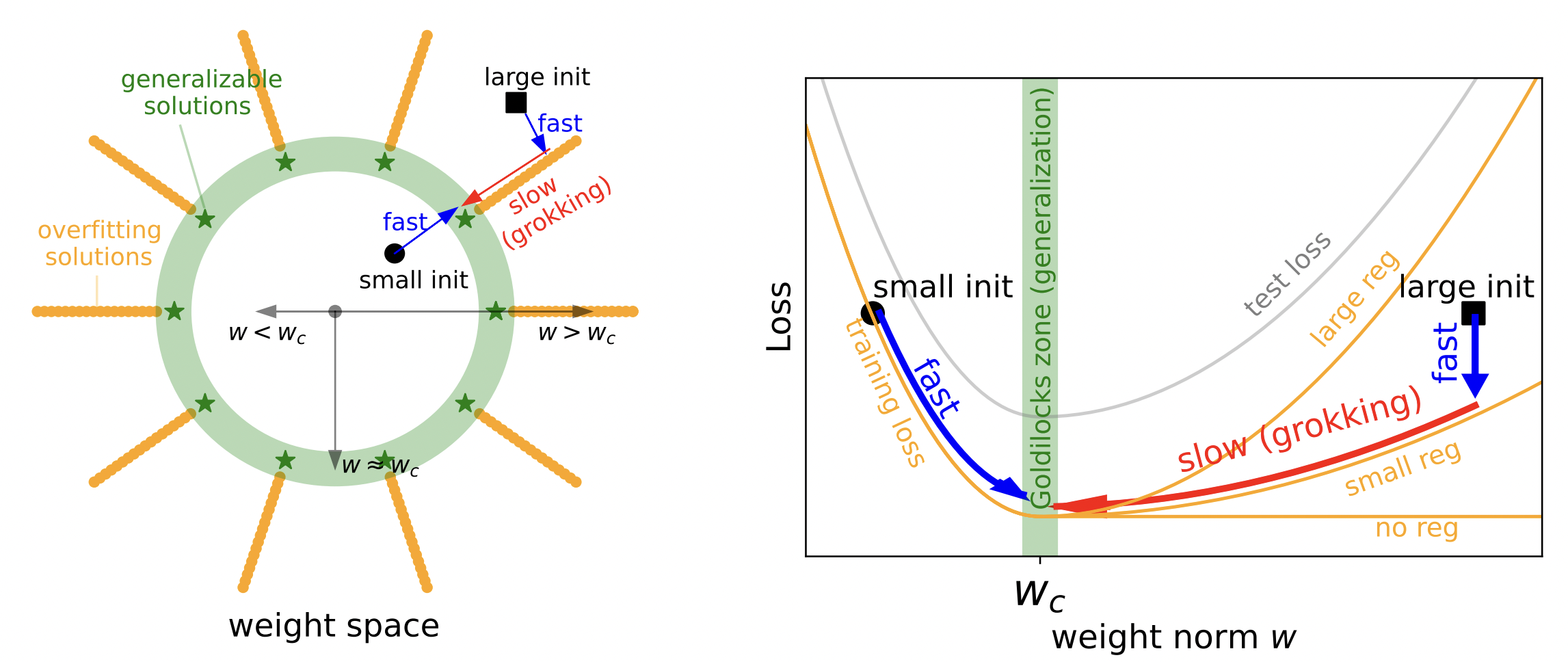

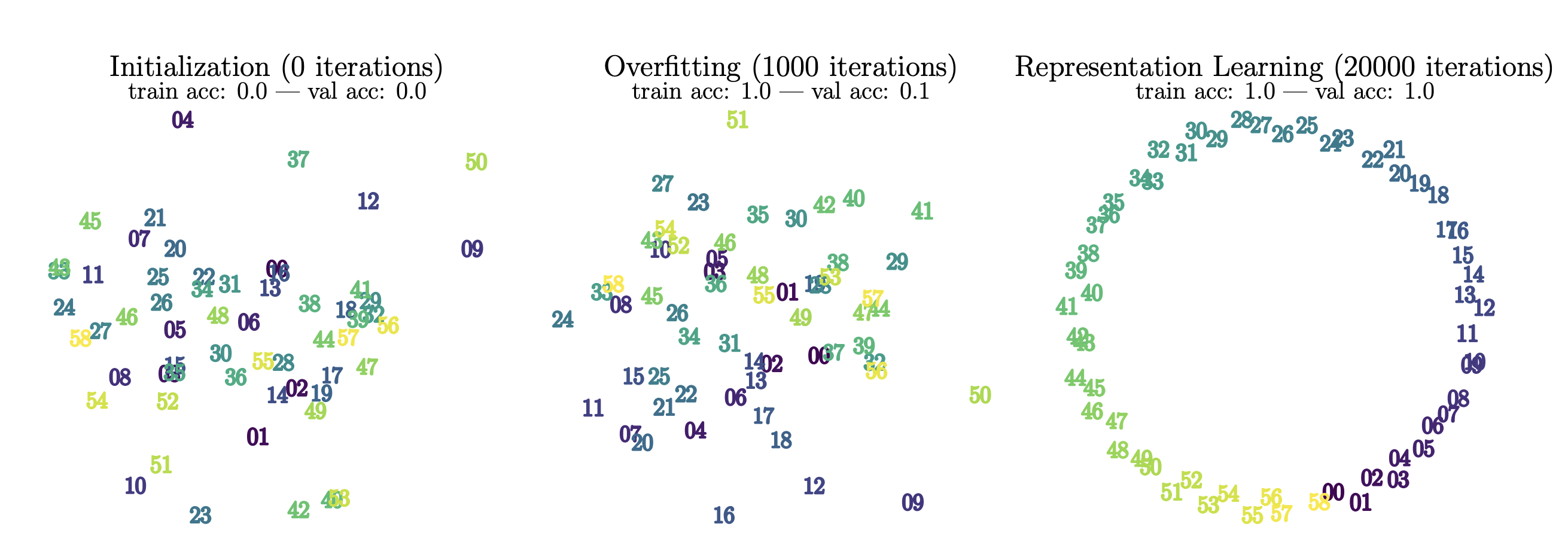

1. Physics of AI. Understanding AI from physical principles: "AI as simple as physics";

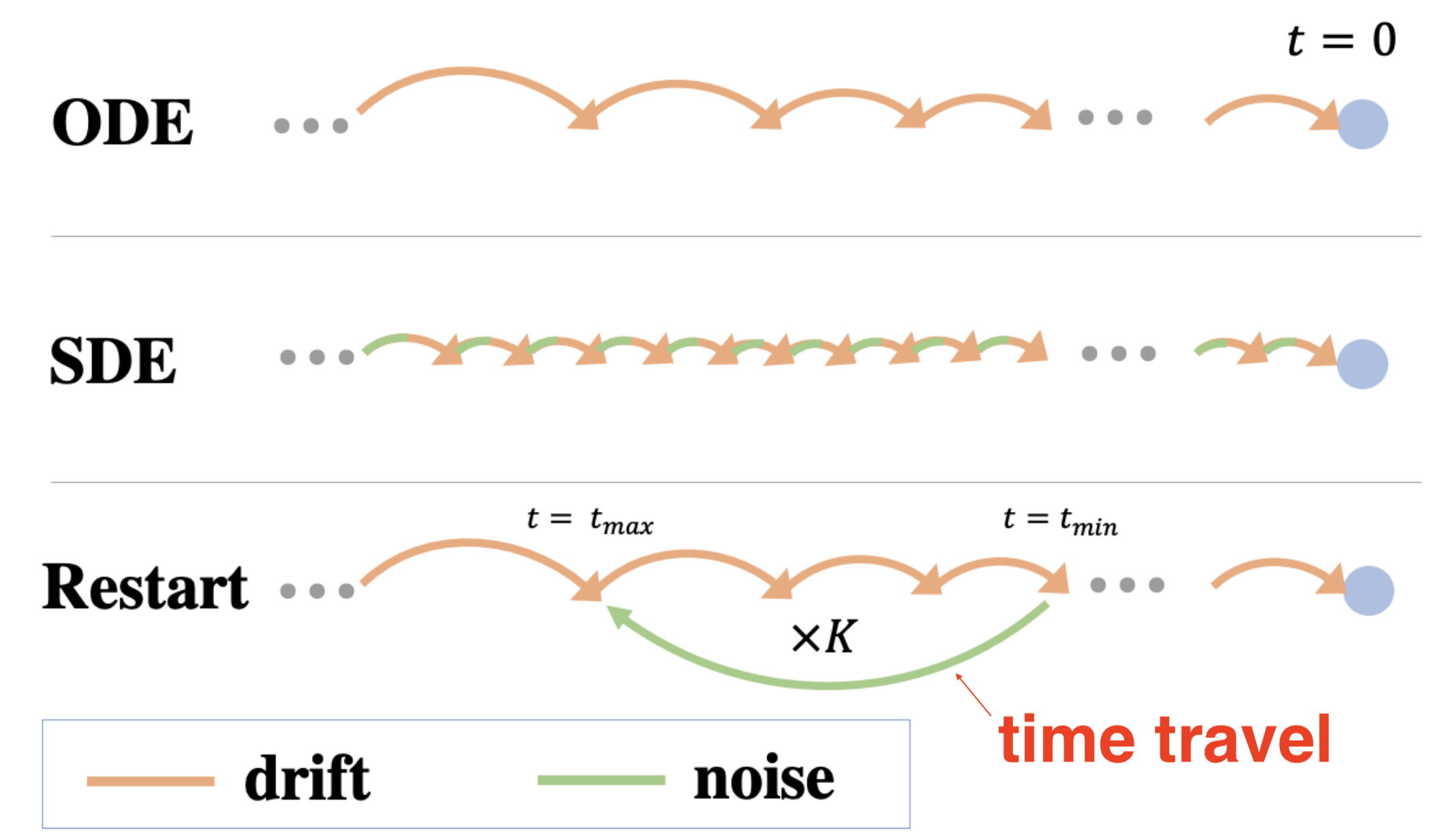

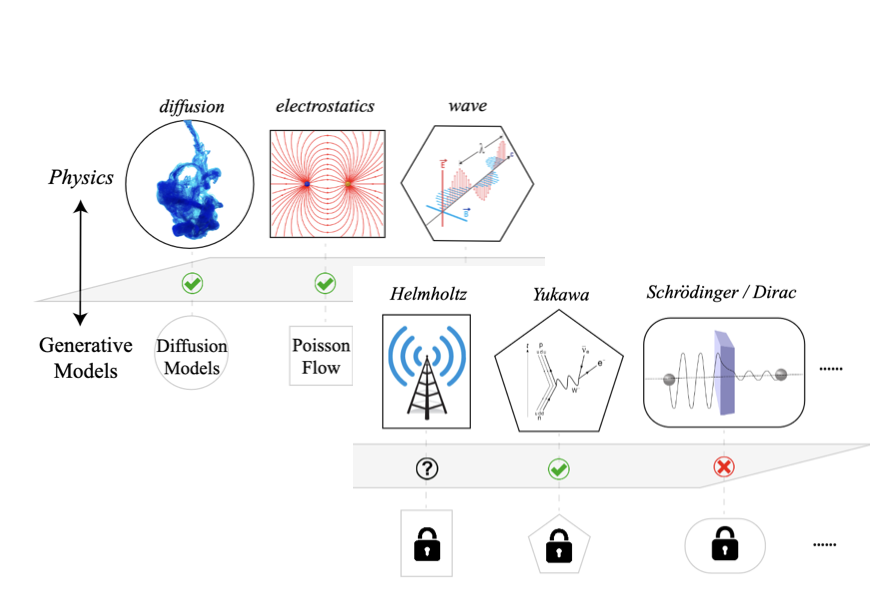

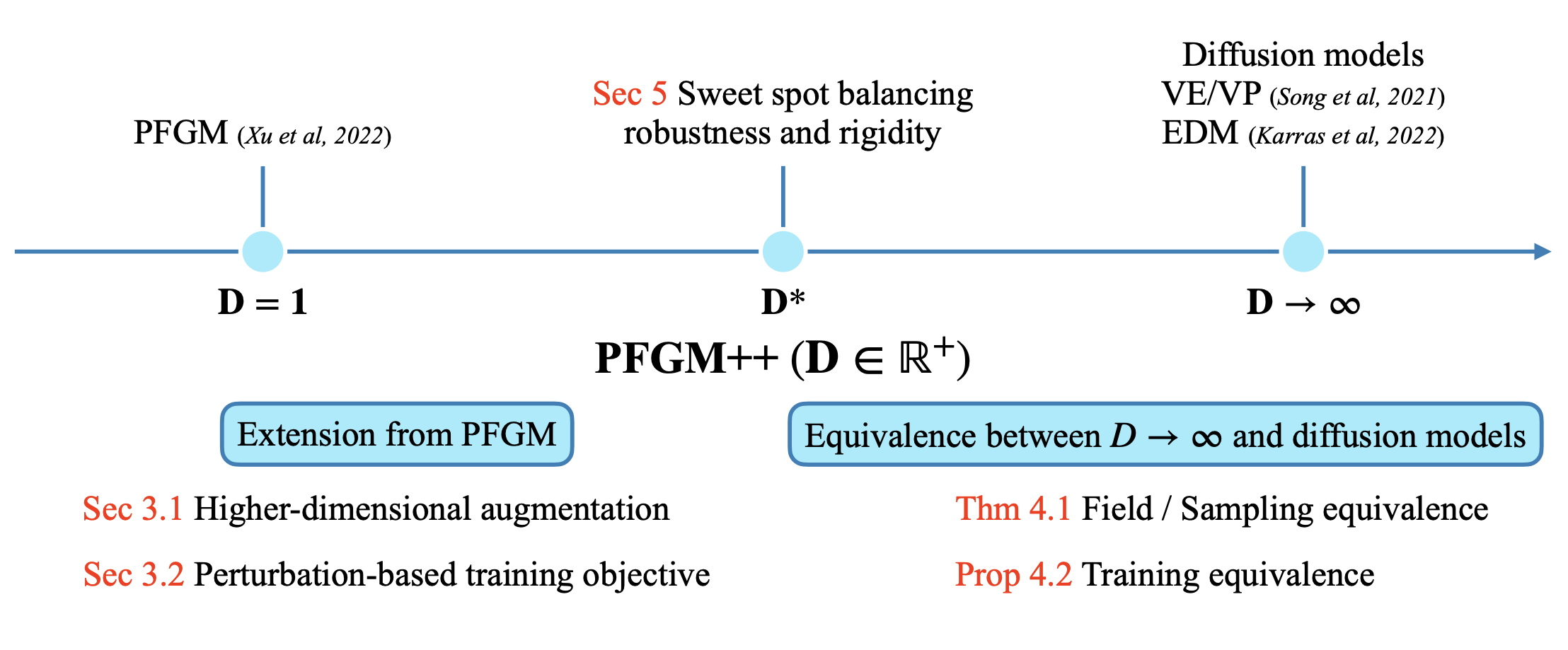

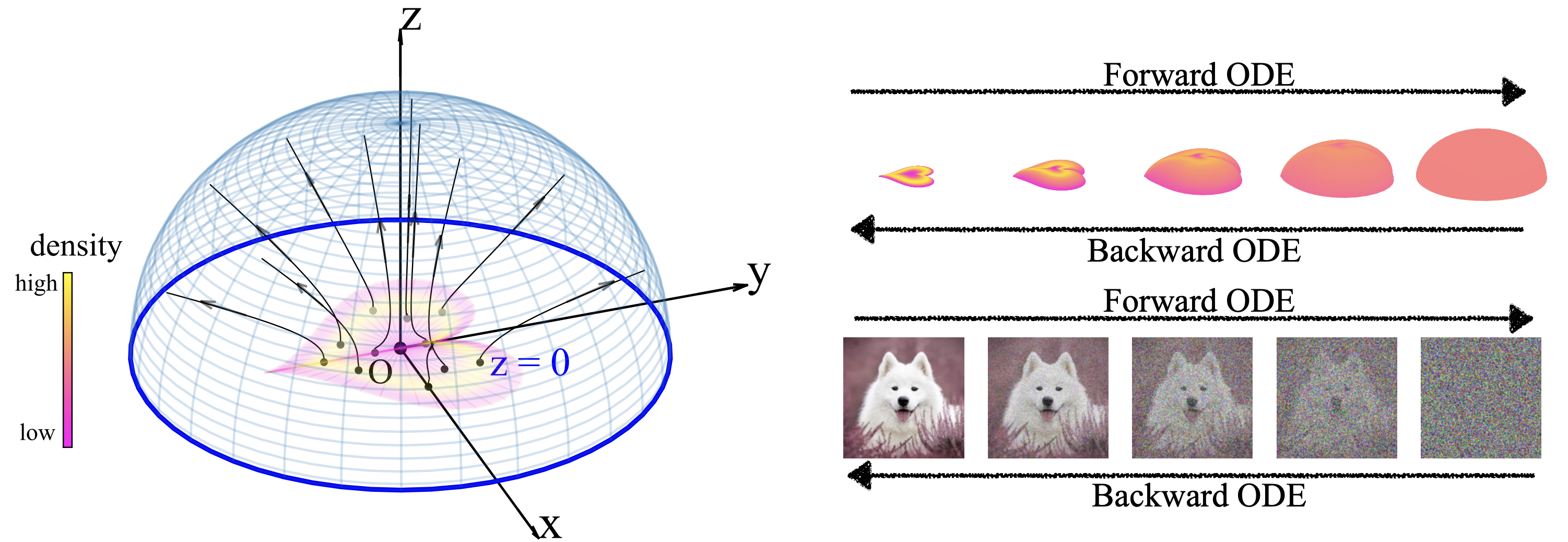

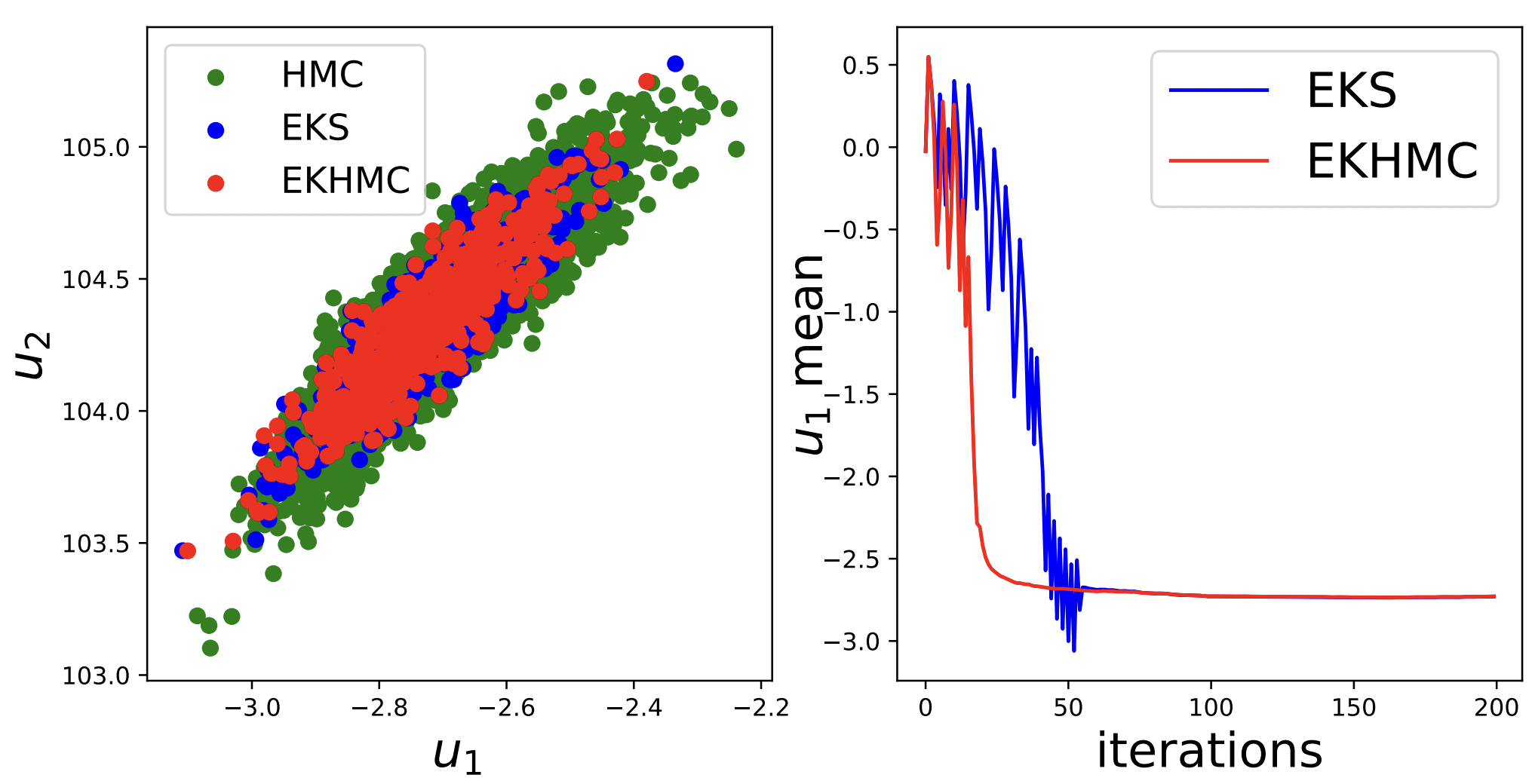

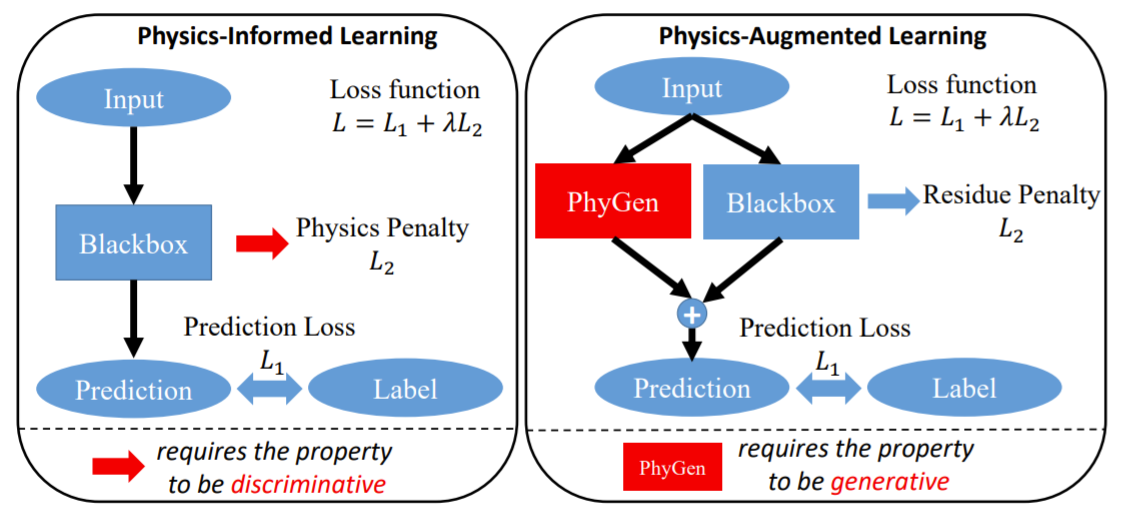

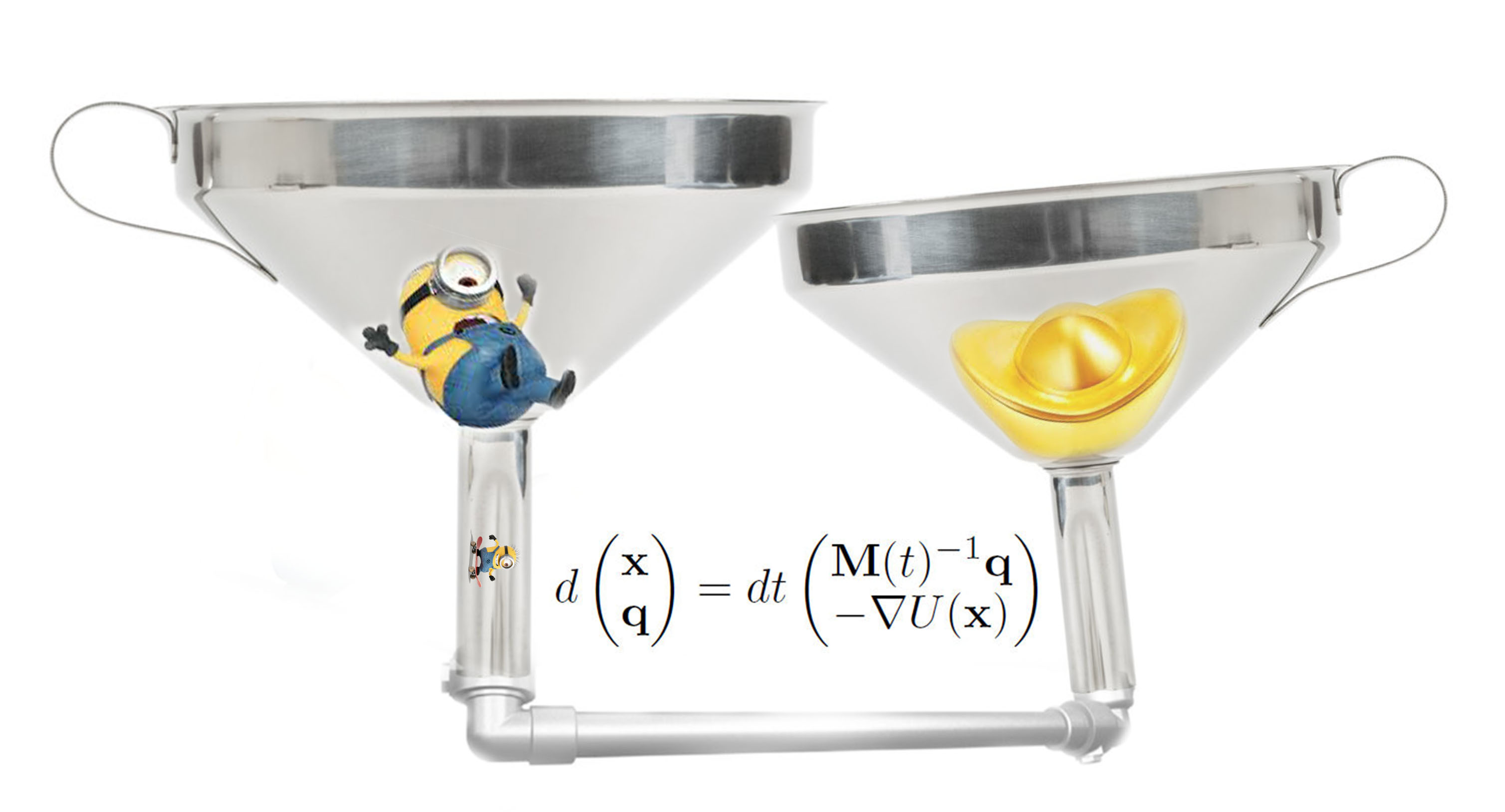

2. Physics for AI. Physics-inspired AI: "AI as natural as physics";

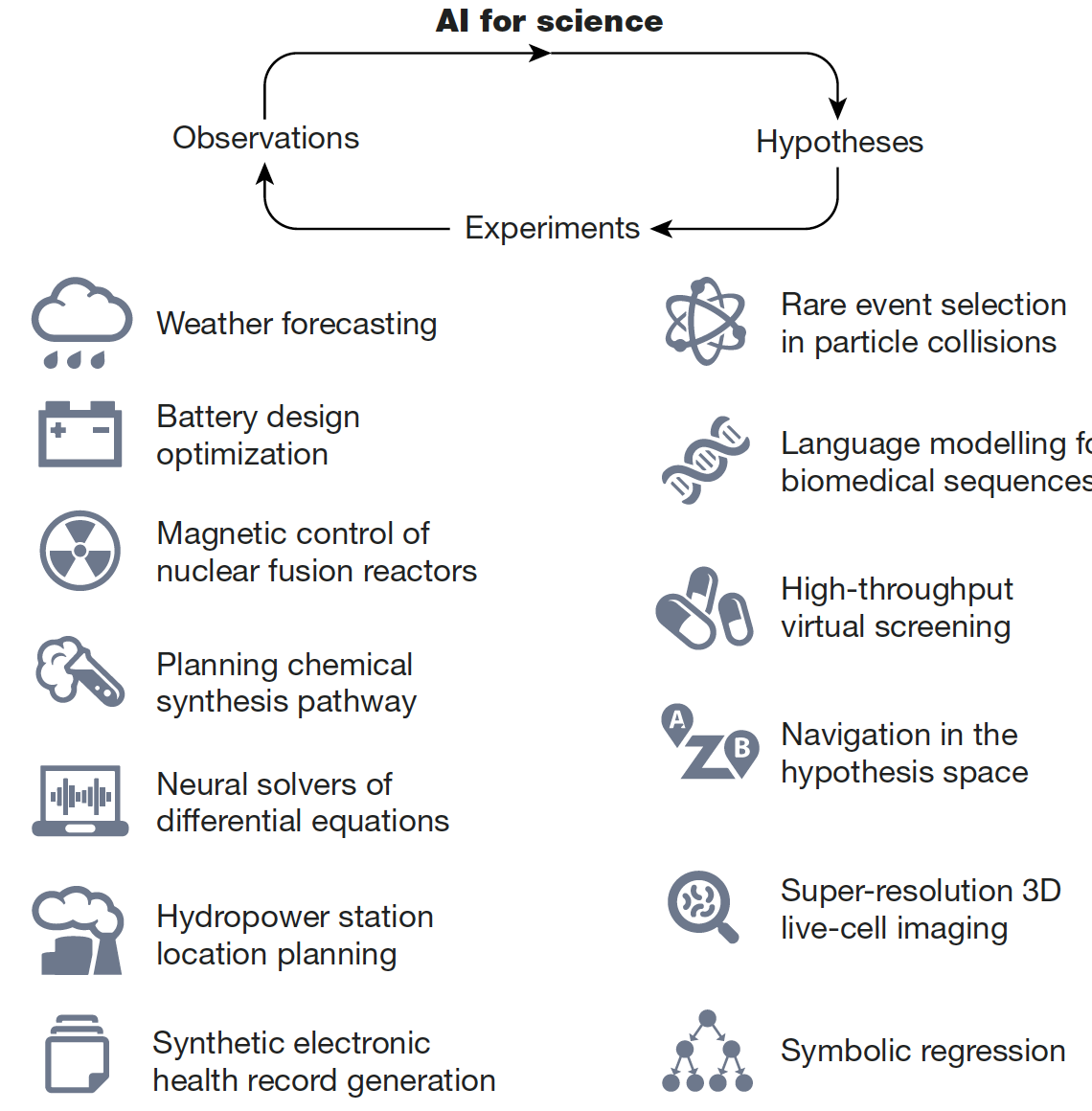

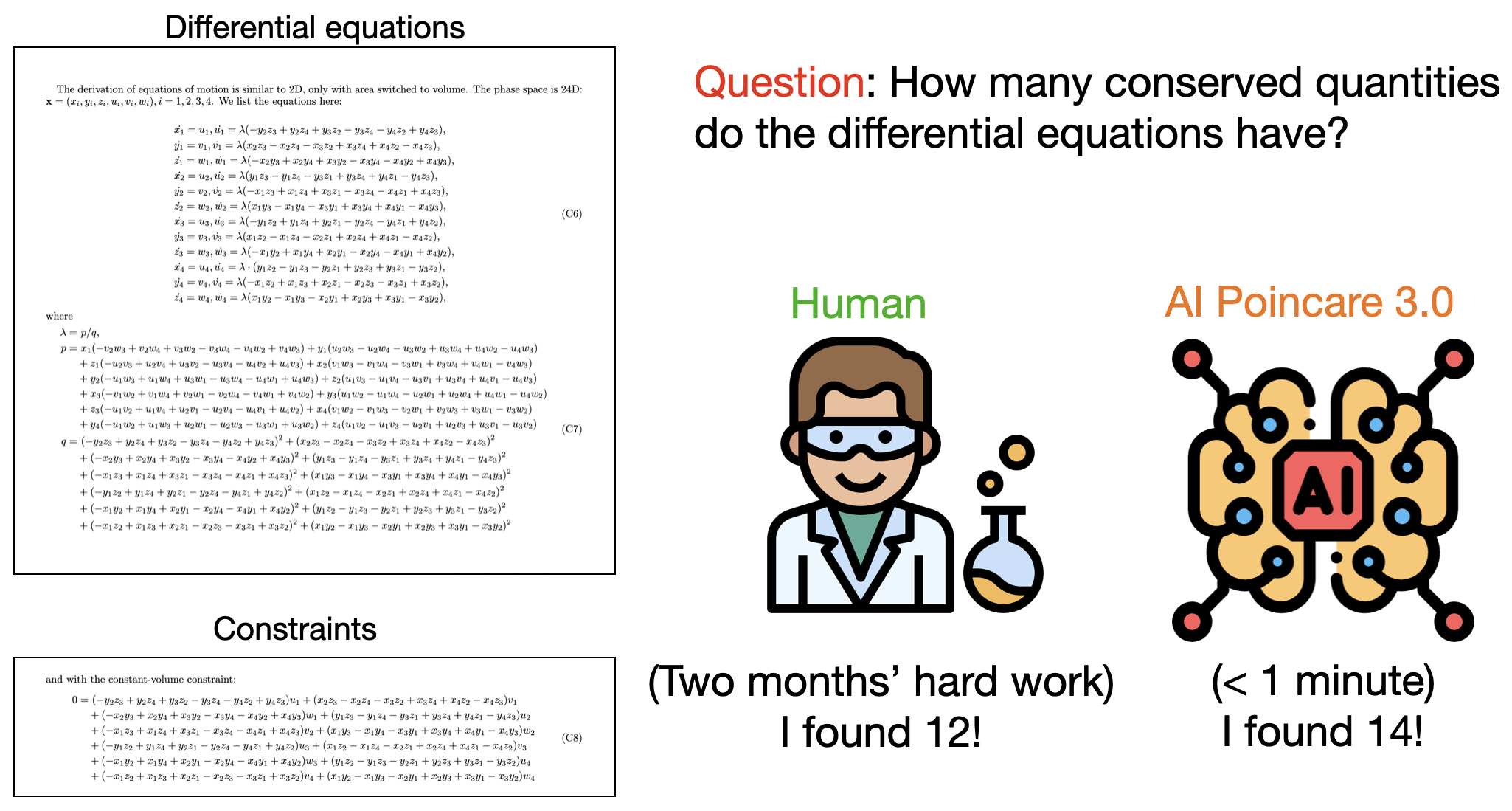

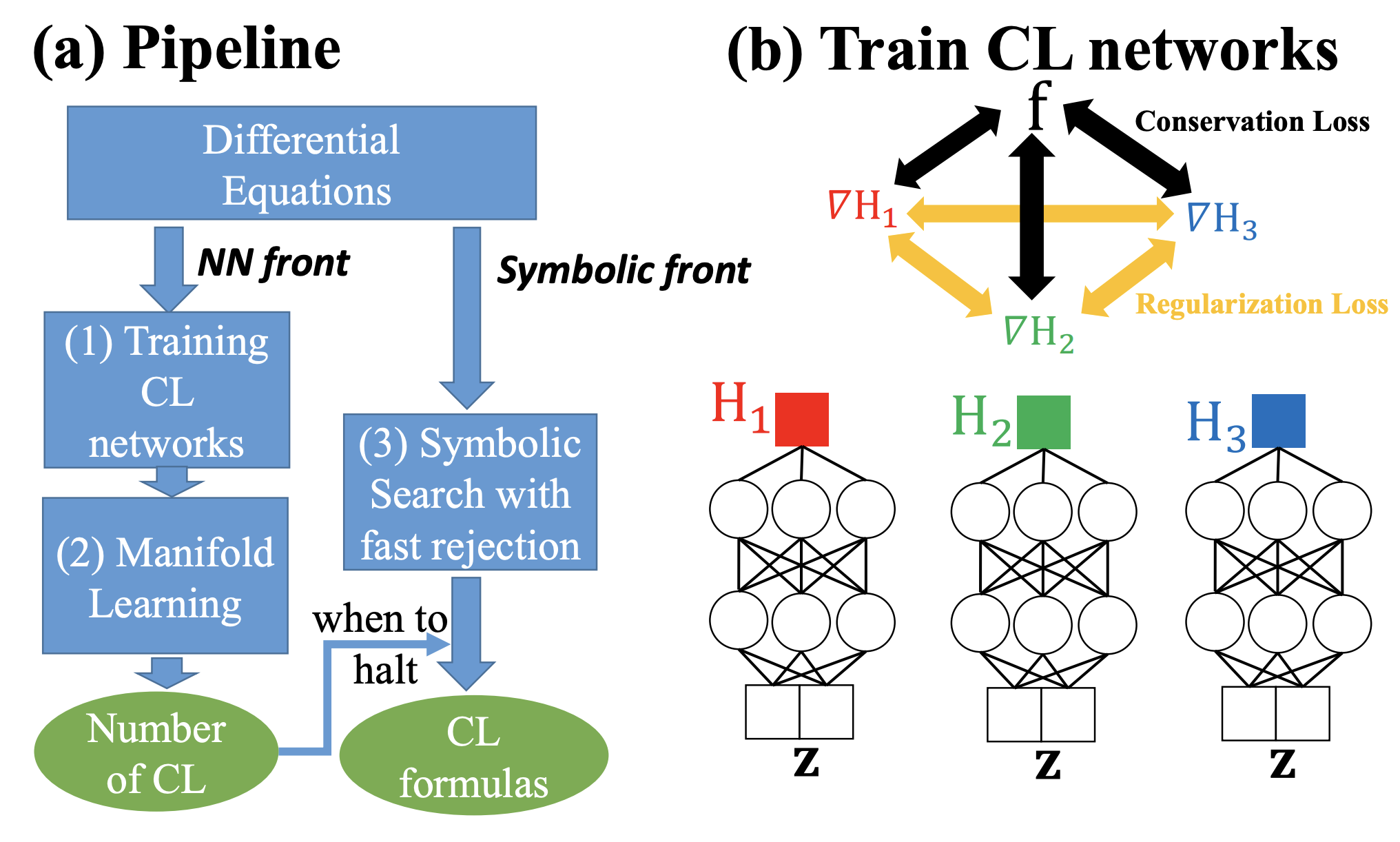

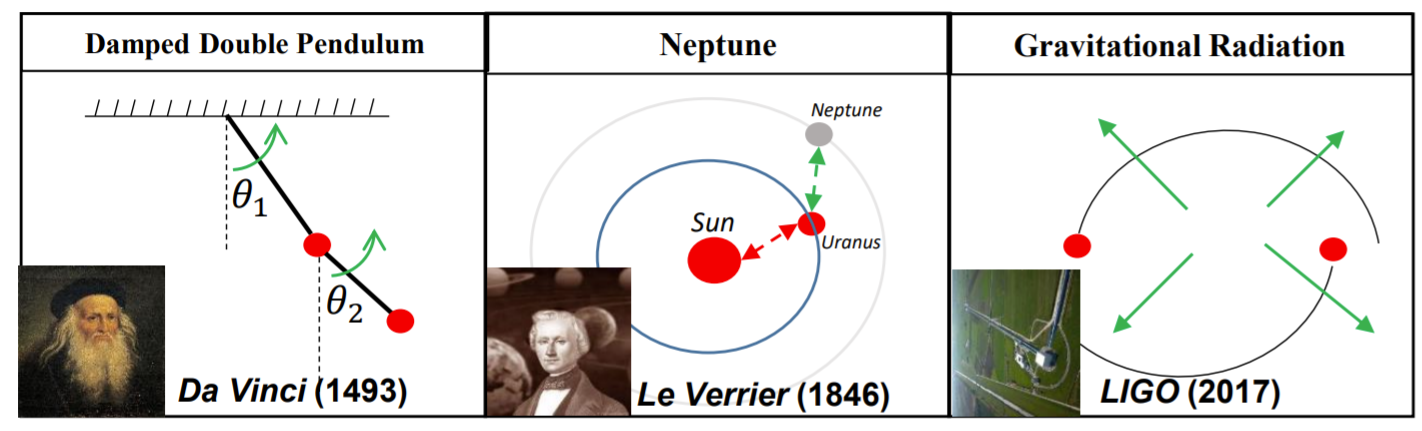

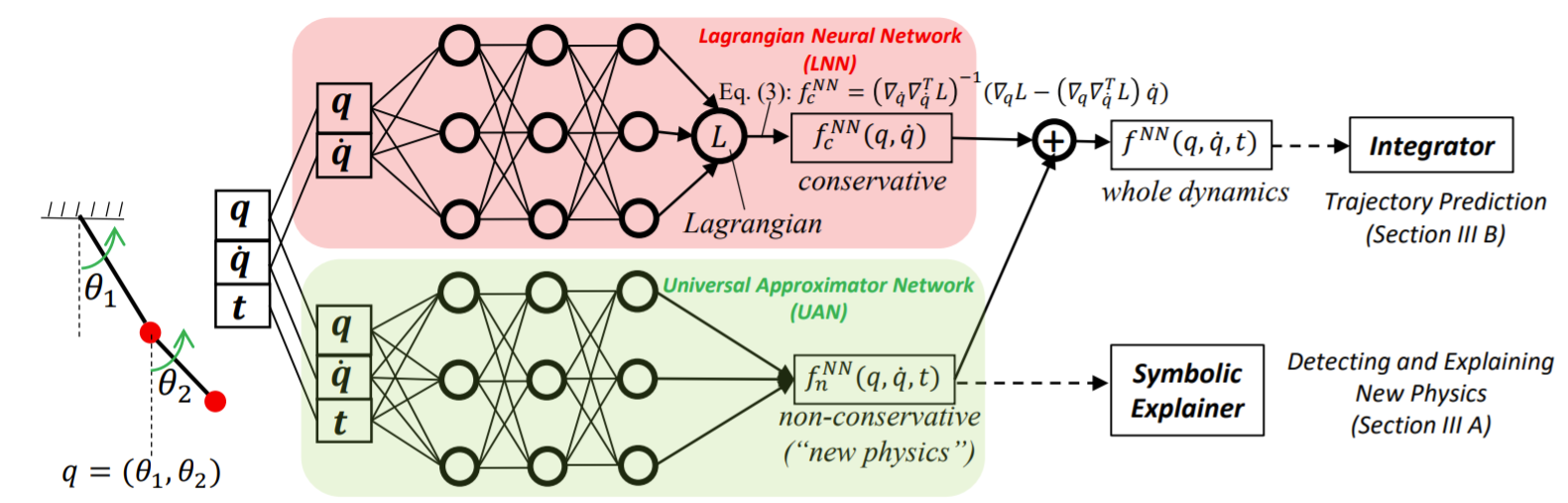

3. AI for physics. Boosting physics with AI: "AI as powerful as physicists".

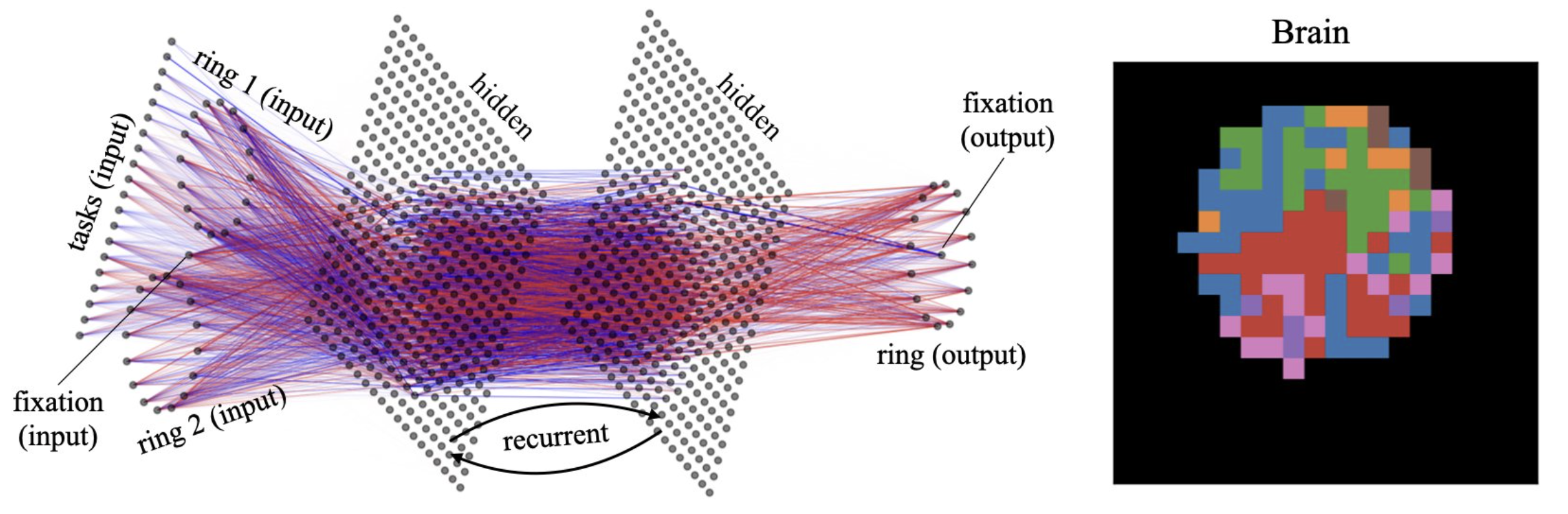

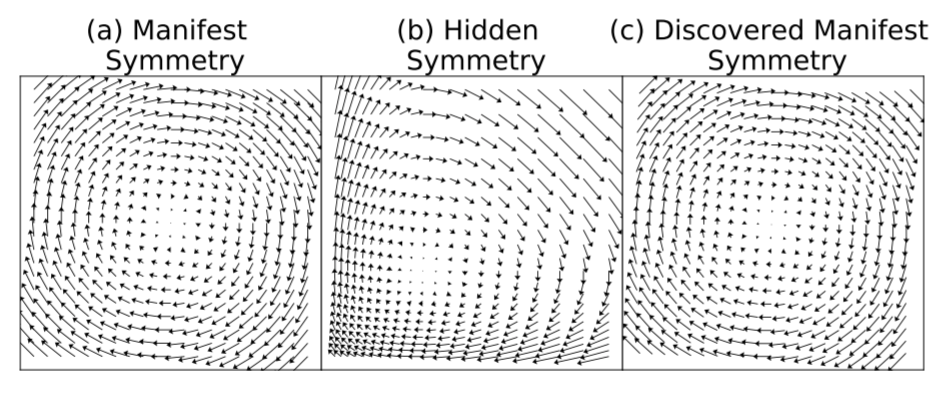

Serving the ultimate goal of building a better world using AI + Physics, I am interested in a broad range of topics, including but not limited to discovering physical laws, physics-inspired generative models, machine learning theory, and mechanistic interpretability. Because I appreciate the merit of interdisciplinary collaboration, I have formed close collaborations not only with physicists (condensed matter, high energy, quantum computation), but also with computer scientists, biologists, neuroscientists, and climate scientists. I give talks at many venues, and my work has been covered by top media. I publish papers in both top physics journals and AI conferences. I serve as a reviewer for IEEE, Physical Review, NeurIPS, ICLR, etc. I co-organized the AI4Science workshop at NeurIPS 2021 and ICML 2022.

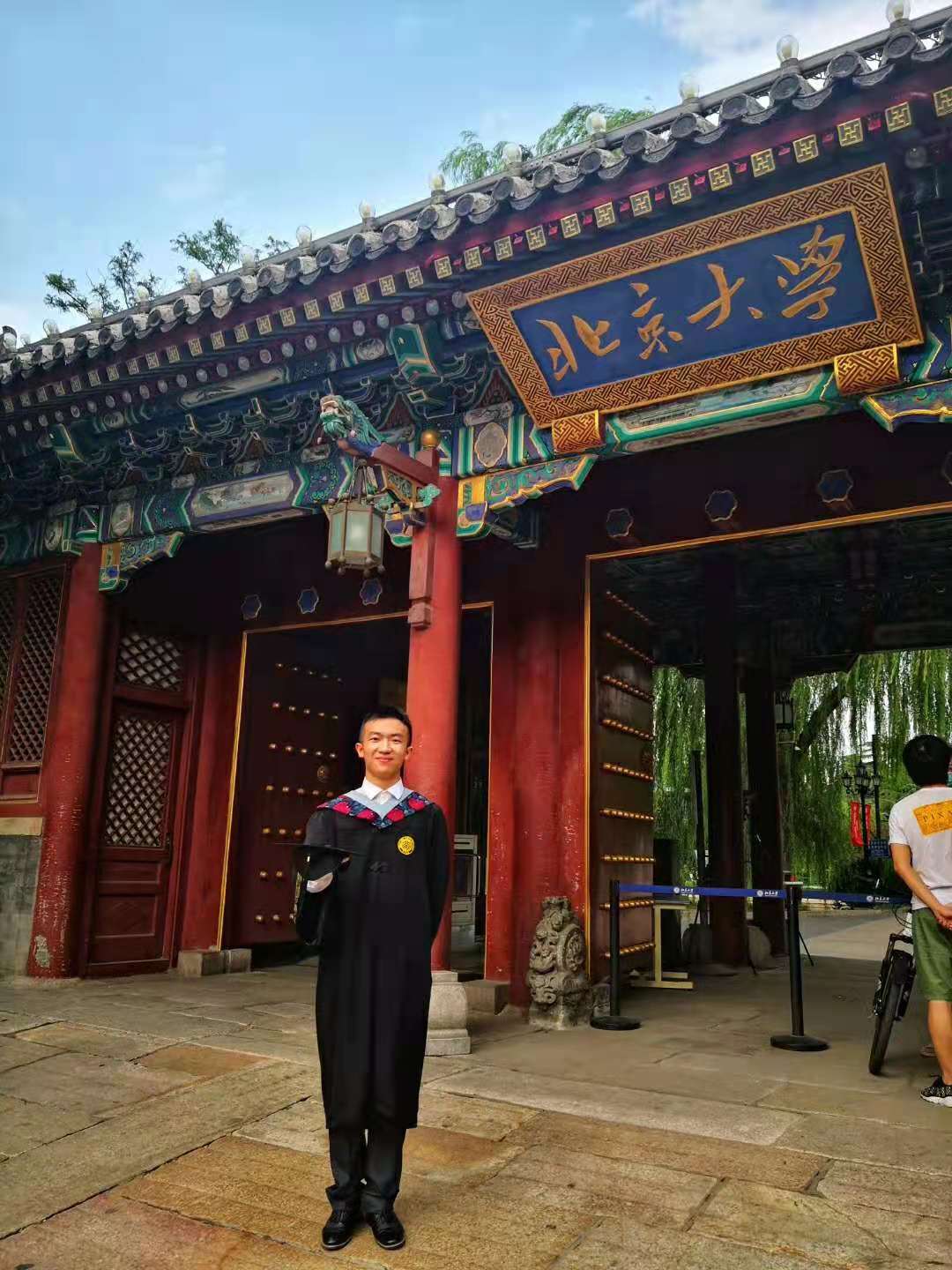

Before my PhD, I interned at Microsoft Research Asia. Before that, I obtained my B.S. from the School of Physics at Peking University. Before that, my memory is sealed in my hometown, Wuhan, China.